Fake photographs are nothing new. In the 1910s, British author Arthur Conan Doyle was famously deceived by two school-aged cousins who had produced photographs of elegant fairies cavorting in their garden.

Today it is hard to believe these photos could have fooled anyone, but it was not until the 1980s that an expert named Geoffrey Crawley had the nerve to directly apply his knowledge of film photography and deduce the obvious.

The photographs were fake, as one of the cousins later admitted.

Hunting for Artifacts and Common Sense

Digital photography has opened up a wealth of techniques for fakers and detectives alike.

Forensic examination of suspect images nowadays involves hunting for qualities inherent to digital photography: examining metadata embedded in the photos, using software such as Adobe Photoshop to correct distortions in images, and searching for telltale signs of manipulation, such as regions that have been duplicated to obscure original features.
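The search for duplicated regions can be illustrated with a minimal sketch. It assumes a grayscale image stored as a plain list of rows, and it only finds exact copies; the function name `find_duplicate_blocks` and the tiny test image are hypothetical, and real forensic tools use approximate matching because recompression alters copied pixels slightly.

```python
from collections import defaultdict


def find_duplicate_blocks(image, block=2):
    """Group top-left (row, col) positions whose block x block pixels match.

    `image` is a list of equal-length rows of grayscale integers.
    """
    height, width = len(image), len(image[0])
    seen = defaultdict(list)
    for r in range(height - block + 1):
        for c in range(width - block + 1):
            patch = tuple(
                tuple(image[r + dr][c:c + block]) for dr in range(block)
            )
            # Skip flat patches: uniform sky or shadow repeats naturally.
            if len({v for row in patch for v in row}) == 1:
                continue
            seen[patch].append((r, c))
    return [positions for positions in seen.values() if len(positions) > 1]


# Made-up 4x6 image in which every pixel value is unique.
image = [
    [10, 11, 12, 13, 14, 15],
    [20, 21, 22, 23, 24, 25],
    [30, 31, 32, 33, 34, 35],
    [40, 41, 42, 43, 44, 45],
]

# Simulate a clone-stamp edit: copy the 2x2 patch at (0, 0) over (2, 4).
for dr in range(2):
    for dc in range(2):
        image[2 + dr][4 + dc] = image[dr][dc]

duplicates = find_duplicate_blocks(image)
```

Each group in `duplicates` lists the positions that share identical contents, which is where an examiner would look more closely.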

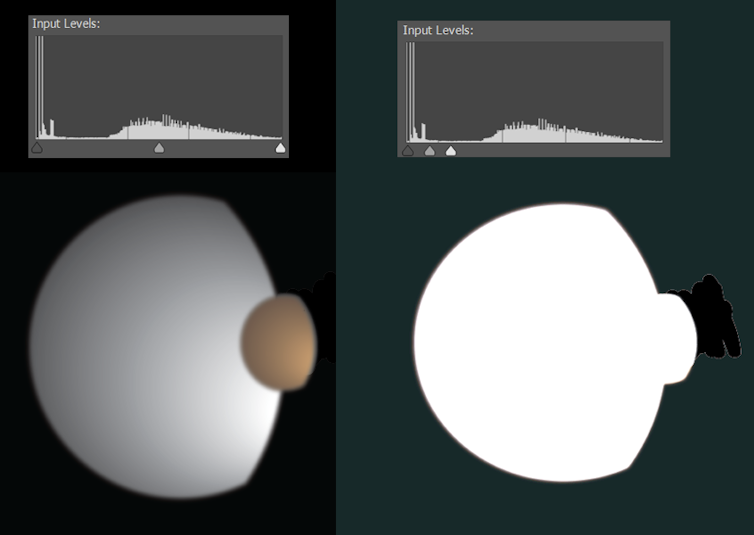

Sometimes digital edits are too subtle to detect, but leap into view when we adjust the way light and dark pixels are distributed. For example, in 2010 NASA released a photo of Saturn’s moons Dione and Titan. It was in no way fake, but it had been cleaned up to remove stray artifacts, which got the attention of conspiracy theorists.

Curious, I put the image into Photoshop. The illustration below recreates roughly how this looked.
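The adjustment itself is simple to sketch in code. The following is a minimal illustration of a linear levels stretch, not the Photoshop procedure used on the NASA image; the function name `stretch_levels` and the pixel values are made up for the example.

```python
def stretch_levels(pixels, low, high):
    """Remap grayscale values so `low` becomes 0 and `high` becomes 255.

    Values outside the [low, high] range are clipped.
    """
    if high <= low:
        raise ValueError("high must be greater than low")
    scale = 255 / (high - low)
    return [
        [min(255, max(0, round((v - low) * scale))) for v in row]
        for row in pixels
    ]


# A near-black patch with a faint retouched square (3s among 2s).
# On screen, values of 2 and 3 out of 255 look identical.
sky = [
    [2, 2, 2, 2],
    [2, 3, 3, 2],
    [2, 3, 3, 2],
    [2, 2, 2, 2],
]

revealed = stretch_levels(sky, low=2, high=3)
```

After the stretch, the retouched square becomes pure white on a pure black background, so a one-level difference that was invisible becomes obvious.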

Most digital images are in compressed formats such as JPEG, slimmed down by removing much of the information captured by the camera. Standardized algorithms ensure the information removed has minimal visible impact, but it does leave traces.

The compression of any region of an image depends on what is going on in the image and on the camera settings at the time; when a fake image combines multiple sources, it is often possible to detect this by careful analysis of the compression artifacts.
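A toy model shows why mixed sources leave mismatched traces. This sketch makes strong simplifying assumptions: it quantizes pixel values directly, whereas real JPEG quantizes frequency coefficients of 8x8 blocks, and it assumes the composite was not recompressed after pasting. The step sizes and pixel values are invented for illustration.

```python
def quantize(values, step):
    """Round each value to the nearest multiple of `step` (lossy)."""
    return [step * round(v / step) for v in values]


def mean_requantization_error(values, step):
    """Average change caused by quantizing `values` again with `step`."""
    requantized = quantize(values, step)
    return sum(abs(a - b) for a, b in zip(values, requantized)) / len(values)


original = [37, 90, 141, 203]

# The background was saved with a coarse step of 16.
background = quantize(original, 16)

# The pasted region came from a different source, saved with a step of 10.
pasted = quantize(original, 10)

# Re-apply the background's step to both regions and compare the damage.
background_error = mean_requantization_error(background, 16)
pasted_error = mean_requantization_error(pasted, 16)
```

The background is unchanged by a second pass with its own step, so its error is zero, while the pasted region shifts noticeably. A difference of that kind between regions is the sort of inconsistency a compression analysis looks for.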

Some forensic methodology has little to do with the format of an image, but is essentially visual detective work. Is everyone in the photograph lit in the same way? Do shadows and reflections make sense? Are ears and hands showing light and shadow in the right places? What is reflected in people’s eyes? Would all the lines and angles of the room add up if we modeled the scene in 3D?

Arthur Conan Doyle may have been fooled by fairy photos, but I think his creation Sherlock Holmes would be right at home in the world of forensic photo analysis.

A New Era of Artificial Intelligence

The current explosion of images created by text-to-image artificial intelligence tools is in many ways more radical than the shift from film to digital photography.

We can now conjure any image we want, just by typing. These images are not franken-photos made by cobbling together pre-existing clumps of pixels. They are entirely new images with the content, quality, and style specified.

Until recently, the complex neural networks used to generate these images had limited availability to the public. This changed on August 23, 2022, with the public release of the open-source Stable Diffusion. Now anyone with a gaming-level Nvidia graphics card in their computer can create AI image content without any research lab or business gatekeeping their activities.

This has prompted many to ask, “Can we ever believe what we see online again?” That depends.

Text-to-image AI gets its smarts from training: the analysis of a large number of image/caption pairs. The strengths and weaknesses of each system derive partly from which images it has been trained on. Here is an example: this is how Stable Diffusion sees George Clooney doing his ironing.

This is far from realistic. All Stable Diffusion has to go on is the information it has learned, and while it is clear it has seen George Clooney and can link that string of letters to the actor’s features, it is not a Clooney expert.

However, it would have seen and digested many more photos of middle-aged men in general, so let’s see what happens when we ask for a generic middle-aged man in the same scenario.

This is a clear improvement, but still not quite realistic. As has always been the case, hands and ears, with their tricky geometry, are good places to look for signs of fakery, although in this medium we are looking at the spatial geometry rather than the tells of impossible lighting.

There may be other clues. If we carefully reconstructed the room, would the corners be square? Would the shelves make sense? A forensic expert used to examining digital photos could probably make a call on that.

We Can No Longer Believe Our Eyes

If we extend a text-to-image system’s knowledge, it can do even better. You can add your own described photos to supplement existing training. This process is called textual inversion.

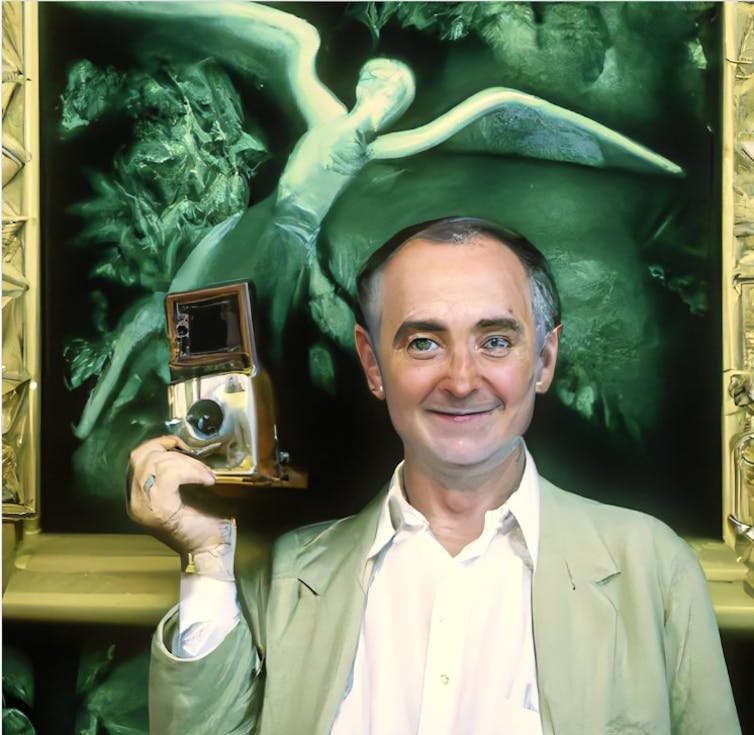

Recently, Google has released DreamBooth, an alternative, more sophisticated method for injecting specific people, objects, and even art styles into text-to-image AI systems.

This process requires heavy-duty hardware, but the results are staggering. Some great work has begun to be shared on Reddit. Look at the photos in the post below, which show the images put into DreamBooth and the realistic fake images that came out of Stable Diffusion.

We can no longer believe our eyes, but we may still be able to trust those of forensics experts, at least for now. It is entirely possible that future systems could be deliberately trained to fool them too.

We are rapidly moving into an era where perfect fake photographs and even videos will be common. Time will tell how significant this will be, but in the meantime it is worth remembering the lesson of the Cottingley Fairy photos: sometimes people just want to believe, even in obvious fakes.

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Image Credit: Brendan Murphy / author supplied