Let me introduce you to Philip Nitschke, also known as “Dr. Death” or “the Elon Musk of assisted suicide.”

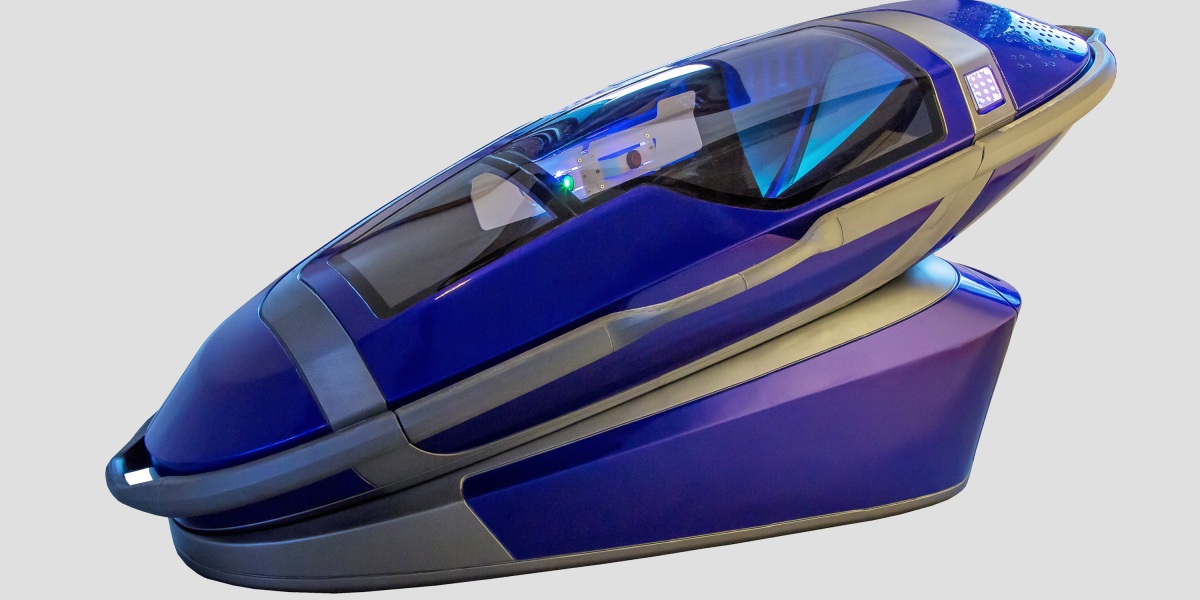

Nitschke has a curious goal: He wants to “demedicalize” death and make assisted suicide as unassisted as possible through technology. As my colleague Will Heaven reports, Nitschke has developed a coffin-size machine called the Sarco. People seeking to end their lives can enter the machine after undergoing an algorithm-based psychiatric self-assessment. If they pass, the Sarco will release nitrogen gas, which asphyxiates them in minutes. A person who has chosen to die must answer three questions: Who are you? Where are you? And do you know what will happen when you press that button?

In Switzerland, where assisted suicide is legal, candidates for euthanasia must demonstrate mental capacity, which is typically assessed by a psychiatrist. But Nitschke wants to take people out of the equation entirely.

Nitschke is an extreme example. But as Will writes, AI is already being used to triage and treat patients in a growing number of health-care fields. Algorithms are becoming an increasingly important part of care, and we must try to ensure that their role is limited to medical decisions, not moral ones.

Will explores the messy morality of efforts to develop AI that can help make life-and-death decisions here.

I’m probably not the only one who feels extremely uneasy about letting algorithms make decisions about whether people live or die. Nitschke’s work seems like a classic case of misplaced trust in algorithms’ capabilities. He’s trying to sidestep complicated human judgments by introducing a technology that could make supposedly “unbiased” and “objective” decisions.

That is a dangerous path, and we know where it leads. AI systems reflect the humans who build them, and they are riddled with biases. We’ve seen facial recognition systems that don’t recognize Black people and label them as criminals or gorillas. In the Netherlands, tax authorities used an algorithm to try to weed out benefits fraud, only to penalize innocent people, mostly lower-income people and members of ethnic minorities. This led to devastating consequences for thousands: bankruptcy, divorce, suicide, and children being taken into foster care.

As AI is rolled out in health care to help make some of the highest-stakes decisions there are, it’s more crucial than ever to critically examine how these systems are built. Even if we manage to create a perfect algorithm with zero bias, algorithms lack the nuance and complexity to make decisions about humans and society on their own. We should carefully question how much decision-making we really want to turn over to AI. There is nothing inevitable about letting it deeper and deeper into our lives and societies. That is a choice made by humans.