
Last November, we announced the 1,000 Languages Initiative, an ambitious commitment to build a machine learning (ML) model that would support the world’s one thousand most-spoken languages, bringing greater inclusion to billions of people around the globe. However, some of these languages are spoken by fewer than twenty million people, so a core challenge is how to support languages for which there are relatively few speakers or limited available data.

Today, we’re excited to share more about the Universal Speech Model (USM), a critical first step towards supporting 1,000 languages. USM is a family of state-of-the-art speech models with 2B parameters trained on 12 million hours of speech and 28 billion sentences of text, spanning 300+ languages. USM, which is for use in YouTube (e.g., for closed captions), can perform automatic speech recognition (ASR) not only on widely spoken languages like English and Mandarin, but also on under-resourced languages like Amharic, Cebuano, Assamese, and Azerbaijani, to name a few. In “Google USM: Scaling Automatic Speech Recognition Beyond 100 Languages”, we demonstrate that using a large unlabeled multilingual dataset to pre-train the encoder of the model, followed by fine-tuning on a smaller set of labeled data, enables us to recognize under-represented languages. Moreover, our model training process is effective at adapting to new languages and data.

| A sample of the languages that USM supports. |

Challenges in current ASR

To accomplish this ambitious goal, we need to address two critical challenges in ASR.

First, conventional supervised learning approaches do not scale. A fundamental challenge of scaling speech technologies to many languages is obtaining enough data to train high-quality models. With conventional approaches, audio data must either be manually labeled, which is time-consuming and costly, or collected from sources with pre-existing transcriptions, which are harder to find for languages that lack broad representation. In contrast, self-supervised learning can leverage audio-only data, which is available in much larger quantities across languages. This makes self-supervision a better approach for our goal of scaling across hundreds of languages.

Another challenge is that models must improve in a computationally efficient manner while we expand language coverage and quality. This requires the learning algorithm to be flexible, efficient, and generalizable. More specifically, such an algorithm should be able to use large amounts of data from a variety of sources, enable model updates without requiring complete retraining, and generalize to new languages and use cases.

Our approach: Self-supervised learning with fine-tuning

USM uses the standard encoder-decoder architecture, where the decoder can be CTC, RNN-T, or LAS. For the encoder, USM uses the Conformer, or convolution-augmented transformer. The key component of the Conformer is the Conformer block, which consists of attention, feed-forward, and convolutional modules. The encoder takes the log-mel spectrogram of the speech signal as input and performs convolutional sub-sampling, after which a series of Conformer blocks and a projection layer are applied to obtain the final embeddings.
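To make the data flow concrete, here is a minimal stdlib-only sketch of the encoder path: log-mel frames, then sub-sampling, then a stack of Conformer blocks, then a projection. It is not USM's implementation. Assumptions: the block ordering (half-step feed-forward, self-attention, convolution, half-step feed-forward, final LayerNorm) follows the original Conformer paper rather than anything stated here; the 4x sub-sampling factor is illustrative; sub-sampling is approximated by frame averaging instead of strided convolutions; and the attention, convolution, and feed-forward modules are hypothetical callables supplied by the caller.

```python
from typing import Callable, List

Vec = List[float]  # one frame: a vector of features
Seq = List[Vec]    # a sequence of frames over time


def add(a: Vec, b: Vec, scale: float = 1.0) -> Vec:
    """Residual connection: a + scale * b, element-wise."""
    return [x + scale * y for x, y in zip(a, b)]


def layer_norm(v: Vec, eps: float = 1e-5) -> Vec:
    """Normalize one frame to zero mean and unit variance (no learned gain/bias)."""
    mean = sum(v) / len(v)
    var = sum((x - mean) ** 2 for x in v) / len(v)
    return [(x - mean) / (var + eps) ** 0.5 for x in v]


def subsample(frames: Seq, factor: int = 4) -> Seq:
    """Stand-in for convolutional sub-sampling (assumed 4x): average each
    non-overlapping window of `factor` frames; a trailing partial window is dropped."""
    return [
        [sum(col) / factor for col in zip(*frames[t:t + factor])]
        for t in range(0, len(frames) - factor + 1, factor)
    ]


def conformer_block(seq: Seq, ffn1: Callable[[Vec], Vec], mhsa: Callable[[Seq], Seq],
                    conv: Callable[[Seq], Seq], ffn2: Callable[[Vec], Vec]) -> Seq:
    """Macaron-style block as in the Conformer paper, with hypothetical modules."""
    seq = [add(x, ffn1(x), 0.5) for x in seq]           # half-step feed-forward
    seq = [add(x, y) for x, y in zip(seq, mhsa(seq))]   # self-attention over time
    seq = [add(x, y) for x, y in zip(seq, conv(seq))]   # convolution module
    seq = [add(x, ffn2(x), 0.5) for x in seq]           # second half-step feed-forward
    return [layer_norm(x) for x in seq]                 # final LayerNorm


def encode(log_mel: Seq, blocks: List[Callable[[Seq], Seq]],
           project: Callable[[Vec], Vec]) -> Seq:
    """Log-mel frames -> sub-sampling -> Conformer blocks -> projection layer."""
    seq = subsample(log_mel)
    for block in blocks:
        seq = block(seq)
    return [project(x) for x in seq]


if __name__ == "__main__":
    # Toy modules that output zeros, just to exercise the wiring.
    zero_ffn = lambda v: [0.0] * len(v)
    zero_seq = lambda s: [[0.0] * len(v) for v in s]
    block = lambda s: conformer_block(s, zero_ffn, zero_seq, zero_seq, zero_ffn)
    log_mel = [[float(t), t + 1.0, t + 2.0] for t in range(8)]  # 8 frames x 3 mel bins
    emb = encode(log_mel, [block, block], project=lambda v: v[:2])
    print(len(emb), len(emb[0]))  # 2 2
```

Passing the modules in as callables keeps the sketch focused on how residual connections and sub-sampling shape the sequence; a real encoder would learn those modules.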

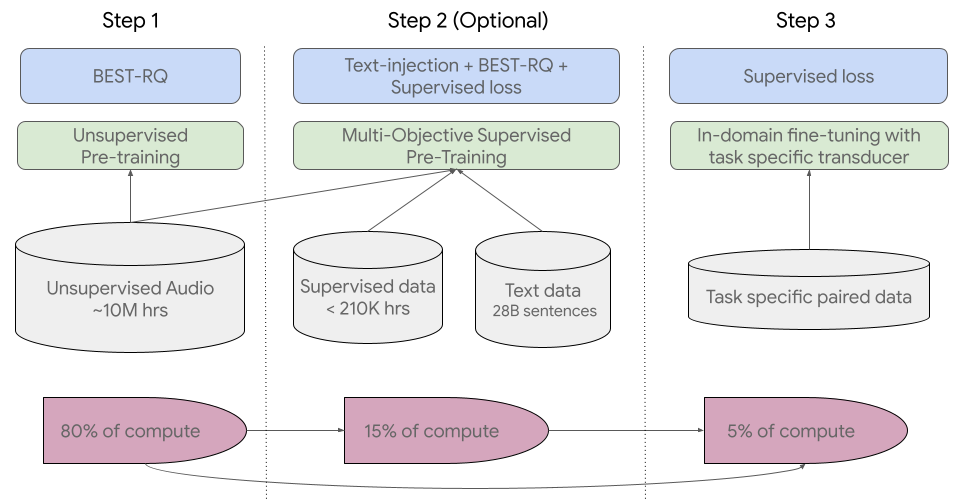

Our training pipeline starts with self-supervised learning on speech audio covering hundreds of languages. In the second, optional step, the model’s quality and language coverage can be improved through an additional pre-training step with text data. Whether to include the second step depends on whether text data is available; USM performs best with it. The last step of the training pipeline is to fine-tune on downstream tasks (e.g., ASR or automatic speech translation) with a small amount of supervised data.
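The control flow of the three-step pipeline, including the conditional text stage, can be summarized in a small sketch. It only plans which stages would run and performs no training; the function name `plan_training` and its inputs are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple


def plan_training(unlabeled_audio: Sequence[str],
                  labeled_pairs: Sequence[Tuple[str, str]],
                  text: Optional[Sequence[str]] = None) -> List[str]:
    """Return the ordered stages that would run (hypothetical planner, no training)."""
    stages = [f"1. self-supervised pre-training on {len(unlabeled_audio)} audio clips"]
    if text:  # the optional stage runs only when text data is available
        stages.append(f"2. text-injected pre-training on {len(text)} sentences")
    stages.append(f"3. supervised fine-tuning on {len(labeled_pairs)} labeled pairs")
    return stages


if __name__ == "__main__":
    for stage in plan_training(["a.wav", "b.wav"], [("a.wav", "hello")], text=["hello world"]):
        print(stage)
```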

For the first step, we use BEST-RQ, which has already demonstrated state-of-the-art results on multilingual tasks and has proven to be efficient when using very large amounts of unsupervised audio data.
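BEST-RQ gets its training targets from a random-projection quantizer: a randomly initialized, frozen projection matrix and a frozen random codebook map each speech frame to a discrete label, and the encoder learns to predict those labels at masked positions. The sketch below shows only target generation and masking, based on our reading of the BEST-RQ paper; the mask probability, span length, noise scale, and dimensions are illustrative assumptions, details such as frame stacking are omitted, and the encoder and its cross-entropy loss are not shown.

```python
import math
import random
from typing import Dict, List, Tuple

Vec = List[float]


def l2_normalize(v: Vec) -> Vec:
    norm = math.sqrt(sum(x * x for x in v)) or 1.0  # guard against all-zero vectors
    return [x / norm for x in v]


class RandomProjectionQuantizer:
    """Frozen random projection plus frozen random codebook (never trained)."""

    def __init__(self, in_dim: int, code_dim: int, codebook_size: int, seed: int = 0):
        rng = random.Random(seed)
        bound = math.sqrt(6.0 / (in_dim + code_dim))  # Xavier-uniform initialization
        self.proj = [[rng.uniform(-bound, bound) for _ in range(in_dim)]
                     for _ in range(code_dim)]
        self.codebook = [l2_normalize([rng.gauss(0.0, 1.0) for _ in range(code_dim)])
                         for _ in range(codebook_size)]

    def label(self, frame: Vec) -> int:
        """Index of the codeword nearest to the normalized projection of `frame`."""
        z = l2_normalize([sum(w * x for w, x in zip(row, frame)) for row in self.proj])
        return min(range(len(self.codebook)),
                   key=lambda i: sum((c - zi) ** 2 for c, zi in zip(self.codebook[i], z)))


def make_example(frames: List[Vec], quantizer: RandomProjectionQuantizer,
                 mask_prob: float = 0.01, span: int = 10,
                 seed: int = 0) -> Tuple[List[Vec], Dict[int, int]]:
    """Mask random spans with noise; targets are labels of the ORIGINAL frames."""
    rng = random.Random(seed)
    positions = set()
    for t in range(len(frames)):
        if rng.random() < mask_prob:  # each frame may start a masked span
            positions.update(range(t, min(t + span, len(frames))))
    masked = [list(f) for f in frames]
    for t in sorted(positions):
        masked[t] = [rng.gauss(0.0, 0.1) for _ in frames[t]]  # replace with noise
    targets = {t: quantizer.label(frames[t]) for t in sorted(positions)}
    return masked, targets
```

Because neither the projection nor the codebook is learned, the quantizer adds no trainable parameters, which is part of what makes this style of pre-training cheap to scale to very large amounts of audio.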

In the second (optional) step, we use multi-objective supervised pre-training to incorporate knowledge from additional text data. The model introduces an additional encoder module that takes text as input, plus additional layers that combine the outputs of the speech encoder and the text encoder, and trains the model jointly on unlabeled speech, labeled speech, and text data.
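One way to picture "trains the model jointly" is a step that routes each data source to its own objective and combines the losses. The sketch below assumes a simple weighted sum; the source names, the per-source objectives, and the weights are all hypothetical, since the exact losses and weighting used for USM are not described here.

```python
from typing import Callable, Dict, Mapping, Sequence, Tuple

# Hypothetical objective: maps one batch from a data source to a scalar loss.
Objective = Callable[[Sequence], float]


def joint_loss(batches: Mapping[str, Sequence],
               objectives: Mapping[str, Objective],
               weights: Mapping[str, float]) -> Tuple[float, Dict[str, float]]:
    """Route each source's batch to its objective, then take a weighted sum
    (sources without an explicit weight default to 1.0)."""
    per_source = {src: objectives[src](batch) for src, batch in batches.items()}
    total = sum(weights.get(src, 1.0) * loss for src, loss in per_source.items())
    return total, per_source
```

For example, `batches` might hold one batch each under `"unlabeled_speech"`, `"labeled_speech"`, and `"text"`, with the text objective computed through the added text encoder. Returning the per-source losses alongside the total makes it easy to monitor whether one data type dominates training.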

In the last stage, USM is fine-tuned on the downstream tasks. The overall training pipeline is illustrated below. With the knowledge acquired during pre-training, USM models achieve good quality with only a small amount of supervised data from the downstream tasks.

| USM’s overall training pipeline. |
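When the decoder attached during ASR fine-tuning is CTC (one of the three decoder options mentioned earlier), the simplest way to turn per-frame outputs into text is best-path (greedy) decoding: take the highest-scoring token per frame, merge consecutive repeats, then drop blanks. This is a generic CTC sketch, not USM's decoding setup; the character vocabulary and the choice of index 0 as the blank are assumptions.

```python
from itertools import groupby
from typing import Sequence


def ctc_greedy_decode(frame_scores: Sequence[Sequence[float]],
                      vocab: Sequence[str], blank: int = 0) -> str:
    """Best-path CTC decoding: argmax per frame, merge repeats, drop blanks."""
    best_path = [max(range(len(s)), key=s.__getitem__) for s in frame_scores]
    merged = [token for token, _ in groupby(best_path)]  # collapse consecutive repeats
    return "".join(vocab[t] for t in merged if t != blank)
```

With `vocab = ["<b>", "c", "a", "t"]`, a best path of indices `[1, 1, 0, 2, 2, 0, 0, 3]` decodes to `"cat"`. The blank is what lets CTC emit a genuinely doubled token: `[2, 0, 2]` decodes to `"aa"`, while `[2, 2]` decodes to `"a"`.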

Key results

Performance across multiple languages on YouTube Captions

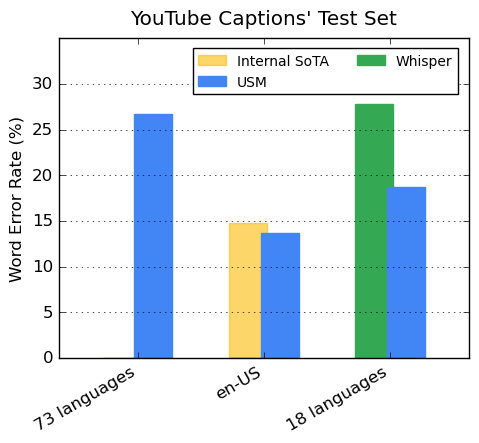

Our encoder incorporates 300+ languages through pre-training. We demonstrate the effectiveness of the pre-trained encoder by fine-tuning on YouTube Captions’ multilingual speech data. The supervised YouTube data includes 73 languages and has, on average, less than three thousand hours of data per language. Despite the limited supervised data, the model achieves less than 30% word error rate (WER; lower is better) on average across the 73 languages, a milestone we have never achieved before. For en-US, USM has a 6% relative reduction in WER compared to the current internal state-of-the-art model. Lastly, we compare with the recently released large model Whisper (large-v2), which was trained with more than 400k hours of labeled data. For the comparison, we only use the 18 languages that Whisper can successfully decode with lower than 40% WER. Our model has, on average, a 32.7% relative reduction in WER compared to Whisper for these 18 languages.

| USM supports all 73 languages in the YouTube Captions’ Test Set and outperforms Whisper on the languages Whisper can support with lower than 40% WER. Lower WER is better. |
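WER, the metric used throughout these results, is the word-level edit distance (substitutions + deletions + insertions) between a hypothesis and a reference, divided by the number of reference words. The sketch below computes it for a single utterance using standard dynamic programming; text normalization and the per-language averaging behind the reported numbers are not shown and are not assumed to match any particular evaluation setup.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    prev = list(range(len(hyp) + 1))  # distance from empty reference prefix
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(prev[j] + 1,             # deletion
                         cur[j - 1] + 1,          # insertion
                         prev[j - 1] + (r != h))  # substitution (or match)
        prev = cur
    return prev[-1] / len(ref)
```

For example, the reference "the cat sat on the mat" against the hypothesis "the cat sit on mat" has one substitution and one deletion, so its WER is 2/6 ≈ 0.33. Because insertions count, WER can exceed 100%.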

Generalization to downstream ASR tasks

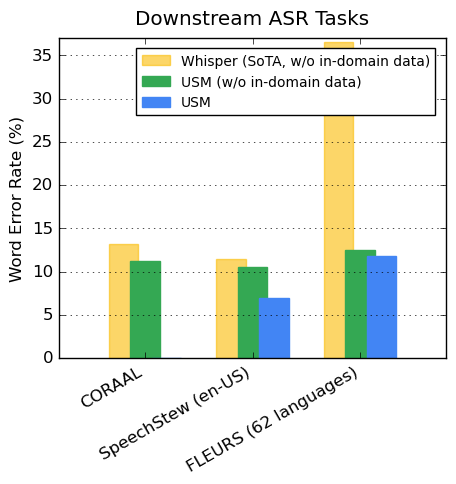

On publicly available datasets, our model shows lower WER than Whisper on CORAAL (African American Vernacular English), SpeechStew (en-US), and FLEURS (102 languages). Our model achieves lower WER both with and without training on in-domain data. The comparison on FLEURS reports the subset of languages (62) that overlaps with the languages supported by the Whisper model. For FLEURS, USM without in-domain data has a 65.8% relative reduction in WER compared to Whisper, and a 67.8% relative reduction with in-domain data.

| Comparison of USM (with or without in-domain data) and Whisper results on ASR benchmarks. Lower WER is better. |
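Relative figures such as the FLEURS numbers above are ratios, not percentage-point differences. Assuming the standard definition of relative WER reduction, applied to the reported averaged WERs, the FLEURS results translate as follows:

```latex
\Delta_{\text{rel}} = \frac{\mathrm{WER}_{\text{Whisper}} - \mathrm{WER}_{\text{USM}}}{\mathrm{WER}_{\text{Whisper}}}
\quad\Longrightarrow\quad
\mathrm{WER}_{\text{USM}} = (1 - \Delta_{\text{rel}})\,\mathrm{WER}_{\text{Whisper}}

\Delta_{\text{rel}} = 0.658 \;\Rightarrow\; \mathrm{WER}_{\text{USM}} = 0.342\,\mathrm{WER}_{\text{Whisper}} \quad \text{(without in-domain data)}

\Delta_{\text{rel}} = 0.678 \;\Rightarrow\; \mathrm{WER}_{\text{USM}} = 0.322\,\mathrm{WER}_{\text{Whisper}} \quad \text{(with in-domain data)}
```

In other words, on that subset USM's WER is roughly one third of Whisper's, which is a much larger gap than a difference of a few percentage points would suggest.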

Performance on automatic speech translation (AST)

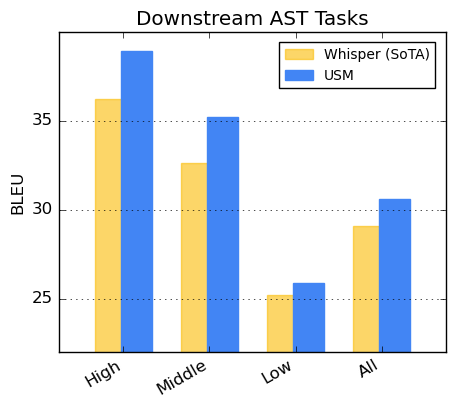

For speech translation, we fine-tune USM on the CoVoST dataset. Our model, which incorporates text via the second stage of our pipeline, achieves state-of-the-art quality with limited supervised data. To assess the breadth of the model’s performance, we segment the languages from the CoVoST dataset into high, medium, and low based on resource availability and calculate the BLEU score (higher is better) for each segment. As shown below, USM outperforms Whisper for all segments.

| CoVoST BLEU score. Higher BLEU is better. |
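The per-segment evaluation can be sketched as bucketing languages by the amount of available training data and averaging per-language BLEU within each bucket. Both the cutoffs and the use of a plain mean are assumptions for illustration; the exact segmentation and aggregation used for the chart are not specified here, and the language names and scores in the example are made up.

```python
from statistics import mean
from typing import Dict, List, Mapping


def resource_segment(amount: float, low_max: float, high_min: float) -> str:
    """Bucket a language by its training-data amount (hypothetical cutoffs)."""
    if amount >= high_min:
        return "high"
    if amount <= low_max:
        return "low"
    return "medium"


def bleu_by_segment(bleu: Mapping[str, float], resources: Mapping[str, float],
                    low_max: float, high_min: float) -> Dict[str, float]:
    """Average per-language BLEU within each resource segment."""
    groups: Dict[str, List[float]] = {}
    for lang, score in bleu.items():
        segment = resource_segment(resources[lang], low_max, high_min)
        groups.setdefault(segment, []).append(score)
    return {segment: mean(scores) for segment, scores in groups.items()}
```

For instance, with made-up inputs `bleu = {"a": 30.0, "b": 20.0, "c": 10.0, "d": 14.0}`, `resources = {"a": 500, "b": 50, "c": 5, "d": 2}`, `low_max=10`, and `high_min=100`, the result is `{"high": 30.0, "medium": 20.0, "low": 12.0}`. Reporting segments separately keeps strong high-resource scores from masking weak low-resource ones.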

Toward 1,000 languages

The development of USM is a critical effort towards realizing Google’s mission to organize the world’s information and make it universally accessible. We believe USM’s base model architecture and training pipeline form a foundation on which we can build to expand speech modeling to the next 1,000 languages.

Learn More

Check out our paper here. Researchers can request access to the USM API here.

Acknowledgements

We thank all the co-authors for contributing to the project and paper, including Andrew Rosenberg, Ankur Bapna, Bhuvana Ramabhadran, Bo Li, Chung-Cheng Chiu, Daniel Park, Françoise Beaufays, Hagen Soltau, Gary Wang, Ginger Perng, James Qin, Jason Riesa, Johan Schalkwyk, Ke Hu, Nanxin Chen, Parisa Haghani, Pedro Moreno Mengibar, Rohit Prabhavalkar, Tara Sainath, Trevor Strohman, Vera Axelrod, Wei Han, Yonghui Wu, Yongqiang Wang, Yu Zhang, Zhehuai Chen, and Zhong Meng.

We also thank Alexis Conneau, Min Ma, Shikhar Bharadwaj, Sid Dalmia, Jiahui Yu, Jian Cheng, Paul Rubenstein, Ye Jia, Justin Snyder, Vincent Tsang, Yuanzhong Xu, and Tao Wang for useful discussions.

We appreciate valuable feedback and support from Eli Collins, Jeff Dean, Sissie Hsiao, and Zoubin Ghahramani. Special thanks to Austin Tarango, Lara Tumeh, Amna Latif, and Jason Porta for their guidance around Responsible AI practices. We thank Elizabeth Adkison and James Cokerille for help with naming the model, Tom Small for the animated graphic, Abhishek Bapna for editorial support, and Erica Moreira for resource management. We thank Anusha Ramesh for feedback, guidance, and assistance with the publication strategy, and Calum Barnes and Salem Haykal for their valuable partnership.
