
Hyper-realistic virtual worlds have been heralded as the best driving schools for autonomous vehicles (AVs), since they’ve proven fruitful test beds for safely trying out dangerous driving scenarios. Tesla, Waymo, and other self-driving companies all rely heavily on data to enable expensive and proprietary photorealistic simulators, since nuanced I-almost-crashed data usually isn’t easy or desirable to test for, gather, or recreate.

To that end, scientists from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) created “VISTA 2.0,” a data-driven simulation engine where vehicles can learn to drive in the real world and recover from near-crash scenarios. What’s more, all of the code is being open-sourced to the public.

“Today, only companies have software like the type of simulation environments and capabilities of VISTA 2.0, and this software is proprietary. With this release, the research community will have access to a powerful new tool for accelerating the research and development of adaptive robust control for autonomous driving,” says MIT Professor and CSAIL Director Daniela Rus, senior author on a paper about the research.

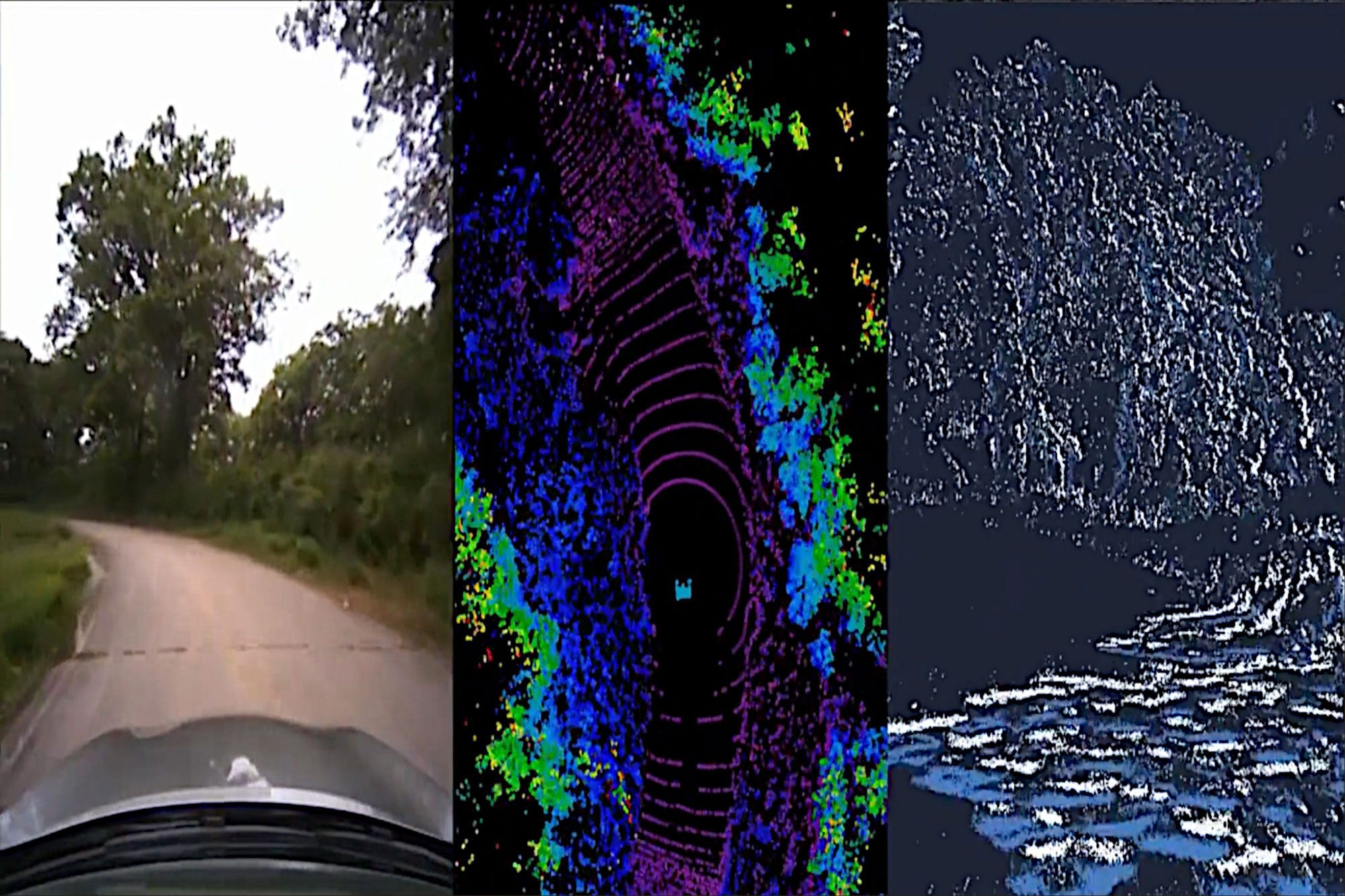

VISTA 2.0 builds off of the team’s previous model, VISTA, and it’s fundamentally different from existing AV simulators since it’s data-driven (meaning it was built and photorealistically rendered from real-world data), thereby enabling direct transfer to reality. While the initial iteration supported only single-car lane-following with one camera sensor, achieving high-fidelity data-driven simulation required rethinking the foundations of how different sensors and behavioral interactions can be synthesized.

Enter VISTA 2.0: a data-driven system that can simulate complex sensor types and massively interactive scenarios and intersections at scale. With much less data than previous models, the team was able to train autonomous vehicles that could be substantially more robust than those trained on large amounts of real-world data.

“This is a massive jump in capabilities of data-driven simulation for autonomous vehicles, as well as the increase of scale and ability to handle greater driving complexity,” says Alexander Amini, CSAIL PhD student and co-lead author on two new papers, together with fellow PhD student Tsun-Hsuan Wang. “VISTA 2.0 demonstrates the ability to simulate sensor data far beyond 2D RGB cameras, but also extremely high dimensional 3D lidars with millions of points, irregularly timed event-based cameras, and even interactive and dynamic scenarios with other vehicles as well.”

The team was able to scale the complexity of the interactive driving tasks for things like overtaking, following, and negotiating, including multiagent scenarios in highly photorealistic environments.

Training AI models for autonomous vehicles requires hard-to-secure fodder: many varieties of edge cases and strange, dangerous scenarios. Most of our data (thankfully) is just run-of-the-mill, day-to-day driving. Logically, we can’t crash into other cars just to teach a neural network how not to crash into other cars.

Recently, there’s been a shift away from more classic, human-designed simulation environments to those built up from real-world data. The latter have immense photorealism, but the former can easily model virtual cameras and lidars. With this paradigm shift, a key question has emerged: Can the richness and complexity of all of the sensors that autonomous vehicles need, such as lidar and event-based cameras that are more sparse, accurately be synthesized?

Lidar sensor data is much harder to interpret in a data-driven world: you’re effectively trying to generate brand-new 3D point clouds with millions of points, only from sparse views of the world. To synthesize 3D lidar point clouds, the team used the data that the car collected, projected it into a 3D space coming from the lidar data, and then let a new virtual vehicle drive around locally from where that original vehicle was. Finally, they projected all of that sensory information back into the frame of view of this new virtual vehicle, with the help of neural networks.
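The geometric core of that idea, re-expressing recorded points in the frame of a nearby virtual vehicle, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not VISTA 2.0’s code: the function name `reproject_points` and its parameters are hypothetical, the virtual vehicle is assumed to differ from the original only by a planar offset and a yaw angle, and the neural-network step the team describes is left out entirely.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float, float]


def reproject_points(points: List[Point], dx: float, dy: float, yaw: float) -> List[Point]:
    """Express points from the original vehicle's frame in a virtual vehicle's frame.

    Hypothetical helper for illustration only. The virtual vehicle is assumed
    to sit at (dx, dy) in the original frame, rotated by `yaw` radians
    counterclockwise about the vertical axis.
    """
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    reprojected = []
    for x, y, z in points:
        # Shift so the virtual vehicle is at the origin.
        tx, ty = x - dx, y - dy
        # Rotate by -yaw so the virtual vehicle's heading becomes the +x axis.
        new_x = cos_y * tx + sin_y * ty
        new_y = -sin_y * tx + cos_y * ty
        # Height is unchanged by a planar motion.
        reprojected.append((new_x, new_y, z))
    return reprojected


# A point 2 m ahead of the original car is 1 m ahead of a virtual car
# that has moved 1 m forward without turning.
shifted = reproject_points([(2.0, 0.0, 0.0)], dx=1.0, dy=0.0, yaw=0.0)

# A virtual car at (1, 0) that has turned 90 degrees to the left sees the
# point (1, 1, 0.5) directly ahead of it.
turned = reproject_points([(1.0, 1.0, 0.5)], dx=1.0, dy=0.0, yaw=math.pi / 2)
```

A rigid transform like this only moves points that were already observed. Filling in the gaps left by sparse views is the harder part, which is where the article says neural networks come in.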

Together with the simulation of event-based cameras, which operate at speeds greater than thousands of events per second, the simulator was capable of not only simulating this multimodal information, but also doing so in real time. That makes it possible to train neural nets offline, and also to test online on the car in augmented reality setups for safe evaluations. “The question of if multisensor simulation at this scale of complexity and photorealism was possible in the realm of data-driven simulation was very much an open question,” says Amini.
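For readers unfamiliar with event-based cameras: instead of full frames, they report per-pixel brightness changes. A common textbook model fires an event when a pixel’s log intensity changes by more than a contrast threshold. The sketch below applies that model to two small grayscale frames. It is an assumption-laden illustration, not a description of how VISTA 2.0 synthesizes events: the function name `events_between_frames`, the threshold value, and the `eps` offset are all hypothetical, and real sensors also attach timestamps and can fire several events per pixel.

```python
import math
from typing import List, Tuple

Frame = List[List[float]]          # grayscale intensities in [0, 1]
Event = Tuple[int, int, int]       # (row, col, polarity)


def events_between_frames(prev: Frame, curr: Frame,
                          threshold: float = 0.2, eps: float = 1e-3) -> List[Event]:
    """Emit one event per pixel whose log intensity changed by >= threshold.

    Hypothetical, simplified model: polarity is +1 for brightening and -1
    for darkening; `eps` avoids taking the log of zero.
    """
    events = []
    for row, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for col, (p, c) in enumerate(zip(prev_row, curr_row)):
            delta = math.log(c + eps) - math.log(p + eps)
            if abs(delta) >= threshold:
                events.append((row, col, 1 if delta > 0 else -1))
    return events


prev_frame = [[0.5, 0.5],
              [0.5, 0.5]]
curr_frame = [[0.5, 1.0],    # top-right pixel brightens
              [0.25, 0.5]]   # bottom-left pixel darkens
events = events_between_frames(prev_frame, curr_frame)
```

Only the two changed pixels produce events, which illustrates why event data is sparse and irregularly timed compared with ordinary video frames.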

With that, the driving school becomes a party. In the simulation, you can move around, have different types of controllers, simulate different types of events, create interactive scenarios, and just drop in brand-new vehicles that weren’t even in the original data. The team tested for lane following, lane turning, car following, and more dicey scenarios like static and dynamic overtaking (seeing obstacles and moving around so you don’t collide). With multiagent support, both real and simulated agents interact, and new agents can be dropped into the scene and controlled any which way.

Taking their full-scale car out into the “wild” (a.k.a. Devens, Massachusetts), the team saw immediate transferability of results, with both failures and successes. They were also able to demonstrate the bodacious, magic word of self-driving car models: “robust.” They showed that AVs, trained entirely in VISTA 2.0, were so robust in the real world that they could handle that elusive tail of challenging failures.

Now, one guardrail humans rely on that can’t yet be simulated is human emotion. It’s the friendly wave, nod, or blinker switch of acknowledgement, which are the type of nuances the team wants to implement in future work.

“The central algorithm of this research is how we can take a dataset and build a completely synthetic world for learning and autonomy,” says Amini. “It’s a platform that I believe one day could extend in many different axes across robotics. Not just autonomous driving, but many areas that rely on vision and complex behaviors. We’re excited to release VISTA 2.0 to help enable the community to collect their own datasets and convert them into virtual worlds where they can directly simulate their own virtual autonomous vehicles, drive around these virtual terrains, train autonomous vehicles in these worlds, and then can directly transfer them to full-sized, real self-driving cars.”

Amini and Wang wrote the paper alongside Zhijian Liu, MIT CSAIL PhD student; Igor Gilitschenski, assistant professor in computer science at the University of Toronto; Wilko Schwarting, AI research scientist and MIT CSAIL PhD ’20; Song Han, associate professor at MIT’s Department of Electrical Engineering and Computer Science; Sertac Karaman, associate professor of aeronautics and astronautics at MIT; and Daniela Rus, MIT professor and CSAIL director. The researchers presented the work at the IEEE International Conference on Robotics and Automation (ICRA) in Philadelphia.

This work was supported by the National Science Foundation and Toyota Research Institute. The team acknowledges the support of NVIDIA with the donation of the Drive AGX Pegasus.
