Virtual assistants are increasingly integrated into our daily routines. They can help with everything from setting alarms to giving map directions, and can even help people with disabilities manage their homes more easily. As we use these assistants, we’re also becoming more accustomed to using natural language to accomplish tasks that we once did by hand.

One of the biggest challenges in building a robust virtual assistant is determining what a user wants and what information is needed to perform the task at hand. In the natural language processing (NLP) literature, this is mainly framed as a task-oriented dialogue parsing task, where a system must parse a given dialogue to understand the user intent and carry out the operation that fulfills that intent. While the academic community has made progress in handling task-oriented dialogue thanks to purpose-built datasets such as MultiWOZ, TOP, and SMCalFlow, progress is limited because these datasets lack the typical speech phenomena that models need to see during training to perform well. The resulting models often underperform, leading to dissatisfaction with assistant interactions. Relevant speech patterns might include revisions, disfluencies, code-mixing, and the use of structured context surrounding the user’s environment, such as the user’s notes, smart home devices, and contact lists.

Consider the following dialogue, which illustrates a common case in which a user needs to revise their utterance:

[Figure: A conversation with a virtual assistant that includes a user revision.]

The virtual assistant misunderstands the request and attempts to call the wrong contact, so the user has to revise their utterance to fix the assistant’s mistake. To parse the last utterance correctly, the assistant would also need to interpret the user’s specific context. In this case, it would need to know that the user has a contact list saved on their phone that it should reference.

Another common category of utterance that is challenging for virtual assistants is code-mixing, which occurs when the user switches from one language to another while addressing the assistant. Consider the utterance below:

[Figure: A dialogue denoting code-mixing between English and German.]

In this example, the user switches from English to German, where “vier Uhr” means “four o’clock”.

In an effort to advance research in parsing such realistic and complex utterances, we’re launching a new dataset called PRESTO, a multilingual dataset for parsing realistic task-oriented dialogues that includes roughly half a million realistic conversations between people and virtual assistants. The dataset spans six different languages and includes multiple conversational phenomena that users may encounter when using an assistant, including user revisions, disfluencies, and code-mixing. The dataset also includes surrounding structured context, such as users’ contacts and lists, associated with each example. The explicit tagging of these phenomena in PRESTO allows us to create separate test sets and analyze model performance on each phenomenon individually. We find that some of these phenomena are easier to model with few-shot examples, while others require much more training data.

Dataset characteristics

- Conversations by native speakers in six languages

All conversations in our dataset are provided by native speakers of six languages: English, French, German, Hindi, Japanese, and Spanish. This is in contrast to other datasets, such as MTOP and MASSIVE, that only translate utterances from English into other languages, which does not necessarily reflect the speech patterns of native speakers of non-English languages.

- Structured context

Users often rely on the information stored on their devices, such as notes, contacts, and lists, when interacting with virtual assistants. However, this context is often not accessible to the assistant, which can result in parsing errors when processing user utterances. To address this issue, PRESTO includes three types of structured context (notes, lists, and contacts) alongside the user utterances and their parses. The lists, notes, and contacts are authored by native speakers of each language during data collection. Having such context allows us to examine how this information can be used to improve the performance of models that parse task-oriented dialogues.

- User revisions

It is common for a user to revise or correct their own utterances while speaking to a virtual assistant. These revisions happen for a variety of reasons: the assistant could have made a mistake in understanding the utterance, or the user might have changed their mind while making an utterance. One such example is in the figure above. Other examples of revisions include canceling one’s request (“Don’t add anything.”) or correcting oneself in the same utterance (“Add bread, no, no wait, add wheat bread to my shopping list.”). Roughly 27% of all examples in PRESTO have some type of user revision that is explicitly labeled in the dataset.

- Code-mixing

As of 2022, roughly 43% of the world’s population is bilingual. As a result, many users switch languages while speaking to virtual assistants. In building PRESTO, we asked bilingual data contributors to annotate code-mixed utterances, which amounted to roughly 14% of all utterances in the dataset.

[Figure: Examples of Hindi-English, Spanish-English, and German-English code-switched utterances from PRESTO.]

- Disfluencies

Disfluencies, like repeated phrases or filler words, are ubiquitous in user utterances because of the spoken nature of the conversations that virtual assistants receive. Datasets such as DISFL-QA note the lack of such phenomena in the existing NLP literature and contribute towards closing that gap. In our work, we include conversations targeting this particular phenomenon across all six languages.

[Figure: Examples of utterances in English, Japanese, and French with filler words or repetitions.]
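To make the characteristics above concrete, here is a minimal sketch of how one record with structured context and phenomenon tags might be represented, and how tagged examples could be grouped into per-phenomenon test subsets. The field names, the `Example` class, the parse placeholder strings, and the sample utterances (other than the shopping-list revision quoted above) are assumptions for illustration; they are not the released PRESTO schema.

```python
from dataclasses import dataclass, field


@dataclass
class Example:
    """One hypothetical PRESTO-style record (all field names are assumptions)."""
    utterance: str
    language: str
    parse: str = "<parse placeholder>"  # the real parse format is not shown here
    contacts: list = field(default_factory=list)  # structured context
    notes: list = field(default_factory=list)     # structured context
    lists: dict = field(default_factory=dict)     # structured context
    has_revision: bool = False
    has_disfluency: bool = False
    has_code_mixing: bool = False


# Maps a subset name to the (hypothetical) boolean tag on each record.
PHENOMENA = {
    "revision": "has_revision",
    "disfluency": "has_disfluency",
    "code_mixing": "has_code_mixing",
}


def split_by_phenomenon(examples):
    """Group examples into one subset per tagged phenomenon.

    An example carrying several tags appears in several subsets.
    """
    subsets = {name: [] for name in PHENOMENA}
    for ex in examples:
        for name, attr in PHENOMENA.items():
            if getattr(ex, attr):
                subsets[name].append(ex)
    return subsets


# Illustrative records only; not taken from the dataset.
examples = [
    Example(
        utterance="Add bread, no, no wait, add wheat bread to my shopping list.",
        language="en",
        lists={"shopping list": []},
        has_revision=True,
    ),
    Example(
        utterance="Set an alarm for vier Uhr.",
        language="en-de",
        has_code_mixing=True,
    ),
    Example(
        utterance="Call, um, call Mom.",
        language="en",
        contacts=["Mom"],
        has_disfluency=True,
    ),
]

subsets = split_by_phenomenon(examples)
counts = {name: len(items) for name, items in subsets.items()}
print(counts)
```

Keeping the tags as explicit boolean fields is one simple way to let the same record feed both the full test set and any phenomenon-specific subset.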

Key findings

We conducted targeted experiments to focus on each of the phenomena described above. We ran mT5-based models trained on the PRESTO dataset and evaluated them using an exact match between the predicted parse and the human-annotated parse. Below we show the relative performance improvements as we scale the training data on each of the targeted phenomena: user revisions, disfluencies, and code-mixing.
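As a rough illustration of the metric, exact-match accuracy can be computed as the fraction of predicted parses that are identical to their human-annotated counterparts. The sketch below assumes parses are plain strings and that leading and trailing whitespace should be ignored; both are assumptions, and the parse strings shown are made-up placeholders rather than the PRESTO parse format.

```python
def exact_match_accuracy(predicted, gold):
    """Return the fraction of predictions that equal the gold parse exactly.

    Assumes parses are strings; surrounding whitespace is ignored,
    which is a normalization choice made for this sketch.
    """
    if len(predicted) != len(gold):
        raise ValueError("predicted and gold must have the same length")
    if not gold:
        return 0.0
    matches = sum(p.strip() == g.strip() for p, g in zip(predicted, gold))
    return matches / len(gold)


# Placeholder parses for illustration only.
gold_parses = ["call(contact=A)", "add(item=bread)", "alarm(time=4)"]
predicted_parses = ["call(contact=A)", "add(item=milk)", "alarm(time=4) "]

accuracy = exact_match_accuracy(predicted_parses, gold_parses)
print(f"exact match: {accuracy:.3f}")
```

Because the comparison is all-or-nothing per example, a parse with a single wrong argument counts as a miss, which makes the metric strict but easy to interpret.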

[Figure: K-shot results on various linguistic phenomena and the full test set across increasing training data size.]

The K-shot results yield the following takeaways:

- Zero-shot performance on the marked phenomena is poor, emphasizing the need for such utterances in the dataset to improve performance.

- Disfluencies and code-mixing have much better zero-shot performance than user revisions (a difference of over 40 points in exact-match accuracy).

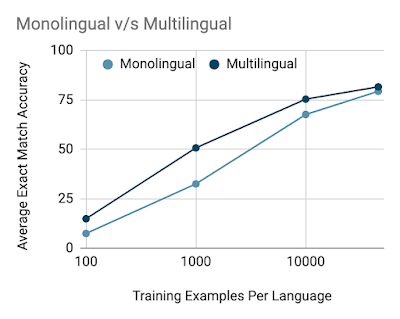

We also investigate the difference between training monolingual and multilingual models on the train set and find that, with less data, multilingual models have an advantage over monolingual models, but the gap shrinks as the data size increases.

Additional details on data quality, data collection methodology, and modeling experiments can be found in our paper.

Conclusion

We created PRESTO, a multilingual dataset for parsing task-oriented dialogues that includes realistic conversations representing a variety of pain points that users often face in their daily conversations with virtual assistants and that are lacking in existing datasets in the NLP community. PRESTO includes roughly half a million utterances contributed by native speakers of six languages: English, French, German, Hindi, Japanese, and Spanish. We created dedicated test sets to focus on each targeted phenomenon: user revisions, disfluencies, code-mixing, and structured context. Our results indicate that zero-shot performance is poor when the targeted phenomenon is not included in the training set, indicating a need for such utterances to improve performance. We find that user revisions and disfluencies are easier to model with more data, as opposed to code-mixed utterances, which are harder to model even with a high number of examples. With the release of this dataset, we open more questions than we answer, and we hope the research community makes progress on utterances that are more in line with what users face every day.

Acknowledgements

It was a privilege to collaborate on this work with Waleed Ammar, Siddharth Vashishtha, Motoki Sano, Faiz Surani, Max Chang, HyunJeong Choe, David Greene, Kyle He, Rattima Nitisaroj, Anna Trukhina, Shachi Paul, Pararth Shah, Rushin Shah, and Zhou Yu. We’d also like to thank Tom Small for the animations in this blog post. Finally, a huge thanks to all the expert linguists and data annotators for making this a reality.
