
Introduction

Word embedding is a technique used to map words of a vocabulary to dense vectors of real numbers, where semantically similar words are mapped to nearby points. Representing words in this vector space helps algorithms achieve better performance in natural language processing tasks like syntactic parsing and sentiment analysis by grouping similar words. For example, we expect that in the embedding space “cats” and “dogs” are mapped to nearby points since they are both animals, mammals, pets, etc.

In this tutorial we’ll implement the skip-gram model created by Mikolov et al. in R using the keras package.

The skip-gram model is a flavor of word2vec, a class of computationally efficient predictive models for learning word embeddings from raw text. We won’t address theoretical details about embeddings and the skip-gram model; if you want more details, you can read the paper linked above. The TensorFlow Vector Representation of Words tutorial includes additional details, as does the Deep Learning With R notebook about embeddings.

There are other ways to create vector representations of words. For example, GloVe embeddings are implemented in the text2vec package by Dmitriy Selivanov. There’s also a tidy approach described in Julia Silge’s blog post Word Vectors with Tidy Data Principles.

Getting the Data

We will use the Amazon Fine Foods Reviews dataset.

This dataset consists of reviews of fine foods from Amazon. The data span a period of more than 10 years, including all ~500,000 reviews up to October 2012. Reviews include product and user information, ratings, and narrative text.

The data (~116MB) can be downloaded by running:

download.file("https://snap.stanford.edu/data/finefoods.txt.gz", "finefoods.txt.gz")

We will now load the plain text reviews into R.
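The loading code is not shown here. Below is a minimal base-R sketch. It assumes the layout of the SNAP file, in which each review's body sits on a single line prefixed with "review/text: ". The name read_review_texts is a hypothetical helper, not part of any package.

```r
# Hypothetical helper: extract the narrative text of every review from the
# SNAP finefoods file. Assumes each review body is on one line that starts
# with "review/text: ".
read_review_texts <- function(path) {
  con <- gzfile(path, open = "r")  # gzfile() decompresses transparently
  on.exit(close(con))
  lines <- readLines(con, warn = FALSE)
  prefix <- "review/text: "
  texts <- lines[startsWith(lines, prefix)]
  # Drop the prefix so that only the review text remains
  substring(texts, nchar(prefix) + 1)
}
```

Usage would then be reviews <- read_review_texts("finefoods.txt.gz"). The other fields, such as review/score, are ignored because only the text is needed to train the embeddings.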

Let’s take a look at some reviews we have in the dataset.

[1] "I have bought several of the Vitality canned dog food products ...
[2] "Product arrived labeled as Jumbo Salted Peanuts...the peanuts ...

Preprocessing

We’ll begin with some text pre-processing using a keras text_tokenizer(). The tokenizer will be responsible for transforming each review into a sequence of integer tokens, which will subsequently be used as input into the skip-gram model.

Note that the tokenizer object is modified in place by the call to fit_text_tokenizer().

Integer tokens are assigned only to the 20,000 most common words. Index 0 is reserved, and with the tokenizer's default settings less frequent words are dropped from the token sequences.
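What fit_text_tokenizer() learns is essentially a frequency-ranked vocabulary. The base-R sketch below is a simplified, hypothetical stand-in for that step; build_vocabulary is not a keras function. It works as follows:

- It lowercases the texts and splits them with a simple regular expression. Keras applies its own punctuation filters instead.
- It counts how often each word occurs.
- It gives ids starting at 1 to the num_words most frequent words.

```r
# Hypothetical, simplified stand-in for what fit_text_tokenizer() learns:
# rank words by frequency and give the top `num_words` an integer id.
build_vocabulary <- function(texts, num_words) {
  # Lowercase and split on anything that is not a letter, digit or apostrophe
  words <- unlist(strsplit(tolower(texts), "[^a-z0-9']+"))
  words <- words[nzchar(words)]  # remove empty strings left by the split
  counts <- sort(table(words), decreasing = TRUE)
  top <- names(counts)[seq_len(min(num_words, length(counts)))]
  # Ids start at 1 because index 0 is reserved
  setNames(seq_along(top), top)
}

vocab <- build_vocabulary(c("the cat sat", "the cat ran", "the dog"), num_words = 2)
vocab  # named integer vector: the = 1, cat = 2
```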

Skip-Gram Model

In the skip-gram model we will use each word as input to a log-linear classifier with a projection layer, then predict words within a certain range before and after this word. It would be very computationally expensive to output a probability distribution over the whole vocabulary for each target word we input into the model. Instead, we are going to use negative sampling: we will sample some words that don’t appear in the context and train a binary classifier to predict whether the context word we passed is truly from the context or not.

In more practical terms, for the skip-gram model we will input a 1d integer vector of the target word tokens and a 1d integer vector of sampled context word tokens. We will generate a prediction of 1 if the sampled word really appeared in the context and 0 if it didn’t.
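The pairing described above can be sketched in base R. This is a simplified, hypothetical version (make_skipgram_pairs is not a keras function) of what the keras skipgrams() helper produces for one tokenized review. It works as follows:

- Every word within window_size positions of a target becomes a positive (label 1) pair.
- Each positive pair is matched by negative_samples random vocabulary ids with label 0.

A randomly drawn id can occasionally coincide with a true context word, and this sketch does not check for that.

```r
# Hypothetical sketch of skip-gram pair generation with negative sampling.
# `sequence` is an integer vector of word ids in 1..vocab_size.
make_skipgram_pairs <- function(sequence, vocab_size, window_size, negative_samples = 1) {
  targets <- integer(0)
  contexts <- integer(0)
  for (i in seq_along(sequence)) {
    # Positions up to `window_size` words before and after position i
    lo <- max(1, i - window_size)
    hi <- min(length(sequence), i + window_size)
    for (j in setdiff(lo:hi, i)) {
      targets <- c(targets, sequence[i])
      contexts <- c(contexts, sequence[j])
    }
  }
  positives <- data.frame(target = targets, context = contexts,
                          label = rep(1L, length(targets)))
  # Negative examples: pair each target with uniformly drawn vocabulary ids
  n_neg <- nrow(positives) * negative_samples
  negatives <- data.frame(
    target = rep(positives$target, times = negative_samples),
    context = sample.int(vocab_size, n_neg, replace = TRUE),
    label = rep(0L, n_neg)
  )
  rbind(positives, negatives)
}

set.seed(1)
make_skipgram_pairs(c(10L, 20L, 30L), vocab_size = 50, window_size = 1)
```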

We will now define a generator function to yield batches for model training.

library(keras)
library(reticulate)
library(purrr)

skipgrams_generator <- function(text, tokenizer, window_size, negative_samples) {
  # Python generator yielding one tokenized text at a time, in shuffled order
  gen <- texts_to_sequences_generator(tokenizer, sample(text))
  function() {
    # Build (target, context) pairs and their 1/0 labels for the next text
    skip <- generator_next(gen) %>%
      skipgrams(
        vocabulary_size = tokenizer$num_words,
        window_size = window_size,
        negative_samples = negative_samples
      )
    # Turn the list of pairs into a target column and a context column
    x <- transpose(skip$couples) %>% map(. %>% unlist %>% as.matrix(ncol = 1))
    y <- skip$labels %>% as.matrix(ncol = 1)
    list(x, y)
  }
}

A generator function is a function that returns a different value each time it is called. Generator functions are often used to provide streaming or dynamic data for training models. Our generator function will receive three things:

- a vector of texts
- a tokenizer
- the arguments for the skip-gram: the size of the window around each target word we examine, and how many negative samples we want to draw for each target word
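In R, a generator like skipgrams_generator() is a closure: an outer function sets up some state and returns an inner function that produces a new value on each call. In skipgrams_generator() that state lives in the Python iterator gen. The hypothetical toy below (make_batch_generator is an illustrative name) keeps its state in an R variable updated with <<- instead, and cycles through a vector in fixed-size batches.

```r
# Toy generator built from a closure: the outer function holds the state,
# the inner function returns the next batch each time it is called.
make_batch_generator <- function(data, batch_size) {
  position <- 0
  function() {
    # Indices of the next `batch_size` elements, wrapping around at the end
    idx <- ((position + seq_len(batch_size) - 1) %% length(data)) + 1
    position <<- position + batch_size  # update the enclosing state
    data[idx]
  }
}

gen <- make_batch_generator(1:5, batch_size = 2)
gen()  # 1 2
gen()  # 3 4
gen()  # 5 1
```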

Now let’s start defining the keras model. We will use the Keras functional API.

embedding_size <- 128  # Dimension of the embedding vector.
skip_window <- 5       # How many words to consider left and right.
num_sampled <- 1       # Number of negative examples to sample for each word.

We will first write placeholders for the inputs using the layer_input() function.

input_target <- layer_input(shape = 1)
input_context <- layer_input(shape = 1)

Now let’s define the embedding matrix. The embedding is a matrix with dimensions (vocabulary, embedding_size) that acts as a lookup table for the word vectors.
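The lookup-table idea can be illustrated in plain R; this is not the keras layer itself. The sketch builds a random matrix with one row per word id and one column per embedding dimension. The sizes are assumptions chosen to match the Embedding row of the model summary: its 2,560,128 parameters equal 20,001 × 128, which is consistent with one row for each id from 0 to 20,000.

```r
# Assumed sizes: 20,000 word ids plus a reserved id 0, 128 dimensions
vocab_size <- 20000
embedding_dim <- 128

# Randomly initialised lookup table: row k + 1 holds the vector for word id k
set.seed(42)
embedding_table <- matrix(
  rnorm((vocab_size + 1) * embedding_dim, sd = 0.05),
  nrow = vocab_size + 1, ncol = embedding_dim
)

# Looking up a word vector is just indexing one row (ids start at 0)
lookup <- function(word_id) embedding_table[word_id + 1, ]

length(embedding_table)  # 2560128, the Embedding layer's parameter count
length(lookup(5))        # 128
```

Because the target and context inputs share one embedding in the summary, the same table serves both lookups. That is why the parameters are counted only once.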

The next step is to define how the target_vector will be related to the context_vector in order to make our network output 1 when the context word really appeared in the context and 0 otherwise. We want target_vector to be similar to the context_vector if they appeared in the same context. A typical measure of similarity is the cosine similarity. Given two vectors $A$ and $B$, the cosine similarity is defined as the Euclidean dot product of $A$ and $B$ normalized by their magnitudes:

$$\cos(\theta) = \frac{A \cdot B}{\lVert A \rVert \, \lVert B \rVert}$$

As we don’t need the similarity to be normalized inside the network, we will only calculate the dot product and then output a dense layer with sigmoid activation.
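The difference between the two measures can be made concrete in base R. The sketch computes the cosine similarity as defined above and, separately, the score the network produces: an unnormalized dot product fed into a single dense unit with a sigmoid. The names cosine_similarity and pair_score are hypothetical. The arguments w and b stand in for the dense layer's single weight and bias, which are the 2 parameters of dense_1 in the model summary.

```r
# Cosine similarity: dot product divided by the product of the vector norms
cosine_similarity <- function(a, b) {
  sum(a * b) / (sqrt(sum(a^2)) * sqrt(sum(b^2)))
}

# Score computed by the network instead: unnormalized dot product, scaled by
# one weight plus a bias, then squashed into (0, 1) by a sigmoid
pair_score <- function(target_vec, context_vec, w = 1, b = 0) {
  z <- w * sum(target_vec * context_vec) + b
  1 / (1 + exp(-z))
}

cosine_similarity(c(1, 2, 3), c(2, 4, 6))  # 1: same direction
pair_score(c(1, 0), c(0, 1))               # 0.5: orthogonal vectors, z = 0
```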

dot_product <- layer_dot(list(target_vector, context_vector), axes = 1)
output <- layer_dense(dot_product, units = 1, activation = "sigmoid")

Now we will create the model and compile it.

We can see the full definition of the model by calling summary():

_________________________________________________________________________________________
Layer (type)                  Output Shape         Param #     Connected to
=========================================================================================
input_1 (InputLayer)          (None, 1)            0
_________________________________________________________________________________________
input_2 (InputLayer)          (None, 1)            0
_________________________________________________________________________________________
embedding (Embedding)         (None, 1, 128)       2560128     input_1[0][0]
                                                               input_2[0][0]
_________________________________________________________________________________________
flatten_1 (Flatten)           (None, 128)          0           embedding[0][0]
_________________________________________________________________________________________
flatten_2 (Flatten)           (None, 128)          0           embedding[1][0]
_________________________________________________________________________________________
dot_1 (Dot)                   (None, 1)            0           flatten_1[0][0]
                                                               flatten_2[0][0]
_________________________________________________________________________________________
dense_1 (Dense)               (None, 1)            2           dot_1[0][0]
=========================================================================================
Total params: 2,560,130
Trainable params: 2,560,130
Non-trainable params: 0
_________________________________________________________________________________________

Model Training

We will fit the model using the fit_generator() function. We need to specify the number of training steps as well as the number of epochs we want to train. We will train for 100,000 steps for 5 epochs. This is quite slow (~1000 seconds per epoch on a modern GPU). Note that you may also get reasonable results with just one epoch of training.

model %>%
  fit_generator(
    skipgrams_generator(reviews, tokenizer, skip_window, num_sampled),
    steps_per_epoch = 100000, epochs = 5
  )

Epoch 1/1
100000/100000 [==============================] - 1092s - loss: 0.3749
Epoch 2/5
100000/100000 [==============================] - 1094s - loss: 0.3548
Epoch 3/5
100000/100000 [==============================] - 1053s - loss: 0.3630
Epoch 4/5
100000/100000 [==============================] - 1020s - loss: 0.3737
Epoch 5/5
100000/100000 [==============================] - 1017s - loss: 0.3823

We can now extract the embedding matrix from the model by using the get_weights() function. We also added row.names to our embedding matrix so we can easily find where each word is.

Understanding the Embeddings

We can now find words that are close to each other in the embedding. We will use the cosine similarity, which is the normalized version of the dot product that the model was trained on.
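The find_similar_words() helper is called below, but its definition is not shown. Here is a base-R sketch of one possible definition. It assumes embedding_matrix has one row per word, with the words as row names. The sketch computes the cosine similarity between the chosen word's row and every row, then keeps the n highest scores. Rows with zero norm would give NaN, and sort() drops those.

```r
# Hypothetical base-R version of find_similar_words(): cosine similarity
# between one word's vector and every row, returning the n largest scores.
find_similar_words <- function(word, embedding_matrix, n = 5) {
  target <- embedding_matrix[word, ]
  norms <- sqrt(rowSums(embedding_matrix^2))
  sims <- as.vector(embedding_matrix %*% target) / (norms * sqrt(sum(target^2)))
  names(sims) <- rownames(embedding_matrix)
  head(sort(sims, decreasing = TRUE), n)
}

toy <- rbind(cat = c(1, 0), dog = c(0.9, 0.1), car = c(0, 1))
find_similar_words("cat", toy, n = 2)  # cat (1.00) first, then dog
```

The word itself always comes first with a similarity of 1, which matches the first column of each result below.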

find_similar_words("2", embedding_matrix) 2 4 3 two 6

1.0000000 0.9830254 0.9777042 0.9765668 0.9722549 find_similar_words("little", embedding_matrix) little bit few small deal with

1.0000000 0.9501037 0.9478287 0.9309829 0.9286966 find_similar_words("scrumptious", embedding_matrix)scrumptious tasty fantastic superb yummy

1.0000000 0.9632145 0.9619508 0.9617954 0.9529505 find_similar_words("cats", embedding_matrix) cats canines children cat canine

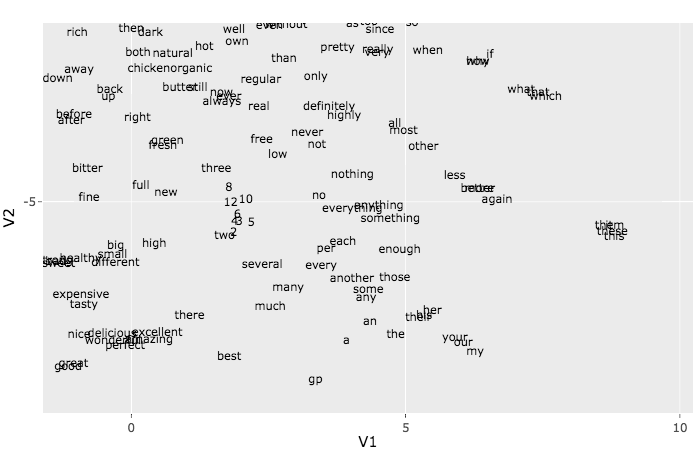

1.0000000 0.9844937 0.9743756 0.9676026 0.9624494 The t-SNE algorithm can be utilized to visualise the embeddings. Because of time constraints we

will solely use it with the primary 500 phrases. To perceive extra in regards to the t-SNE technique see the article How to Use t-SNE Effectively.

This plot may look like a mess, but if you zoom into the small groups you end up seeing some nice patterns. Try, for example, to find a group of web-related words like http, href, etc. Another group that may be easy to pick out is the pronouns group: she, he, her, etc.
