
A demonstration of the RvS policy we learn with just supervised learning and a depth-two MLP. It uses no TD learning, advantage reweighting, or Transformers!

Offline reinforcement learning (RL) is conventionally approached using value-based methods based on temporal difference (TD) learning. However, many recent algorithms reframe RL as a supervised learning problem. These algorithms learn conditional policies by conditioning on goal states (Lynch et al., 2019; Ghosh et al., 2021), reward-to-go (Kumar et al., 2019; Chen et al., 2021), or language descriptions of the task (Lynch and Sermanet, 2021).

We find the simplicity of these methods quite appealing. If supervised learning is enough to solve RL problems, then offline RL could become widely accessible and (relatively) easy to implement. Whereas TD learning must delicately balance an actor policy with an ensemble of critics, these supervised learning methods train just one (conditional) policy, and nothing else!

So, how can we use these methods to effectively solve offline RL problems? Prior work puts forward a number of clever tips and tricks, but these tricks are sometimes contradictory, making it challenging for practitioners to figure out how to successfully apply these methods. For example, RCPs (Kumar et al., 2019) require carefully reweighting the training data, GCSL (Ghosh et al., 2021) requires iterative, online data collection, and Decision Transformer (Chen et al., 2021) uses a Transformer sequence model as the policy network.

Which, if any, of these hypotheses are correct? Do we need to reweight our training data based on estimated advantages? Are Transformers necessary to get a high-performing policy? Are there other critical design decisions that have been left out of prior work?

Our work aims to answer these questions by trying to identify the essential elements of offline RL via supervised learning. We run experiments across 4 suites, 26 environments, and 8 algorithms. When the dust settles, we get competitive performance in every environment suite we consider using remarkably simple elements. The video above shows the complex behavior we learn using just supervised learning with a depth-two MLP: no TD learning, data reweighting, or Transformers!

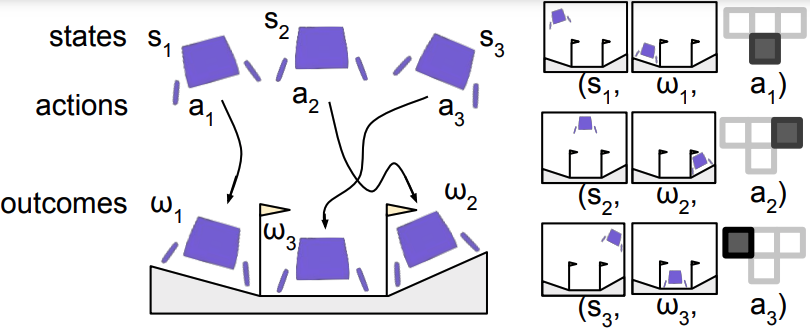

Let's begin with an overview of the algorithm we study. While a number of prior works (Kumar et al., 2019; Ghosh et al., 2021; Chen et al., 2021) share the same core algorithm, it lacks a common name. To fill this gap, we propose the term RL via Supervised Learning (RvS). We are not proposing any new algorithm but rather showing how prior work can be viewed from a unifying framework; see Figure 1.

Figure 1. (Left) A replay buffer of experience. (Right) Hindsight relabelled training data.

RL via Supervised Learning takes as input a replay buffer of experience including states, actions, and outcomes. The outcomes can be an arbitrary function of the trajectory, including a goal state, reward-to-go, or language description. Then, RvS performs hindsight relabeling to generate a dataset of state, action, and outcome triplets. The intuition is that the actions that are observed provide supervision for the outcomes that are reached. With this training dataset, RvS performs supervised learning by maximizing the likelihood of the actions given the states and outcomes. This yields a conditional policy that can condition on arbitrary outcomes at test time.
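To make the relabeling step concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the code from our repository: the trajectory format (a list of `(state, action, reward)` tuples) and the function names `relabel_reward_to_go` and `relabel_goals` are hypothetical, and the reward-to-go here is a plain undiscounted sum of future rewards.

```python
from typing import List, Tuple

# Hypothetical trajectory format: a list of (state, action, reward) steps.
State = Tuple[float, ...]
Step = Tuple[State, int, float]


def relabel_reward_to_go(trajectory: List[Step]) -> List[Tuple[State, int, float]]:
    """Pair each (state, action) with the sum of rewards from that step onward."""
    triplets = []
    reward_to_go = 0.0
    # Walk backwards so the running sum is the reward-to-go at each step.
    for state, action, reward in reversed(trajectory):
        reward_to_go += reward
        triplets.append((state, action, reward_to_go))
    triplets.reverse()
    return triplets


def relabel_goals(trajectory: List[Step]) -> List[Tuple[State, int, State]]:
    """Pair each (state, action) with every state reached later in the trajectory."""
    triplets = []
    for t, (state, action, _) in enumerate(trajectory):
        for future_state, _, _ in trajectory[t + 1:]:
            triplets.append((state, action, future_state))
    return triplets


# Toy trajectory with three steps (illustrative values only).
trajectory = [((0.0,), 1, 1.0), ((1.0,), 1, 2.0), ((2.0,), 0, 3.0)]
reward_triplets = relabel_reward_to_go(trajectory)
goal_triplets = relabel_goals(trajectory)
```

Either list of triplets can then serve as a supervised dataset: the inputs are the state and outcome, and the label is the action that was actually taken.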

In our experiments, we focus on the following three key questions.

- Which design decisions are critical for RL via supervised learning?

- How well does RL via supervised learning actually work? We can do RL via supervised learning, but would using a different offline RL algorithm perform better?

- What type of outcome variable should we condition on? (And does it even matter?)

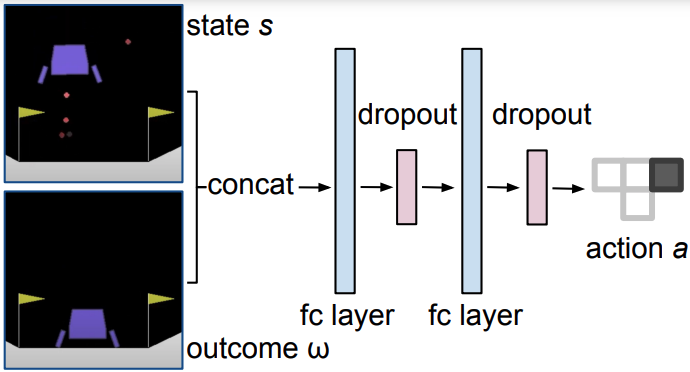

Figure 2. Our RvS architecture. A depth-two MLP suffices in every environment suite we consider.

We get good performance using just a depth-two multi-layer perceptron. In fact, this is competitive with all previously published architectures we're aware of, including a Transformer sequence model. We just concatenate the state and outcome before passing them through two fully-connected layers (see Figure 2). The keys that we identify are having a network with large capacity (we use width 1024) as well as dropout in some environments. We find that this works well without reweighting the training data or performing any additional regularization.
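The architecture described above can be sketched as a forward pass in NumPy. This is a rough illustration rather than our released implementation. The class name `RvSPolicy`, the ReLU activations, the linear output head after the two width-1024 layers, the initialization scheme, and the dropout rate of 0.1 are all assumptions made for the example; only the concatenation, the two fully-connected layers, the width, and the use of dropout come from the text.

```python
import numpy as np


class RvSPolicy:
    """Sketch of an outcome-conditioned MLP policy (forward pass only)."""

    def __init__(self, state_dim, outcome_dim, action_dim,
                 width=1024, dropout=0.1, seed=0):
        self.rng = np.random.default_rng(seed)
        self.dropout = dropout  # assumed rate; not specified in this post
        sizes = [state_dim + outcome_dim, width, width, action_dim]
        # Assumed He-style initialization for the ReLU layers.
        self.weights = [
            self.rng.normal(0.0, np.sqrt(2.0 / fan_in), size=(fan_in, fan_out))
            for fan_in, fan_out in zip(sizes[:-1], sizes[1:])
        ]
        self.biases = [np.zeros(fan_out) for fan_out in sizes[1:]]

    def forward(self, state, outcome, train=False):
        # Concatenate the state and the conditioning outcome.
        h = np.concatenate([state, outcome], axis=-1)
        # Two fully-connected hidden layers with ReLU and optional dropout.
        for w, b in zip(self.weights[:-1], self.biases[:-1]):
            h = np.maximum(h @ w + b, 0.0)
            if train and self.dropout > 0.0:
                keep = self.rng.random(h.shape) >= self.dropout
                h = h * keep / (1.0 - self.dropout)
        # Assumed linear head that maps to the action dimension.
        return h @ self.weights[-1] + self.biases[-1]


# Usage with illustrative dimensions and a small width to keep it fast.
policy = RvSPolicy(state_dim=17, outcome_dim=1, action_dim=6, width=32)
states = np.zeros((4, 17))
target_outcomes = np.ones((4, 1))
actions = policy.forward(states, target_outcomes)
```

Training would fit these outputs to the logged actions with a standard supervised loss; that part is omitted here to keep the sketch short.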

After identifying these key design decisions, we study the overall performance of RvS in comparison to previous methods. This blog post will overview results from two of the suites we consider in the paper.

The first suite is D4RL Gym, which contains the standard MuJoCo halfcheetah, hopper, and walker robots. The challenge in D4RL Gym is to learn locomotion policies from offline datasets of varying quality. For example, one offline dataset contains rollouts from a totally random policy. Another dataset contains rollouts from a “medium” policy trained partway to convergence, while another dataset is a mixture of rollouts from medium and expert policies.

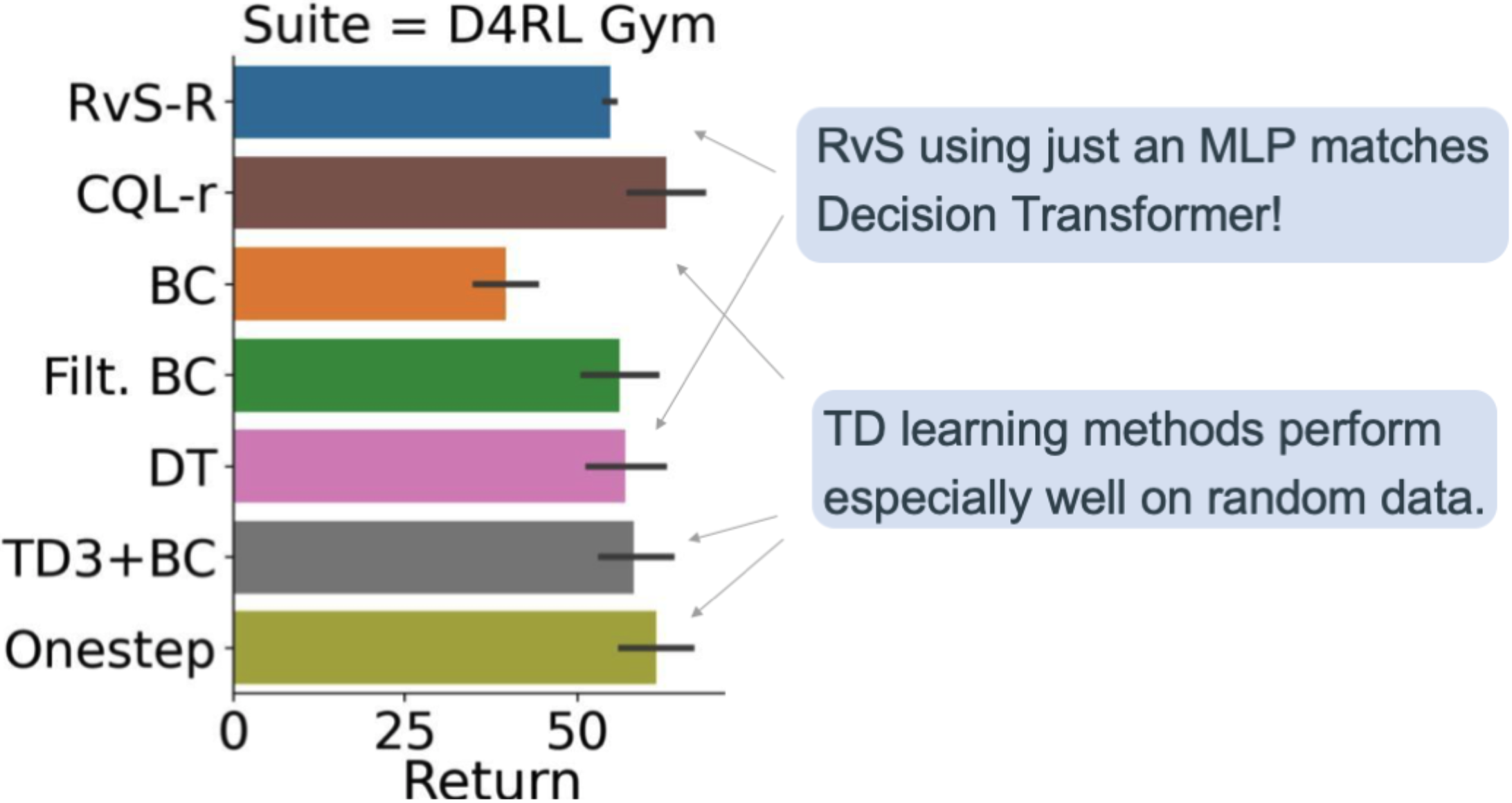

Figure 3. Overall performance in D4RL Gym.

Figure 3 shows our results in D4RL Gym. RvS-R is our implementation of RvS conditioned on rewards (illustrated in Figure 2). On average across all 12 tasks in the suite, we see that RvS-R, which uses just a depth-two MLP, is competitive with Decision Transformer (DT; Chen et al., 2021). We also see that RvS-R is competitive with the methods that use temporal difference (TD) learning, including CQL-R (Kumar et al., 2020), TD3+BC (Fujimoto et al., 2021), and Onestep (Brandfonbrener et al., 2021). However, the TD learning methods have an edge because they perform especially well on the random datasets. This suggests that one might prefer TD learning over RvS when dealing with low-quality data.

The second suite is D4RL AntMaze. This suite requires a quadruped to navigate to a target location in mazes of varying size. The challenge of AntMaze is that many trajectories contain only pieces of the full path from the start to the goal location. Learning from these trajectories requires stitching together these pieces to get the full, successful path.

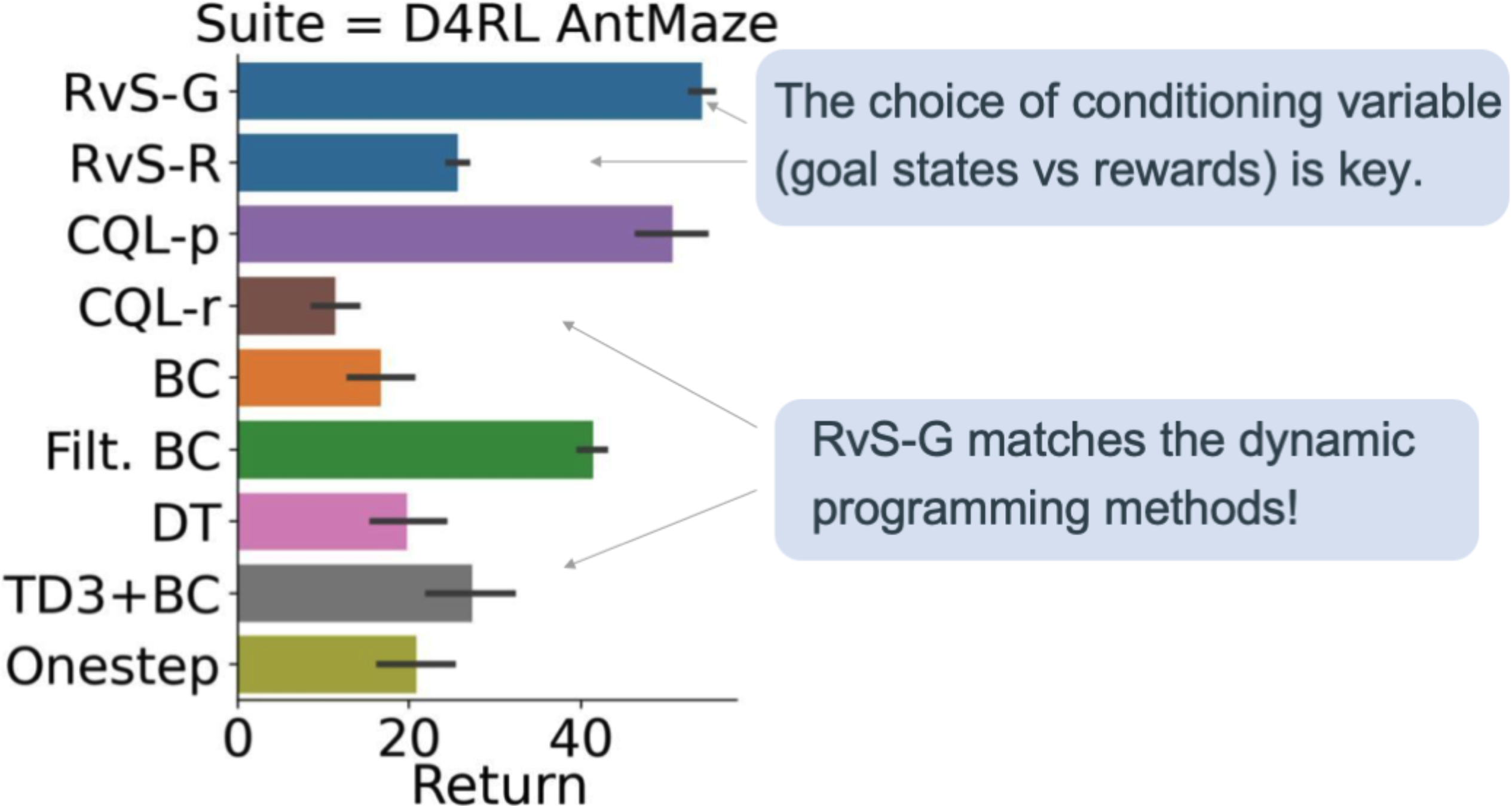

Figure 4. Overall performance in D4RL AntMaze.

Our AntMaze results in Figure 4 highlight the importance of the conditioning variable. Whereas conditioning RvS on rewards (RvS-R) was the best choice of the conditioning variable in D4RL Gym, we find that in D4RL AntMaze, it is much better to condition RvS on $(x, y)$ goal coordinates (RvS-G). When we do this, we see that RvS-G compares favorably to TD learning! This was surprising to us because TD learning explicitly performs dynamic programming using the Bellman equation.

Why does goal-conditioning perform better than reward conditioning in this setting? Recall that AntMaze is designed so that simple imitation is not enough: optimal methods must stitch together parts of suboptimal trajectories to figure out how to reach the goal. In principle, TD learning can solve this with temporal compositionality. With the Bellman equation, TD learning can combine a path from A to B with a path from B to C, yielding a path from A to C. RvS-R, along with other behavior cloning methods, does not benefit from this temporal compositionality. We hypothesize that RvS-G, on the other hand, benefits from spatial compositionality. This is because, in AntMaze, the policy needed to reach one goal is similar to the policy needed to reach a nearby goal. We see correspondingly that RvS-G beats RvS-R.
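The temporal compositionality argument can be illustrated with a toy tabular example. The sketch below is not any of the TD methods we compare against; it is a hypothetical, minimal Bellman backup over two logged transitions, where one trajectory goes from A to B and a separate trajectory goes from B to the goal C. The function name `fitted_q`, the discount of 0.9, and the reward values are assumptions chosen for illustration.

```python
# Logged transitions: (state, action, reward, next_state, done).
# No single trajectory travels from A all the way to C.
transitions = [
    ("A", "right", 0.0, "B", False),  # from trajectory 1: A -> B
    ("B", "right", 1.0, "C", True),   # from trajectory 2: B -> C (goal)
]


def fitted_q(transitions, gamma=0.9, sweeps=10):
    """Repeated Bellman backups restricted to transitions seen in the data."""
    q = {}
    for _ in range(sweeps):
        for state, action, reward, next_state, done in transitions:
            if done:
                next_value = 0.0
            else:
                # Best known value among logged actions at the next state.
                next_value = max(
                    (v for (s, _), v in q.items() if s == next_state),
                    default=0.0,
                )
            q[(state, action)] = reward + gamma * next_value
    return q


q = fitted_q(transitions)
# q[("A", "right")] is positive even though no trajectory reached C from A:
# the backup propagated the goal reward from B back to A.
```

A reward-conditioned imitation method sees only that the A to B trajectory earned zero reward, so it has no comparable signal that moving right from A is a step toward the goal.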

Of course, conditioning RvS-G on $(x, y)$ coordinates represents a form of prior knowledge about the task. But this also highlights an important consideration for RvS methods: the choice of conditioning information is critically important, and it may depend significantly on the task.

Overall, we find that in a diverse set of environments, RvS works well without needing any fancy algorithmic tricks (such as data reweighting) or fancy architectures (such as Transformers). Indeed, our simple RvS setup can match, and even outperform, methods that utilize (conservative) TD learning. The keys for RvS that we identify are model capacity, regularization, and the conditioning variable.

In our work, we handcraft the conditioning variable, such as $(x, y)$ coordinates in AntMaze. Beyond the standard offline RL setup, this introduces an additional assumption, namely, that we have some prior information about the structure of the task. We think an exciting direction for future work would be to remove this assumption by automating the learning of the goal space.

We packaged our open-source code so that it can automatically handle all the dependencies for you. After downloading the code, you can run these five commands to reproduce our experiments:

```shell
docker build -t rvs:latest .
docker run -it --rm -v $(pwd):/rvs rvs:latest bash
cd rvs
pip install -e .
bash experiments/launch_gym_rvs_r.sh
```

This post is based on the paper:

RvS: What is Essential for Offline RL via Supervised Learning?

Scott Emmons, Benjamin Eysenbach, Ilya Kostrikov, Sergey Levine

International Conference on Learning Representations (ICLR), 2022

[Paper] [Code]
