
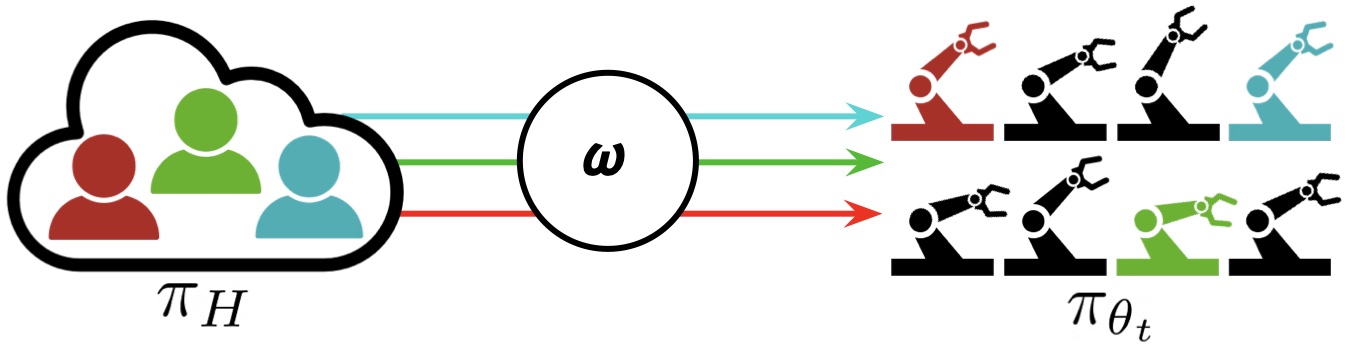

Figure 1: “Interactive Fleet Learning” (IFL) refers to robot fleets in industry and academia that fall back on human teleoperators when necessary and continually learn from them over time.

In the last few years we have seen an exciting development in robotics and artificial intelligence: large fleets of robots have left the lab and entered the real world. Waymo, for example, has over 700 self-driving cars operating in Phoenix and San Francisco and is currently expanding to Los Angeles. Other industrial deployments of robot fleets include applications like e-commerce order fulfillment at Amazon and Ambi Robotics as well as food delivery at Nuro and Kiwibot.

Commercial and industrial deployments of robot fleets: package delivery (top left), food delivery (bottom left), e-commerce order fulfillment at Ambi Robotics (top right), autonomous taxis at Waymo (bottom right).

These robots use recent advances in deep learning to operate autonomously in unstructured environments. By pooling data from all robots in the fleet, the entire fleet can efficiently learn from the experience of each individual robot. Furthermore, due to advances in cloud robotics, the fleet can offload data, memory, and computation (e.g., training of large models) to the cloud via the Internet. This approach is known as “Fleet Learning,” a term popularized by Elon Musk in 2016 press releases about Tesla Autopilot and used in press communications by Toyota Research Institute, Wayve AI, and others. A robot fleet is a modern analogue of a fleet of ships, where the word fleet has an etymology tracing back to flēot (‘ship’) and flēotan (‘float’) in Old English.

Data-driven approaches like fleet learning, however, face the problem of the “long tail”: the robots inevitably encounter new scenarios and edge cases that aren’t represented in the dataset. Naturally, we can’t expect the future to be the same as the past! How, then, can these robotics companies ensure sufficient reliability for their services?

One answer is to fall back on remote humans over the Internet, who can interactively take control and “tele-operate” the system when the robot policy is unreliable during task execution. Teleoperation has a rich history in robotics: the world’s first robots were teleoperated during WWII to handle radioactive materials, and the Telegarden pioneered robot control over the Internet in 1994. With continual learning, the human teleoperation data from these interventions can iteratively improve the robot policy and reduce the robots’ reliance on their human supervisors over time. Rather than a discrete jump to full robot autonomy, this strategy offers a continuous alternative that approaches full autonomy over time while simultaneously enabling reliability in robot systems today.

The use of human teleoperation as a fallback mechanism is increasingly popular in modern robotics companies: Waymo calls it “fleet response,” Zoox calls it “TeleGuidance,” and Amazon calls it “continual learning.” Last year, a software platform for remote driving called Phantom Auto was recognized by Time Magazine as one of their Top 10 Inventions of 2022. And just last month, John Deere acquired SparkAI, a startup that develops software for resolving edge cases with humans in the loop.

A remote human teleoperator at Phantom Auto, a software platform for enabling remote driving over the Internet.

Despite this growing trend in industry, however, there has been comparatively little focus on this topic in academia. As a result, robotics companies have had to rely on ad hoc solutions for determining when their robots should cede control. The closest analogue in academia is interactive imitation learning (IIL), a paradigm in which a robot intermittently cedes control to a human supervisor and learns from these interventions over time. There have been a number of IIL algorithms in recent years for the single-robot, single-human setting, including DAgger and variants such as HG-DAgger, SafeDAgger, EnsembleDAgger, and ThriftyDAgger; nevertheless, when and how to switch between robot and human control is still an open problem. This is even less understood when the notion is generalized to robot fleets, with multiple robots and multiple human supervisors.

IFL Formalism and Algorithms

To this end, in a recent paper at the Conference on Robot Learning we introduced the paradigm of Interactive Fleet Learning (IFL), the first formalism in the literature for interactive learning with multiple robots and multiple humans. As we’ve seen that this phenomenon already occurs in industry, we can now use the phrase “interactive fleet learning” as unified terminology for robot fleet learning that falls back on human control, rather than keep track of the names of every individual corporate solution (“fleet response”, “TeleGuidance”, etc.). IFL scales up robot learning with four key components:

- On-demand supervision. Since humans cannot effectively monitor the execution of multiple robots at once and are prone to fatigue, the allocation of robots to humans in IFL is automated by some allocation policy $\omega$. Supervision is requested “on-demand” by the robots rather than placing the burden of continuous monitoring on the humans.

- Fleet supervision. On-demand supervision enables effective allocation of limited human attention to large robot fleets. IFL allows the number of robots to significantly exceed the number of humans (e.g., by a factor of 10:1 or more).

- Continual learning. Each robot in the fleet can learn from its own mistakes as well as the mistakes of the other robots, allowing the amount of required human supervision to taper off over time.

- The Internet. Thanks to mature and ever-improving Internet technology, the human supervisors do not need to be physically present. Modern computer networks enable real-time remote teleoperation at vast distances.

In the Interactive Fleet Learning (IFL) paradigm, M humans are allocated to the robots that need the most help in a fleet of N robots (where N can be much larger than M). The robots share policy $\pi_{\theta_t}$ and learn from human interventions over time.

We assume that the robots share a common control policy $\pi_{\theta_t}$ and that the humans share a common control policy $\pi_H$. We also assume that the robots operate in independent environments with identical state and action spaces (but not identical states). Unlike a robot swarm of typically low-cost robots that coordinate to achieve a common objective in a shared environment, a robot fleet simultaneously executes a shared policy in distinct parallel environments (e.g., different bins on an assembly line).

The goal in IFL is to find an optimal supervisor allocation policy $\omega$, a mapping from $\mathbf{s}^t$ (the state of all robots at time t) and the shared policy $\pi_{\theta_t}$ to a binary matrix that indicates which human will be assigned to which robot at time t. The IFL objective is a novel metric we call the “return on human effort” (ROHE):

\[\max_{\omega \in \Omega} \mathbb{E}_{\tau \sim p_{\omega, \theta_0}(\tau)} \left[\frac{M}{N} \cdot \frac{\sum_{t=0}^T \bar{r}( \mathbf{s}^t, \mathbf{a}^t)}{1+\sum_{t=0}^T \|\omega(\mathbf{s}^t, \pi_{\theta_t}, \cdot) \|^2 _F} \right]\]

where the numerator is the total reward across robots and timesteps and the denominator is the total amount of human actions across robots and timesteps. Intuitively, the ROHE measures the performance of the fleet normalized by the total human supervision required. See the paper for more of the mathematical details.
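To make the metric concrete, here is a minimal sketch of how the ROHE of a single recorded rollout could be computed. The function name `rohe`, the nested-list layout, and the N-by-M shape of the allocation matrix are assumptions made for illustration; they are not the interface of the IFL Benchmark. The objective above is an expectation over trajectories, so this value is only a one-sample estimate.

```python
def rohe(rewards, allocations, num_humans):
    """Return on human effort for one recorded rollout (illustrative only).

    rewards[t][i]        -- reward earned by robot i at timestep t
    allocations[t][i][j] -- 1 if robot i is assigned to human j at timestep t,
                            else 0 (assumed N x M layout)
    """
    num_robots = len(rewards[0])
    # Numerator: total reward across all robots and timesteps.
    total_reward = sum(sum(step) for step in rewards)
    # Denominator: for a binary matrix, the squared Frobenius norm is simply
    # the number of ones, i.e. the number of human actions at that timestep.
    human_effort = sum(
        entry * entry for step in allocations for row in step for entry in row
    )
    return (num_humans / num_robots) * total_reward / (1 + human_effort)


# Toy rollout: N = 4 robots, M = 2 humans, 2 timesteps.
rewards = [[1, 1, 0, 1], [1, 1, 1, 1]]        # total reward = 7
allocations = [
    [[0, 0], [0, 0], [1, 0], [0, 0]],         # robot 2 gets human 0 at t = 0
    [[0, 0], [0, 0], [0, 0], [0, 0]],         # no supervision at t = 1
]
print(rohe(rewards, allocations, num_humans=2))  # 0.5 * 7 / (1 + 1) = 1.75
```

The `1 +` in the denominator keeps the ratio finite for a rollout that needs no human help at all.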

Using this formalism, we can now instantiate and compare IFL algorithms (i.e., allocation policies) in a principled way. We propose a family of IFL algorithms called Fleet-DAgger, where the policy learning algorithm is interactive imitation learning and each Fleet-DAgger algorithm is parameterized by a unique priority function $\hat p: (s, \pi_{\theta_t}) \rightarrow [0, \infty)$ that each robot in the fleet uses to assign itself a priority score. Similar to scheduling theory, higher priority robots are more likely to receive human attention. Fleet-DAgger is general enough to model a wide range of IFL algorithms, including IFL adaptations of existing single-robot, single-human IIL algorithms such as EnsembleDAgger and ThriftyDAgger. Note, however, that the IFL formalism isn’t limited to Fleet-DAgger: policy learning could be performed with a reinforcement learning algorithm like PPO, for instance.
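The idea of priority-based allocation can be sketched as a simple greedy rule: rank the robots by their self-assigned priority scores and hand the M available humans to the highest-ranked robots with nonzero priority. This is a simplified illustration, not the Fleet-DAgger implementation; the function name `allocate` and the N-by-M output layout are hypothetical, and the sketch ignores practical details such as how long a human stays with a robot once assigned.

```python
def allocate(priorities, num_humans):
    """Greedy priority-based allocation (illustrative sketch only).

    priorities[i] is the nonnegative score robot i assigned itself.
    Returns an N x M binary matrix whose entry [i][j] is 1 when
    robot i is assigned to human j.
    """
    num_robots = len(priorities)
    allocation = [[0] * num_humans for _ in range(num_robots)]
    # Rank robots from highest to lowest priority. sorted() is stable,
    # so ties are broken in favor of the lower robot index.
    ranked = sorted(range(num_robots), key=lambda i: -priorities[i])
    next_human = 0
    for robot in ranked:
        # Stop once every human is busy or the remaining robots need no help.
        if next_human >= num_humans or priorities[robot] <= 0:
            break
        allocation[robot][next_human] = 1
        next_human += 1
    return allocation


# N = 4 robots, M = 2 humans: robots 1 and 3 have the highest priority.
print(allocate([0.0, 0.9, 0.2, 0.5], num_humans=2))
# [[0, 0], [1, 0], [0, 0], [0, 1]]
```

Different choices of priority function, for example one based on policy uncertainty, would plug into the same rule by changing only how `priorities` is computed.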

IFL Benchmark and Experiments

To determine how to best allocate limited human attention to large robot fleets, we need to be able to empirically evaluate and compare different IFL algorithms. To this end, we introduce the IFL Benchmark, an open-source Python toolkit available on Github to facilitate the development and standardized evaluation of new IFL algorithms. We extend NVIDIA Isaac Gym, a highly optimized software library for end-to-end GPU-accelerated robot learning released in 2021, without which the simulation of hundreds or thousands of learning robots would be computationally intractable. Using the IFL Benchmark, we run large-scale simulation experiments with N = 100 robots, M = 10 algorithmic humans, 5 IFL algorithms, and 3 high-dimensional continuous control environments (Figure 1, left).

We also evaluate IFL algorithms in a real-world image-based block pushing task with N = 4 robot arms and M = 2 remote human teleoperators (Figure 1, right). The 4 arms belong to 2 bimanual ABB YuMi robots operating simultaneously in 2 separate labs about 1 kilometer apart, and remote humans in a third physical location perform teleoperation through a keyboard interface when requested. Each robot pushes a cube toward a unique goal position randomly sampled in the workspace; the goals are programmatically generated in the robots’ overhead image observations and automatically resampled when the previous goals are reached. Physical experiment results suggest trends that are approximately consistent with those observed in the benchmark environments.

Takeaways and Future Directions

To address the gap between the theory and practice of robot fleet learning as well as facilitate future research, we introduce new formalisms, algorithms, and benchmarks for Interactive Fleet Learning. Since IFL does not dictate a specific form or architecture for the shared robot control policy, it can be flexibly synthesized with other promising research directions. For instance, diffusion policies, recently demonstrated to gracefully handle multimodal data, can be used in IFL to allow heterogeneous human supervisor policies. Alternatively, multi-task language-conditioned Transformers like RT-1 and PerAct can be effective “data sponges” that enable the robots in the fleet to perform heterogeneous tasks despite sharing a single policy. The systems aspect of IFL is another compelling research direction: recent developments in cloud and fog robotics enable robot fleets to offload all supervisor allocation, model training, and crowdsourced teleoperation to centralized servers in the cloud with minimal network latency.

While Moravec’s Paradox has so far prevented robotics and embodied AI from fully enjoying the recent spectacular success that Large Language Models (LLMs) like GPT-4 have demonstrated, the “bitter lesson” of LLMs is that supervised learning at unprecedented scale is what ultimately leads to the emergent properties we observe. Since we don’t yet have a supply of robot control data nearly as plentiful as all the text and image data on the Internet, the IFL paradigm offers one path forward for scaling up supervised robot learning and deploying robot fleets reliably in today’s world.

This post is based on the paper “Fleet-DAgger: Interactive Robot Fleet Learning with Scalable Human Supervision” by Ryan Hoque, Lawrence Chen, Satvik Sharma, Karthik Dharmarajan, Brijen Thananjeyan, Pieter Abbeel, and Ken Goldberg, presented at the Conference on Robot Learning (CoRL) 2022. For more details, see the paper on arXiv, CoRL presentation video on YouTube, open-source codebase on Github, high-level summary on Twitter, and project website.

If you would like to cite this article, please use the following bibtex:

@article{ifl_blog,
    title={Interactive Fleet Learning},
    author={Hoque, Ryan},
    url={https://bair.berkeley.edu/blog/2023/04/06/ifl/},
    journal={Berkeley Artificial Intelligence Research Blog},
    year={2023}
}
