
Wednesday, May 10th was an exciting day for the Google Research community as we watched the results of months and years of our foundational and applied work get announced on the Google I/O stage. With the quick pace of announcements on stage, it can be difficult to convey the substantial effort and unique innovations that underlie the technologies we presented. So today, we’re excited to reveal more about the research efforts behind some of the many exciting announcements at this year’s I/O.

PaLM 2

Our next-generation large language model (LLM), PaLM 2, is built on advances in compute-optimal scaling, scaled instruction fine-tuning and an improved dataset mixture. By fine-tuning and instruction-tuning the model for different purposes, we have been able to integrate state-of-the-art capabilities into over 25 Google products and features, where it is already helping to inform, assist and delight users. For example:

- Bard is an early experiment that lets you collaborate with generative AI and helps to boost productivity, accelerate ideas and fuel curiosity. It builds on advances in deep learning efficiency and leverages reinforcement learning from human feedback to provide more relevant responses and increase the model’s ability to follow instructions. Bard is now available in 180 countries, where users can interact with it in English, Japanese and Korean, and thanks to the multilingual capabilities afforded by PaLM 2, support for 40 languages is coming soon.

- With Search Generative Experience we’re taking more of the work out of searching, so you’ll be able to understand a topic faster, uncover new viewpoints and insights, and get things done more easily. As part of this experiment, you’ll see an AI-powered snapshot of key information to consider, with links to dig deeper.

- MakerSuite is an easy-to-use prototyping environment for the PaLM API, powered by PaLM 2. In fact, internal user engagement with early prototypes of MakerSuite accelerated the development of our PaLM 2 model itself. MakerSuite grew out of research focused on prompting tools, or tools explicitly designed for customizing and controlling LLMs. This line of research includes PromptMaker (precursor to MakerSuite), and AI Chains and PromptChainer (one of the first research efforts demonstrating the utility of LLM chaining).

- Project Tailwind also made use of early research prototypes of MakerSuite to develop features to help writers and researchers explore ideas and improve their prose; its AI-first notebook prototype used PaLM 2 to allow users to ask questions of the model grounded in documents they define.

- Med-PaLM 2 is our state-of-the-art medical LLM, built on PaLM 2. Med-PaLM 2 achieved 86.5% performance on U.S. Medical Licensing Exam–style questions, illustrating its exciting potential for health. We’re now exploring multimodal capabilities to synthesize inputs like X-rays.

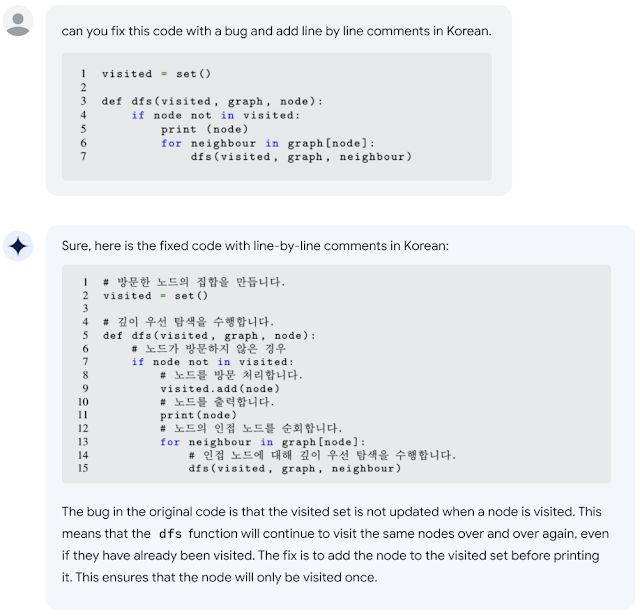

- Codey is a version of PaLM 2 fine-tuned on source code to function as a developer assistant. It supports a broad range of Code AI features, including code completions, code explanation, bug fixing, source code migration, error explanations, and more. Codey is available through our trusted tester program via IDEs (Colab, Android Studio, Duet AI for Cloud, Firebase) and via a 3P-facing API.

Perhaps even more exciting for developers, we have opened up the PaLM APIs & MakerSuite to provide the community opportunities to innovate using this groundbreaking technology.

PaLM 2 has advanced coding capabilities that enable it to find code errors and make suggestions in a number of different languages.

Imagen

Our Imagen family of image generation and editing models builds on advances in large Transformer-based language models and diffusion models. This family of models is being incorporated into multiple Google products, including:

- Image generation in Google Slides and Android’s Generative AI wallpaper are powered by our text-to-image generation features.

- Google Cloud’s Vertex AI enables image generation, image editing, image upscaling and fine-tuning to help enterprise customers meet their business needs.

- I/O Flip, a digital take on a classic card game, features Google developer mascots on cards that were entirely AI generated. This game showcased a fine-tuning technique called DreamBooth for adapting pre-trained image generation models. Using just a handful of images as inputs for fine-tuning, it allows users to generate personalized images in minutes. With DreamBooth, users can synthesize a subject in diverse scenes, poses, views, and lighting conditions that don’t appear in the reference images.

I/O Flip presents custom card decks designed using DreamBooth.

Phenaki

Phenaki, Google’s Transformer-based text-to-video generation model, was featured in the I/O pre-show. Phenaki is a model that can synthesize realistic videos from textual prompt sequences by leveraging two main components: an encoder-decoder model that compresses videos to discrete embeddings and a transformer model that translates text embeddings to video tokens.
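To make the idea of compressing video to discrete embeddings more concrete, here is a minimal sketch of vector quantization, the general technique of replacing each continuous embedding with the index of its nearest entry in a codebook. It is an illustration under stated assumptions, not Phenaki’s actual tokenizer: the two-dimensional "patch embeddings", the four-entry codebook and the function names are all hypothetical.

```python
# Toy vector quantization: continuous embeddings -> discrete token ids.
# CODEBOOK, PATCHES and all function names are hypothetical and chosen
# for illustration only; a real model learns its codebook from data.
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two vectors of equal length."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(patches: Sequence[Sequence[float]],
             codebook: Sequence[Sequence[float]]) -> List[int]:
    """Return, for each patch embedding, the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)), key=lambda k: squared_distance(p, codebook[k]))
        for p in patches
    ]


def dequantize(tokens: Sequence[int],
               codebook: Sequence[Sequence[float]]) -> List[List[float]]:
    """Look each token id up in the codebook to get an approximate embedding back."""
    return [list(codebook[t]) for t in tokens]


CODEBOOK = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
PATCHES = [(0.1, 0.2), (0.9, 0.1), (0.2, 0.8), (0.7, 0.9)]

tokens = quantize(PATCHES, CODEBOOK)
reconstruction = dequantize(tokens, CODEBOOK)
```

Once video is represented as a sequence of integer tokens like this, a second model can be trained to predict those tokens from text, which is the role the paragraph above assigns to the transformer component.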


ARCore and the Scene Semantics API

Among the new features of ARCore announced by the AR team at I/O, the Scene Semantics API can recognize pixel-wise semantics in an outdoor scene. This helps users create custom AR experiences based on the features in the surrounding area. This API is powered by an outdoor semantic segmentation model that leverages our recent work on the DeepLab architecture and an egocentric outdoor scene understanding dataset. The latest ARCore release also includes an improved monocular depth model that provides higher accuracy in outdoor scenes.

The Scene Semantics API uses a DeepLab-based semantic segmentation model to provide accurate pixel-wise labels in an outdoor scene.

Chirp

Chirp is Google’s family of state-of-the-art Universal Speech Models trained on 12 million hours of speech to enable automatic speech recognition (ASR) for 100+ languages. The models can perform ASR on under-resourced languages, such as Amharic, Cebuano, and Assamese, in addition to widely spoken languages like English and Mandarin. Chirp is able to cover such a wide variety of languages by leveraging self-supervised learning on an unlabeled multilingual dataset with fine-tuning on a smaller set of labeled data. Chirp is now available in the Google Cloud Speech-to-Text API, allowing users to perform inference on the model through a simple interface. You can get started with Chirp here.

MusicLM

At I/O, we launched MusicLM, a text-to-music model that generates 20 seconds of music from a text prompt. You can try it yourself on AI Test Kitchen, or see it featured during the I/O preshow, where electronic musician and composer Dan Deacon used MusicLM in his performance.

MusicLM, which consists of models powered by AudioLM and MuLAN, can make music (from text, humming, images or video) and musical accompaniments to singing. AudioLM generates high-quality audio with long-term consistency. It maps audio to a sequence of discrete tokens and casts audio generation as a language modeling task. To synthesize longer outputs efficiently, it uses a novel approach we’ve developed called SoundStorm.
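The framing of audio generation as language modeling over discrete tokens can be sketched with a deliberately tiny example. The code below is an assumption-laden toy, not AudioLM: it uses uniform amplitude quantization in place of a learned audio tokenizer, a bigram count table in place of a Transformer, and greedy decoding in place of sampling. All names and the sample waveform are hypothetical.

```python
# Toy "audio as language modeling": quantize samples into tokens, fit a
# bigram model over the tokens, then generate new tokens autoregressively.
# Hypothetical stand-ins only; real systems use learned tokenizers and
# Transformer language models.
from collections import Counter, defaultdict
from typing import Dict, List


def tokenize(samples: List[float], levels: int = 4) -> List[int]:
    """Uniformly quantize samples in [-1, 1] into integer tokens 0..levels-1."""
    tokens = []
    for s in samples:
        clipped = max(-1.0, min(1.0, s))
        tokens.append(min(levels - 1, int((clipped + 1.0) / 2.0 * levels)))
    return tokens


def detokenize(tokens: List[int], levels: int = 4) -> List[float]:
    """Map each token back to the centre of its amplitude bin."""
    return [-1.0 + (t + 0.5) * 2.0 / levels for t in tokens]


def fit_bigram(tokens: List[int]) -> Dict[int, Counter]:
    """Count how often each token is followed by each other token."""
    counts: Dict[int, Counter] = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts: Dict[int, Counter], start: int, length: int) -> List[int]:
    """Greedy autoregressive decoding: always pick the most frequent follower."""
    out = [start]
    while len(out) < length:
        followers = counts.get(out[-1])
        if not followers:
            break  # no continuation was ever observed for this token
        out.append(followers.most_common(1)[0][0])
    return out


# A rising sawtooth, repeated four times, as a stand-in for recorded audio.
WAVE = [-0.9, -0.3, 0.3, 0.9] * 4

train_tokens = tokenize(WAVE)
model = fit_bigram(train_tokens)
generated = generate(model, start=0, length=8)
waveform = detokenize(generated)
```

On this repeating input the bigram table learns that each token has exactly one follower, so the generated sequence reproduces the sawtooth pattern; richer models capture much longer-range structure.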

Universal Translator dubbing

Our dubbing efforts leverage dozens of ML technologies to translate the full expressive range of video content, making videos accessible to audiences across the world. These technologies have been used to dub videos across a variety of products and content types, including educational content, advertising campaigns, and creator content, with more to come. We use deep learning technology to achieve voice preservation and lip matching and enable high-quality video translation. We’ve built this product to include human review for quality and safety checks to help prevent misuse, and we make it accessible only to authorized partners.

AI for global societal good

We are applying our AI technologies to solve some of the biggest global challenges, like mitigating climate change, adapting to a warming planet and improving human health and wellbeing. For example:

- Traffic engineers use our Green Light recommendations to reduce stop-and-go traffic at intersections and improve the flow of traffic in cities from Bangalore to Rio de Janeiro and Hamburg. Green Light models each intersection, analyzing traffic patterns to develop recommendations that make traffic lights more efficient, for example by better synchronizing timing between adjacent lights, or adjusting the “green time” for a given street and direction.

- We’ve also expanded global coverage on the Flood Hub to 80 countries, as part of our efforts to predict riverine floods and alert people who are about to be impacted before disaster strikes. Our flood forecasting efforts rely on hydrological models informed by satellite observations, weather forecasts and in-situ measurements.
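As a rough illustration of what synchronizing timing between adjacent lights can mean, the sketch below computes classic "green wave" offsets: each light turns green just as a vehicle travelling at a target speed arrives from the previous one. This is a textbook simplification, not Green Light’s method, and the distances, speed, cycle length and function name are hypothetical.

```python
# Toy "green wave" calculation for a one-way corridor of traffic lights.
# All numbers and names are hypothetical; real signal planning also has to
# handle cross traffic, queues, two-way flow and varying demand.
from typing import List


def green_wave_offsets(distances_m: List[float],
                       speed_mps: float,
                       cycle_s: float) -> List[float]:
    """Return the offset (seconds into the shared cycle) at which each light
    should turn green so that a vehicle leaving the first light at the start
    of its green reaches every later light as it turns green.

    distances_m[i] is the distance from light i to light i + 1.
    """
    offsets = [0.0]
    travel_time = 0.0
    for distance in distances_m:
        travel_time += distance / speed_mps
        offsets.append(travel_time % cycle_s)
    return offsets


# Four lights spaced 300 m, 450 m and 600 m apart, a 15 m/s target speed
# and a 60-second signal cycle shared by all lights.
offsets = green_wave_offsets([300.0, 450.0, 600.0], speed_mps=15.0, cycle_s=60.0)
```

The modulo keeps every offset inside one signal cycle: the fourth light is 90 seconds of travel from the first, which wraps around to 30 seconds into the next cycle.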

Technologies for inclusive and fair ML applications

With our continued investment in AI technologies, we are emphasizing responsible AI development with the goal of making our models and tools useful and impactful while also ensuring fairness, safety and alignment with our AI Principles. Some of these efforts were highlighted at I/O, including:

- The release of the Monk Skin Tone Examples (MST-E) Dataset to help practitioners gain a deeper understanding of the MST scale and train human annotators for more consistent, inclusive, and meaningful skin tone annotations. You can read more about this and other developments on our website. This is an advancement on the open source release of the Monk Skin Tone (MST) Scale we launched last year to enable developers to build products that are more inclusive and that better represent their diverse users.

- A new Kaggle competition (open until August 10th) in which the ML community is tasked with creating a model that can quickly and accurately identify American Sign Language (ASL) fingerspelling, in which each letter of a word is spelled out rapidly in ASL using a single hand rather than using the specific signs for entire words, and translate it into written text. Learn more about the fingerspelling Kaggle competition, which features a song from Sean Forbes, a deaf musician and rapper. We also showcased at I/O how the winning algorithm from the prior year’s competition powers PopSign, an ASL learning app for parents of deaf or hard of hearing children created by Georgia Tech and Rochester Institute of Technology (RIT).

Building the future of AI together

It’s inspiring to be part of a community of so many talented individuals who are leading the way in developing state-of-the-art technologies, responsible AI approaches and exciting user experiences. We are in the midst of a period of incredible and transformative change for AI. Stay tuned for more updates about the ways in which the Google Research community is boldly exploring the frontiers of these technologies and using them responsibly to benefit people’s lives around the world. We hope you’re as excited as we are about the future of AI technologies and we invite you to engage with our teams through the references, sites and tools that we’ve highlighted here.
