By Andre He, Vivek Myers

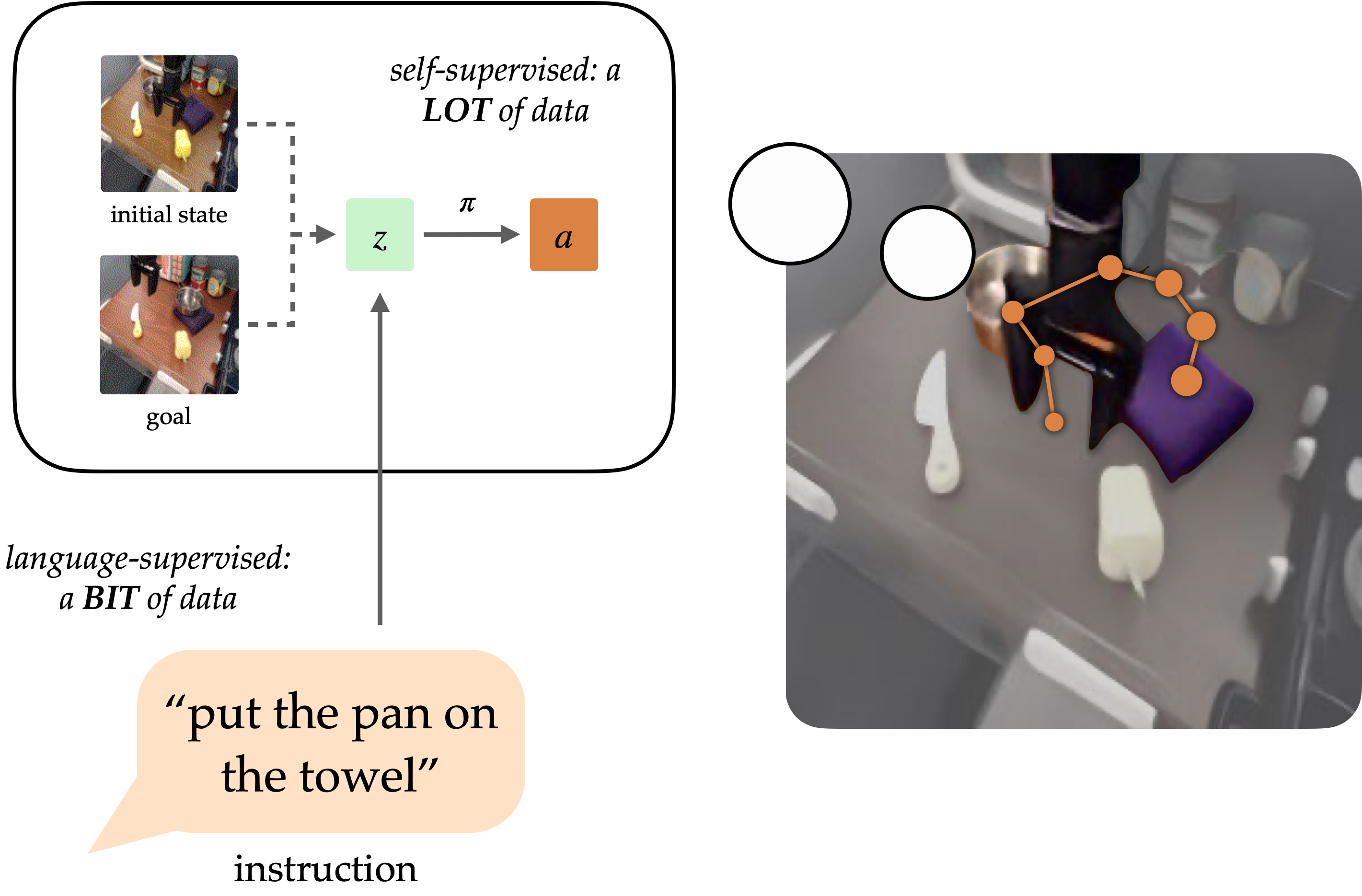

A longstanding goal of the field of robot learning has been to create generalist agents that can perform tasks for humans. Natural language has the potential to be an easy-to-use interface for humans to specify arbitrary tasks, but it is difficult to train robots to follow language instructions. Approaches like language-conditioned behavioral cloning (LCBC) train policies to directly imitate expert actions conditioned on language, but they require humans to annotate all training trajectories and generalize poorly across scenes and behaviors. Meanwhile, recent goal-conditioned approaches perform much better at general manipulation tasks, but do not enable easy task specification for human operators. How can we reconcile the ease of specifying tasks through LCBC-like approaches with the performance improvements of goal-conditioned learning?

Conceptually, an instruction-following robot requires two capabilities. It needs to ground the language instruction in the physical environment, and then be able to carry out a sequence of actions to complete the intended task. These capabilities do not need to be learned end-to-end from human-annotated trajectories alone, but can instead be learned separately from the appropriate data sources. Vision-language data from non-robot sources can help learn language grounding that generalizes to diverse instructions and visual scenes. Meanwhile, unlabeled robot trajectories can be used to train a robot to reach specific goal states, even when they are not associated with language instructions.

Conditioning on visual goals (i.e. goal images) provides complementary benefits for policy learning. As a form of task specification, goals are desirable for scaling because they can be freely generated through hindsight relabeling (any state reached along a trajectory can be a goal). This allows policies to be trained via goal-conditioned behavioral cloning (GCBC) on large amounts of unannotated and unstructured trajectory data, including data collected autonomously by the robot itself. Goals are also easier to ground since, as images, they can be directly compared pixel-by-pixel with other states.
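As a minimal sketch of hindsight relabeling, the snippet below turns one unlabeled trajectory into (observation, action, goal) training tuples for GCBC. It assumes a trajectory is stored as T+1 observations and T actions and samples each goal uniformly from the states reached later in the same trajectory; the names `hindsight_relabel` and `GCBCSample` are hypothetical, and this is not necessarily the exact relabeling scheme used for GRIF.

```python
import random
from dataclasses import dataclass
from typing import Any, List, Sequence


@dataclass
class GCBCSample:
    observation: Any  # state s_t at which the action was taken
    action: Any       # action a_t recorded in the trajectory
    goal: Any         # a later state of the same trajectory, reused as the goal


def hindsight_relabel(observations: Sequence[Any], actions: Sequence[Any],
                      rng: random.Random) -> List[GCBCSample]:
    """Relabel one unlabeled trajectory with goals taken from its own future states.

    Assumes len(observations) == len(actions) + 1, i.e. states s_0..s_T and
    actions a_0..a_{T-1}. For step t the goal is drawn uniformly from s_{t+1}..s_T.
    """
    if len(observations) != len(actions) + 1:
        raise ValueError("expected exactly one more observation than actions")
    samples = []
    for t, action in enumerate(actions):
        goal_index = rng.randint(t + 1, len(observations) - 1)  # inclusive bounds
        samples.append(GCBCSample(observations[t], action, observations[goal_index]))
    return samples
```

No human input is involved, so the same routine applies to data the robot collects autonomously; always choosing the final observation as the goal is a simpler variant of the same idea.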

However, goals are less intuitive for human users than natural language. In most cases, it is easier for a user to describe the task they want performed than to provide a goal image, which would likely require performing the task anyway to generate the image. By exposing a language interface for goal-conditioned policies, we can combine the strengths of both goal- and language-based task specification to enable generalist robots that can be easily commanded. Our method, discussed below, exposes such an interface, generalizes to diverse instructions and scenes using vision-language data, and improves its physical skills by digesting large unstructured robot datasets.

Goal representations for instruction following

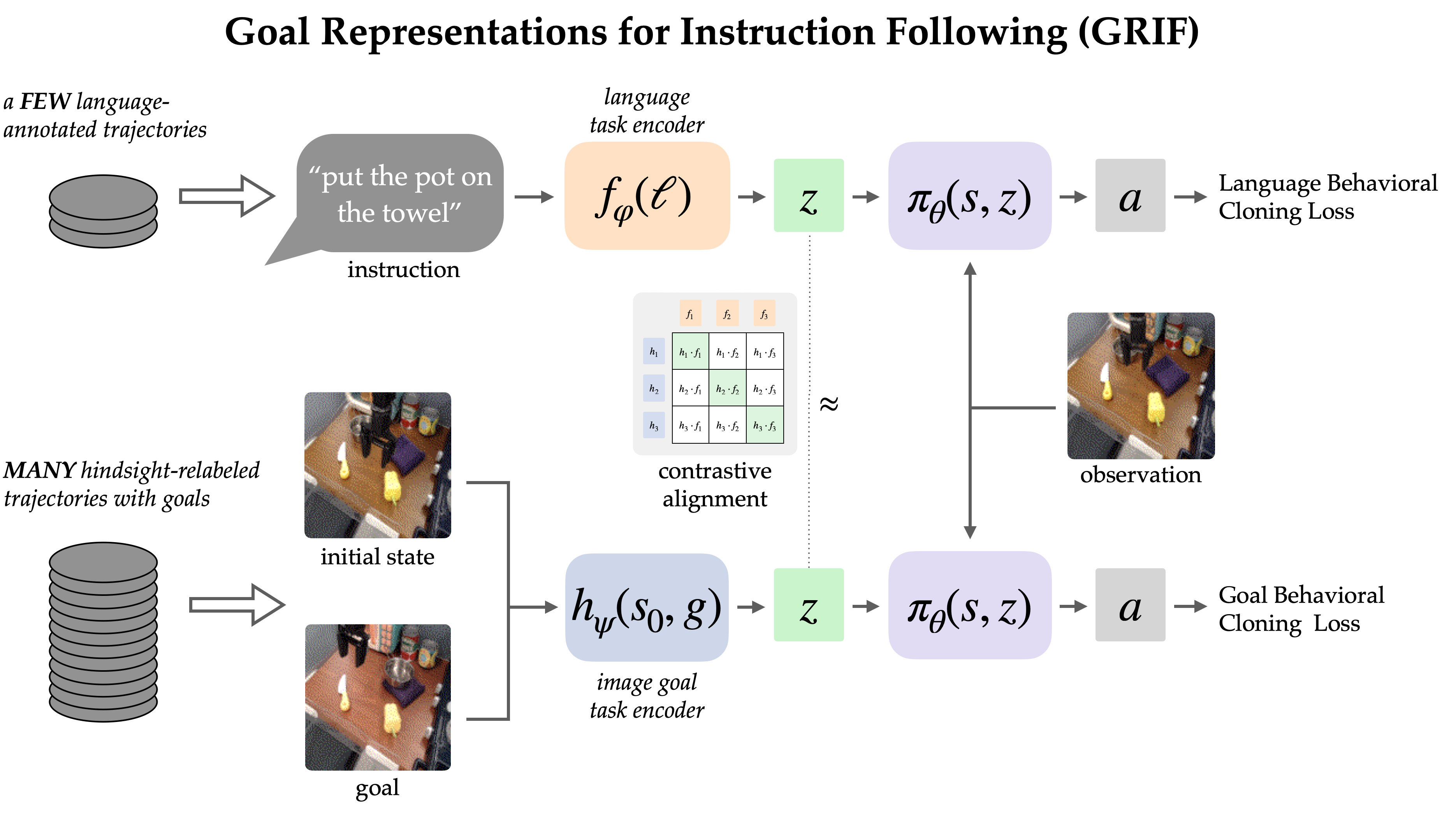

The GRIF model consists of a language encoder, a goal encoder, and a policy network. The encoders respectively map language instructions and goal images into a shared task representation space, which conditions the policy network when predicting actions. The model can effectively be conditioned on either language instructions or goal images to predict actions, but we are primarily using goal-conditioned training as a way to improve the language-conditioned use case.
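The architecture in the figure can be sketched as two encoders feeding one shared policy. This is a toy illustration under stated assumptions: observations and embeddings are plain Python lists, the goal encoder receives the current state together with the goal image (matching the state-goal pairing described in the alignment section), and `GRIFSketch` and its method names are hypothetical rather than taken from the GRIF code.

```python
from typing import Callable, List

Vector = List[float]
# Hypothetical component signatures; in GRIF these are learned networks.
LanguageEncoder = Callable[[str], Vector]          # instruction -> task embedding
GoalEncoder = Callable[[Vector, Vector], Vector]   # (state, goal image) -> task embedding
Policy = Callable[[Vector, Vector], Vector]        # (observation, task embedding) -> action


class GRIFSketch:
    """Two task encoders that share a single policy network."""

    def __init__(self, language_encoder: LanguageEncoder,
                 goal_encoder: GoalEncoder, policy: Policy) -> None:
        self.language_encoder = language_encoder
        self.goal_encoder = goal_encoder
        self.policy = policy  # the same policy serves both conditioning modes

    def act_from_language(self, observation: Vector, instruction: str) -> Vector:
        # Language-conditioned path: embed the instruction, then query the policy.
        return self.policy(observation, self.language_encoder(instruction))

    def act_from_goal(self, observation: Vector, goal: Vector) -> Vector:
        # Goal-conditioned path: embed the (state, goal) pair, then query the same policy.
        return self.policy(observation, self.goal_encoder(observation, goal))
```

Because both methods call the same `policy` object, whatever it learns from goal-conditioned data is also available when it is conditioned on language, to the extent that the two encoders place matching tasks close together in the shared space.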

Our approach, Goal Representations for Instruction Following (GRIF), jointly trains a language- and a goal-conditioned policy with aligned task representations. Our key insight is that these representations, aligned across the language and goal modalities, enable us to effectively combine the benefits of goal-conditioned learning with a language-conditioned policy. The learned policies are then able to generalize across language and scenes after training on mostly unlabeled demonstration data.

We trained GRIF on a version of the Bridge-v2 dataset containing 7k labeled demonstration trajectories and 47k unlabeled ones in a kitchen manipulation setting. Since language labels for trajectories in this dataset have to be annotated manually by humans, being able to directly use the 47k trajectories without annotation significantly improves efficiency.

To learn from both types of data, GRIF is trained jointly with language-conditioned behavioral cloning (LCBC) and goal-conditioned behavioral cloning (GCBC). The labeled dataset contains both language and goal task specifications, so we use it to supervise both the language- and goal-conditioned predictions (i.e. LCBC and GCBC). The unlabeled dataset contains only goals and is used for GCBC. The difference between LCBC and GCBC is just a matter of selecting the task representation from the corresponding encoder, which is passed into a shared policy network to predict actions.
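The joint objective can be sketched as follows. This is a minimal illustration that assumes a squared-error action loss and an unweighted sum of the two terms; the actual method may use a different action likelihood and weighting. The function and type names are hypothetical, and the encoders and policy are passed in as plain callables.

```python
from statistics import fmean
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
LabeledExample = Tuple[Vector, Vector, str, Vector]  # (observation, goal, instruction, expert action)
UnlabeledExample = Tuple[Vector, Vector, Vector]     # (observation, goal, expert action)


def squared_error(pred: Sequence[float], target: Sequence[float]) -> float:
    """Stand-in action loss (assumption): sum of squared differences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target))


def joint_bc_loss(policy: Callable[[Vector, Vector], Vector],
                  language_encoder: Callable[[str], Vector],
                  goal_encoder: Callable[[Vector, Vector], Vector],
                  labeled: Sequence[LabeledExample],
                  unlabeled: Sequence[UnlabeledExample]) -> Tuple[float, float, float]:
    """Return (LCBC loss, GCBC loss, their sum) for one pair of batches."""
    # LCBC: labeled data conditions the shared policy on the instruction embedding.
    lcbc = fmean(squared_error(policy(obs, language_encoder(text)), act)
                 for obs, _, text, act in labeled)
    # GCBC: labeled and unlabeled data condition the same policy on the goal embedding.
    gcbc_terms = [squared_error(policy(obs, goal_encoder(obs, goal)), act)
                  for obs, goal, _, act in labeled]
    gcbc_terms += [squared_error(policy(obs, goal_encoder(obs, goal)), act)
                   for obs, goal, act in unlabeled]
    gcbc = fmean(gcbc_terms)
    return lcbc, gcbc, lcbc + gcbc
```

Only the encoder differs between the two terms: the same `policy` callable appears in both, while the unlabeled batch contributes only to the GCBC term.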

By sharing the policy network, we can expect some improvement from using the unlabeled dataset for goal-conditioned training. However, GRIF enables much stronger transfer between the two modalities by recognizing that some language instructions and goal images specify the same behavior. In particular, we exploit this structure by requiring that language and goal representations be similar for the same semantic task. Assuming this structure holds, unlabeled data can also benefit the language-conditioned policy, since the goal representation approximates that of the missing instruction.

Alignment through contrastive learning

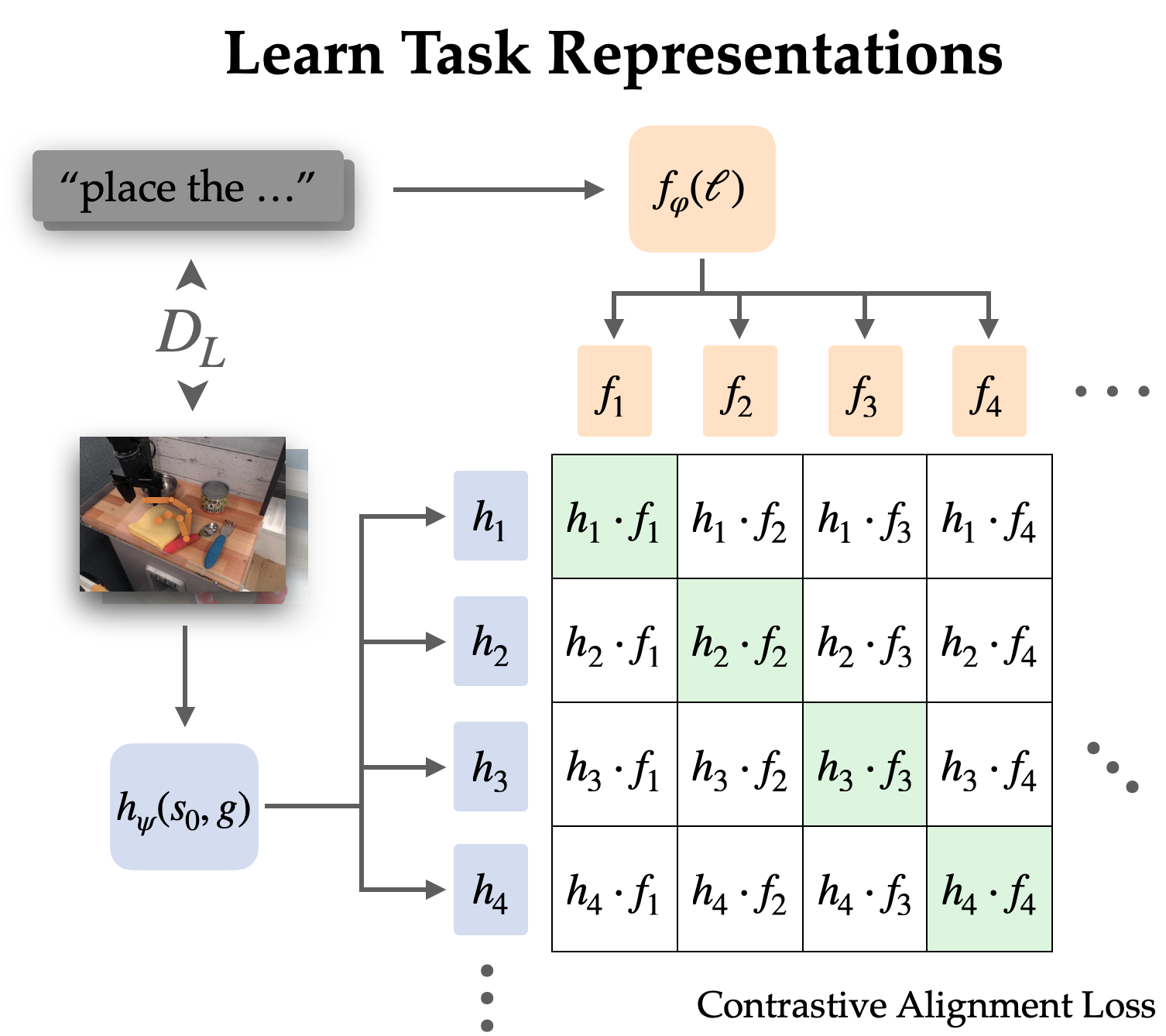

We explicitly align representations between goal-conditioned and language-conditioned tasks on the labeled dataset through contrastive learning.

Since language often describes relative change, we choose to align representations of state-goal pairs with the language instruction (as opposed to aligning just the goal with language). Empirically, this also makes the representations easier to learn, since they can omit most information in the images and focus on the change from state to goal.

We learn this alignment structure through an InfoNCE objective on instructions and images from the labeled dataset. We train dual image and text encoders by doing contrastive learning on matching pairs of language and goal representations. The objective encourages high similarity between representations of the same task and low similarity for others, where the negative examples are sampled from other trajectories.
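A minimal InfoNCE sketch in pure Python is below. It assumes row i of each batch pairs a (state, goal) task embedding with the embedding of its own instruction, so every other row serves as a negative. Cosine similarity, the temperature of 0.1, and averaging the loss over both directions (as CLIP does) are illustrative assumptions rather than reported GRIF settings.

```python
import math
from typing import List, Sequence

Vector = List[float]


def normalize(v: Sequence[float]) -> Vector:
    """Scale v to unit length (small epsilon guards against a zero vector)."""
    norm = max(math.sqrt(sum(x * x for x in v)), 1e-12)
    return [x / norm for x in v]


def cross_entropy_on_diagonal(logits: List[Vector]) -> float:
    """Mean of -log softmax(row i)[i]: row i's positive sits on the diagonal."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def info_nce(task_embeddings: Sequence[Vector], language_embeddings: Sequence[Vector],
             temperature: float = 0.1) -> float:
    """Symmetric InfoNCE over a batch of matched (task, instruction) embedding pairs."""
    z_task = [normalize(v) for v in task_embeddings]
    z_lang = [normalize(v) for v in language_embeddings]
    # logits[i][j] = cosine similarity of task i and instruction j, divided by temperature.
    logits = [[sum(a * b for a, b in zip(t, l)) / temperature for l in z_lang]
              for t in z_task]
    transposed = [list(col) for col in zip(*logits)]  # instruction -> task direction
    return 0.5 * (cross_entropy_on_diagonal(logits) + cross_entropy_on_diagonal(transposed))
```

When matched pairs point in the same direction the loss approaches zero, and swapping instructions between rows makes it large; this is the pressure that pulls representations of the same task together and pushes different tasks apart.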

When using naive negative sampling (uniformly from the rest of the dataset), the learned representations often ignored the actual task and simply aligned instructions and goals that referred to the same scenes. To use the policy in the real world, it is not very useful to associate language with a scene; rather, we need the representation to disambiguate between different tasks in the same scene. Thus, we use a hard negative sampling strategy, where up to half the negatives are sampled from different trajectories in the same scene.
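The hard negative strategy can be sketched per anchor trajectory as below. This is a simplified, hypothetical helper: it draws up to half of an anchor's negatives from other trajectories in the same scene and fills the rest by naive uniform sampling. An in-batch implementation would instead group same-scene trajectories together when assembling each contrastive batch.

```python
import random
from typing import List, Sequence


def sample_negatives(anchor: int, scene_of: Sequence[str], num_negatives: int,
                     rng: random.Random) -> List[int]:
    """Pick negative trajectory indices for one anchor trajectory (hypothetical helper).

    scene_of[i] is the scene label of trajectory i. Up to half of the negatives are
    "hard": other trajectories recorded in the anchor's scene. The rest are drawn
    uniformly from all remaining trajectories, as in naive sampling.
    """
    same_scene = [i for i, scene in enumerate(scene_of)
                  if scene == scene_of[anchor] and i != anchor]
    num_hard = min(num_negatives // 2, len(same_scene))
    hard = rng.sample(same_scene, num_hard)
    excluded = set(hard) | {anchor}  # never reuse the anchor or pick a negative twice
    pool = [i for i in range(len(scene_of)) if i not in excluded]
    easy = rng.sample(pool, num_negatives - num_hard)
    return hard + easy
```

The helper assumes there are enough other trajectories to fill the request; otherwise `random.Random.sample` raises `ValueError`.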

Naturally, this contrastive learning setup invites the use of pre-trained vision-language models like CLIP. They demonstrate effective zero-shot and few-shot generalization for vision-language tasks, and offer a way to incorporate knowledge from internet-scale pre-training. However, most vision-language models are designed to align a single static image with its caption, without the ability to understand changes in the environment, and they perform poorly when they must attend to a single object in a cluttered scene.

To address these issues, we devise a mechanism to adapt and fine-tune CLIP for aligning task representations. We modify the CLIP architecture so that it can operate on a pair of images combined with early fusion (stacked channel-wise). This turns out to be a capable initialization for encoding pairs of state and goal images, and one that is particularly good at preserving the pre-training benefits from CLIP.
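Early fusion can be sketched in two parts: stacking the state and goal images into one 6-channel input, and widening the first (patch-embedding) layer of a pretrained 3-channel encoder to accept it. The stacking follows the description above; the weight initialization shown is a hypothetical heuristic, not necessarily the one used for GRIF. It copies each pretrained RGB filter for both halves and scales it by 0.5, so that identical state and goal images reproduce the original layer's response. Images and weights are nested Python lists for illustration.

```python
from typing import List

Image = List[List[List[float]]]   # [height][width][channels]
Kernel = List[List[float]]        # [kernel_height][kernel_width]
ConvWeight = List[List[Kernel]]   # [out_channels][in_channels][kh][kw]


def early_fuse(state: Image, goal: Image) -> Image:
    """Stack state and goal channel-wise: two H x W x 3 images -> one H x W x 6 input."""
    if len(state) != len(goal) or len(state[0]) != len(goal[0]):
        raise ValueError("state and goal must have the same spatial size")
    # List concatenation appends the goal pixel's channels after the state pixel's.
    return [[s_px + g_px for s_px, g_px in zip(s_row, g_row)]
            for s_row, g_row in zip(state, goal)]


def inflate_first_layer(weight: ConvWeight) -> ConvWeight:
    """Hypothetical init: widen a pretrained 3-channel first layer to 6 input channels.

    Each pretrained filter is duplicated (state half, goal half) and scaled by 0.5.
    """
    def half(kernel: Kernel) -> Kernel:
        return [[0.5 * x for x in row] for row in kernel]

    return [[half(k) for k in per_out] + [half(k) for k in per_out] for per_out in weight]
```

The halving is one way to keep activations on the scale the pretrained network expects; other initializations (for example, zeroing the goal half) would also be plausible choices.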

Robot policy results

For our main result, we evaluate the GRIF policy in the real world on 15 tasks across 3 scenes. The instructions are chosen to be a mix of ones that are well represented in the training data and novel ones that require some degree of compositional generalization. One of the scenes also features an unseen combination of objects.

We compare GRIF against plain LCBC and stronger baselines inspired by prior work like LangLfP and BC-Z. LLfP, our LangLfP-inspired baseline, corresponds to jointly training with LCBC and GCBC. BC-Z is an adaptation of the namesake method to our setting, where we train on LCBC, GCBC, and a simple alignment term. It optimizes the cosine distance loss between the task representations and does not use image-language pre-training.
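For comparison with the contrastive objective, the BC-Z-style alignment term can be sketched as a mean cosine distance between matched task and instruction embeddings. This is an illustrative reconstruction from the one-line description above; the function names are hypothetical, and the baseline's exact formulation may differ.

```python
import math
from statistics import fmean
from typing import List, Sequence

Vector = List[float]


def cosine_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """1 - cos(angle between u and v): 0 for parallel, 1 for orthogonal, 2 for opposite."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / norm


def cosine_alignment_loss(task_embeddings: Sequence[Vector],
                          language_embeddings: Sequence[Vector]) -> float:
    """Mean cosine distance over matched (task, instruction) pairs; no negatives involved."""
    return fmean(cosine_distance(t, l)
                 for t, l in zip(task_embeddings, language_embeddings))
```

As sketched, this term only pulls matched pairs together; unlike InfoNCE with hard negatives, nothing in it explicitly pushes apart the representations of different tasks in the same scene.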

The policies were susceptible to two main failure modes. They can fail to understand the language instruction, which results in them attempting another task or performing no useful actions at all. When language grounding is not robust, policies might even start an unintended task after having completed the right one, since the original instruction is out of context.

Examples of grounding failures

“put the mushroom in the metal pot”

“put the spoon on the towel”

“put the yellow bell pepper on the cloth”

“put the yellow bell pepper on the cloth”

The other failure mode is failing to manipulate objects. This can be due to missing a grasp, moving imprecisely, or releasing objects at the wrong time. We note that these are not inherent shortcomings of the robot setup, as a GCBC policy trained on the entire dataset can consistently succeed at manipulation. Rather, this failure mode generally indicates an ineffectiveness in leveraging goal-conditioned data.

Examples of manipulation failures

“move the bell pepper to the left of the table”

“put the bell pepper in the pan”

“move the towel next to the microwave”

Comparing the baselines, each suffered from these two failure modes to different extents. LCBC relies solely on the small labeled trajectory dataset, and its poor manipulation capability prevents it from completing any tasks. LLfP jointly trains the policy on labeled and unlabeled data and shows significantly improved manipulation capability compared to LCBC. It achieves reasonable success rates for common instructions, but fails to ground more complex instructions. BC-Z's alignment strategy also improves manipulation capability, likely because alignment improves the transfer between modalities. However, without external vision-language data sources, it still struggles to generalize to new instructions.

GRIF shows the best generalization while also having strong manipulation capabilities. It is able to ground the language instructions and carry out the task even when many distinct tasks are possible in the scene. We show some rollouts and the corresponding instructions below.

Policy Rollouts from GRIF

“move the pan to the front”

“put the bell pepper in the pan”

“put the knife on the purple cloth”

“put the spoon on the towel”

Conclusion

GRIF enables a robot to utilize large amounts of unlabeled trajectory data to learn goal-conditioned policies, while providing a “language interface” to these policies via aligned language-goal task representations. In contrast to prior language-image alignment methods, our representations align changes in state to language, which we show leads to significant improvements over standard CLIP-style image-language alignment objectives. Our experiments demonstrate that our approach can effectively leverage unlabeled robot trajectories, with large improvements in performance over baselines and methods that only use the language-annotated data.

Our method has a number of limitations that could be addressed in future work. GRIF is not well suited for tasks where instructions say more about how to do the task than what to do (e.g., “pour the water slowly”); such qualitative instructions might require other types of alignment losses that consider the intermediate steps of task execution. GRIF also assumes that all language grounding comes from the fully annotated portion of our dataset or from a pre-trained VLM. An exciting direction for future work would be to extend our alignment loss to utilize human video data and learn rich semantics from Internet-scale data. Such an approach could then use this data to improve grounding on language outside the robot dataset and enable broadly generalizable robot policies that can follow user instructions.

This post is based on the following paper:

BAIR Blog is the official blog of the Berkeley Artificial Intelligence Research (BAIR) Lab.