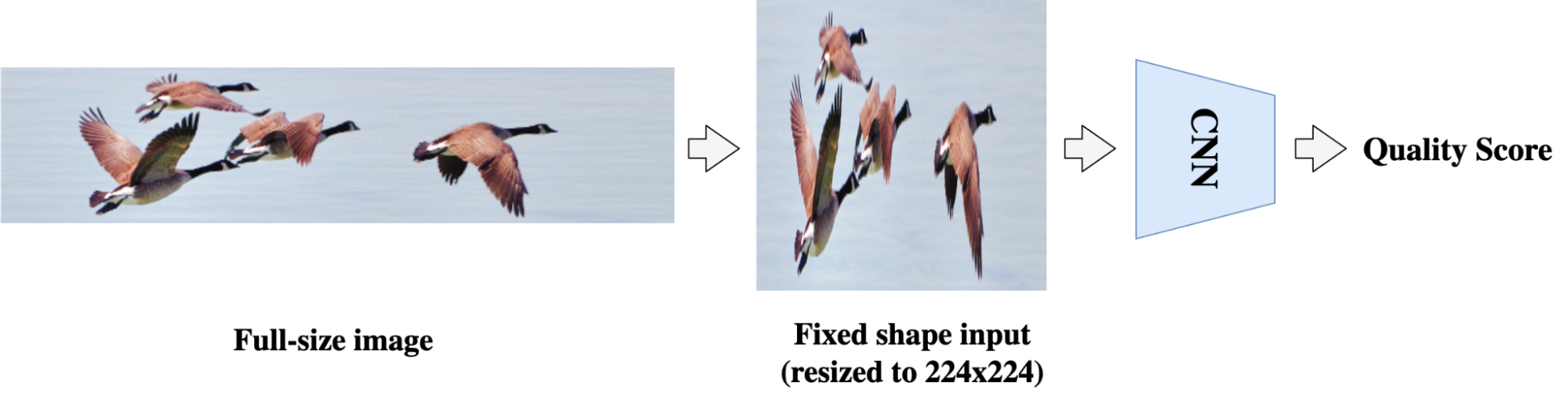

Understanding the aesthetic and technical quality of images is important for providing a better user visual experience. Image quality assessment (IQA) uses models to build a bridge between an image and a user’s subjective perception of its quality. In the deep learning era, many IQA approaches, such as NIMA, have achieved success by leveraging the power of convolutional neural networks (CNNs). However, CNN-based IQA models are often constrained by the fixed-size input requirement in batch training, i.e., the input images need to be resized or cropped to a fixed shape. This preprocessing is problematic for IQA because images can have very different aspect ratios and resolutions. Resizing and cropping can affect image composition or introduce distortions, thus changing the quality of the image.

| In CNN-based models, images need to be resized or cropped to a fixed shape for batch training. However, such preprocessing can alter the image aspect ratio and composition, thus impacting image quality. Original image used under CC BY 2.0 license. |

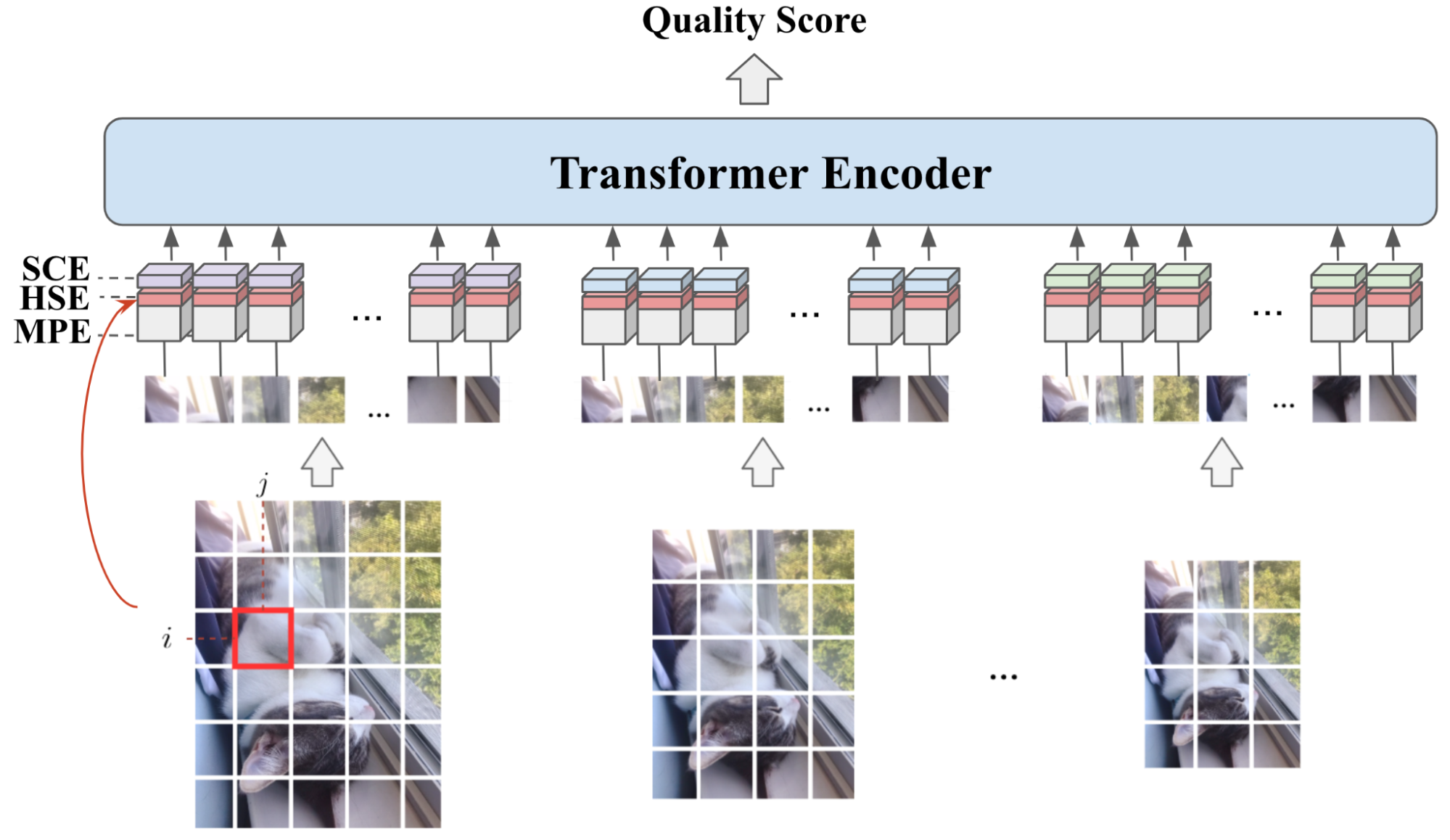

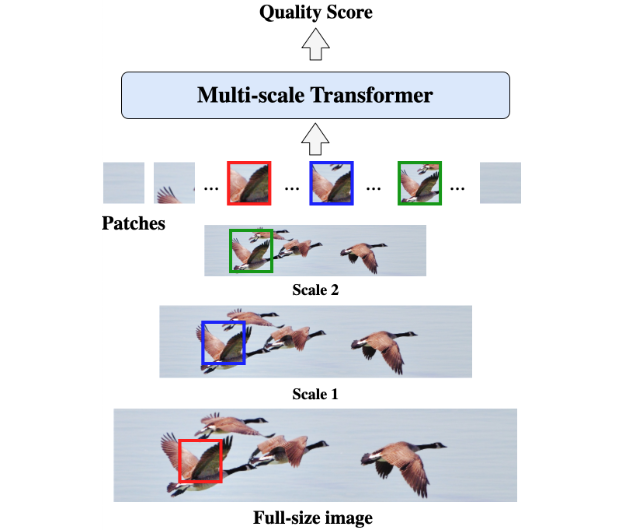

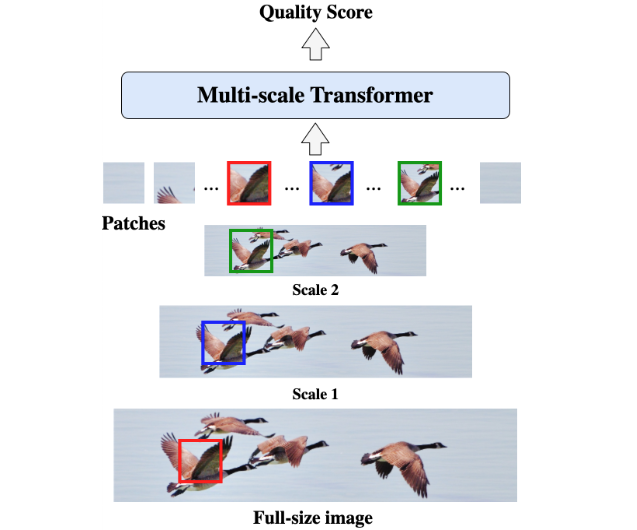

In “MUSIQ: Multi-scale Image Quality Transformer”, published at ICCV 2021, we propose a patch-based multi-scale image quality transformer (MUSIQ) to bypass the CNN constraints on fixed input size and predict the image quality effectively on native-resolution images. The MUSIQ model supports the processing of full-size image inputs with varying aspect ratios and resolutions and allows multi-scale feature extraction to capture image quality at different granularities. To support positional encoding in the multi-scale representation, we propose a novel hash-based 2D spatial embedding combined with an embedding that captures the image scaling. We apply MUSIQ on four large-scale IQA datasets, demonstrating consistent state-of-the-art results across three technical quality datasets (PaQ-2-PiQ, KonIQ-10k, and SPAQ) and comparable performance to that of state-of-the-art models on the aesthetic quality dataset AVA.

| The patch-based MUSIQ model can process the full-size image and extract multi-scale features, which better aligns with a person’s typical visual response. |

In the following figure, we show a sample of images, their MUSIQ score, and their mean opinion score (MOS) from multiple human raters in brackets. Scores range from 0 to 100, with 100 being the highest perceived quality. As the figure shows, MUSIQ predicts high scores for images with high aesthetic quality and high technical quality, and it predicts low scores for images that are not aesthetically pleasing (low aesthetic quality) or that contain visible distortions (low technical quality).

| Predicted MUSIQ score (and ground truth) on images from the KonIQ-10k dataset. Top: MUSIQ predicts high scores for high-quality images. Middle: MUSIQ predicts low scores for images with low aesthetic quality, such as images with poor composition or lighting. Bottom: MUSIQ predicts low scores for images with low technical quality, such as images with visible distortion artifacts (e.g., blurry, noisy). |

The Multi-scale Image Quality Transformer

MUSIQ tackles the challenge of learning IQA on full-size images. Unlike CNN models, which are often constrained to a fixed resolution, MUSIQ can handle inputs with arbitrary aspect ratios and resolutions.

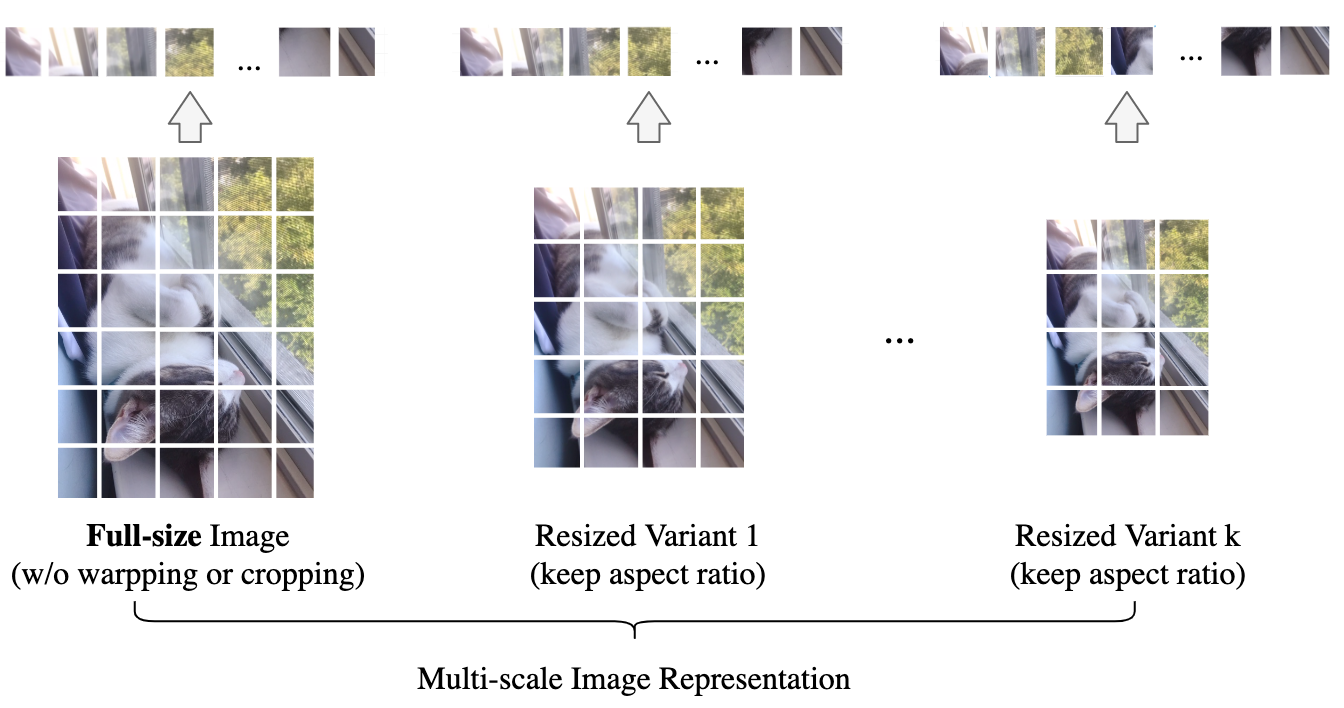

To accomplish this, we first make a multi-scale representation of the input image, containing the native resolution image and its resized variants. To preserve the image composition, we maintain its aspect ratio during resizing. After obtaining the pyramid of images, we then partition the images at different scales into fixed-size patches that are fed into the model.
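As a rough illustration of this step, the sketch below computes the shapes of an aspect-ratio-preserving image pyramid and the patch grid at each scale. The function names, the resized longer-side lengths (384 and 224), and the patch size of 32 are illustrative assumptions, not values stated in this post, and padding the border up to a whole patch is just one way to handle images whose sides are not multiples of the patch size.

```python
import math


def resize_keep_aspect(height, width, longer_side):
    """Return (h, w) scaled so the longer side equals `longer_side`.

    Both sides are scaled by the same factor, so the aspect ratio
    (and therefore the composition) is preserved up to rounding.
    """
    scale = longer_side / max(height, width)
    return max(1, round(height * scale)), max(1, round(width * scale))


def patch_grid(height, width, patch_size):
    """Number of patch rows and columns, assuming the border is padded
    up to a whole patch (an assumption made for this sketch)."""
    return math.ceil(height / patch_size), math.ceil(width / patch_size)


def multiscale_patch_grids(height, width, longer_sides=(384, 224), patch_size=32):
    """Describe the pyramid: the native image plus resized variants.

    Returns one (h, w, patch_rows, patch_cols) tuple per scale.
    `longer_sides` and `patch_size` are hypothetical defaults.
    """
    scales = [(height, width)]
    scales += [resize_keep_aspect(height, width, s) for s in longer_sides]
    return [(h, w) + patch_grid(h, w, patch_size) for (h, w) in scales]


if __name__ == "__main__":
    for h, w, rows, cols in multiscale_patch_grids(768, 1024):
        print(f"{h}x{w} image -> {rows}x{cols} patches")
```

Note that the number of patches differs per scale and per image, which is why the model needs a position encoding that does not assume a fixed grid.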

| Illustration of the multi-scale image representation in MUSIQ. |

Since patches are from images of varying resolutions, we need to effectively encode the multi-aspect-ratio multi-scale input into a sequence of tokens, capturing the pixel, spatial, and scale information. To achieve this, we design three encoding components in MUSIQ: 1) a patch encoding module to encode patches extracted from the multi-scale representation; 2) a novel hash-based spatial embedding module to encode the 2D spatial position of each patch; and 3) a learnable scale embedding to encode different scales. In this way, we can effectively encode the multi-scale input as a sequence of tokens, serving as the input to the Transformer encoder.
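To make the spatial and scale encodings more concrete, here is a minimal sketch of how each patch could be assigned lookup indices. The post does not spell out the hash function, so the proportional bucketing below (mapping a patch's row and column onto a fixed `grid_size` x `grid_size` table) is an assumption, as are the function names and the default `grid_size=10`. In a real model these indices would select learnable embedding vectors that are added to the patch encoding.

```python
def hashed_position(row, col, n_rows, n_cols, grid_size):
    """Map patch (row, col) of an n_rows x n_cols patch grid to a cell of a
    fixed grid_size x grid_size embedding table.

    Proportional bucketing is an assumed hash for this sketch: patches at the
    same relative location land in roughly the same cell, regardless of the
    image's resolution or aspect ratio. Because row < n_rows and col < n_cols,
    the results are always in [0, grid_size - 1].
    """
    t_row = (row * grid_size) // n_rows
    t_col = (col * grid_size) // n_cols
    return t_row, t_col


def token_indices(grids, grid_size=10):
    """Build (scale_id, t_row, t_col) for every patch at every scale.

    `grids` is a list of (n_rows, n_cols) patch grids, one per scale.
    scale_id would index a learnable scale embedding; (t_row, t_col) would
    index the hashed spatial embedding table.
    """
    tokens = []
    for scale_id, (n_rows, n_cols) in enumerate(grids):
        for r in range(n_rows):
            for c in range(n_cols):
                tokens.append((scale_id,) + hashed_position(r, c, n_rows, n_cols, grid_size))
    return tokens


if __name__ == "__main__":
    # Patch grids for a native-resolution image and one resized variant.
    tokens = token_indices([(24, 32), (9, 12)])
    print(len(tokens), tokens[0], tokens[-1])
```

With this scheme the table size is fixed in advance, while the sequence length still varies with the input image.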

To predict the final image quality score, we use the standard approach of prepending an additional learnable “classification token” (CLS). The CLS token state at the output of the Transformer encoder serves as the final image representation. We then add a fully connected layer on top to predict the image quality score. The figure below provides an overview of the MUSIQ model.

Since MUSIQ only changes the input encoding, it is compatible with any Transformer variant. To demonstrate the effectiveness of the proposed method, in our experiments we use the classic Transformer with a relatively lightweight setting so that the model size is comparable to ResNet-50.

Benchmark and Evaluation

To evaluate MUSIQ, we run experiments on multiple large-scale IQA datasets. On each dataset, we report the Spearman’s rank correlation coefficient (SRCC) and Pearson linear correlation coefficient (PLCC) between our model prediction and the human evaluators’ mean opinion score. SRCC and PLCC are correlation metrics ranging from -1 to 1. Higher PLCC and SRCC mean better alignment between model prediction and human evaluation. The graph below shows that MUSIQ outperforms other methods on PaQ-2-PiQ, KonIQ-10k, and SPAQ.
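For readers less familiar with these two metrics, the following is a small, dependency-free sketch of how they can be computed. PLCC measures linear agreement between predictions and MOS, while SRCC is the same Pearson formula applied to ranks, so it only measures whether the ordering agrees. The function names and the toy numbers are illustrative and are not taken from our evaluation code or results.

```python
import math


def pearson(x, y):
    """Pearson linear correlation coefficient (PLCC) of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (norm_x * norm_y)


def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation coefficient (SRCC): Pearson on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    predicted = [10, 20, 30, 40]  # toy model scores
    mos = [1, 4, 9, 16]           # toy mean opinion scores
    print("SRCC:", spearman(predicted, mos))
    print("PLCC:", pearson(predicted, mos))
```

In this toy example the ordering matches perfectly, so SRCC is 1, while PLCC is slightly lower because the relationship is monotonic but not linear.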

Notably, the PaQ-2-PiQ test set is entirely composed of large images with at least one dimension exceeding 640 pixels. This is very challenging for traditional deep learning approaches, which require resizing. MUSIQ outperforms previous methods by a large margin on the full-size test set, which verifies its robustness and effectiveness.

It is also worth mentioning that previous CNN-based methods often required sampling as many as 20 crops for each image during testing. This kind of multi-crop ensemble is a way to mitigate the fixed-shape constraint in CNN models. But since each crop is only a sub-view of the whole image, the ensemble is still an approximate approach. Moreover, CNN-based methods add extra inference cost for every crop and, because they sample different crops, they can introduce randomness in the result. In contrast, because MUSIQ takes the full-size image as input, it can directly learn the best aggregation of information across the full image and it only needs to run inference once.

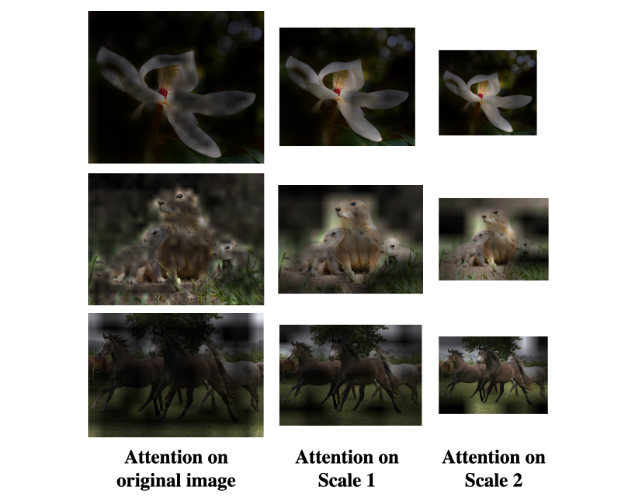

To further verify that the MUSIQ model captures different information at different scales, we visualize the attention weights on each image at different scales.

| Attention visualization from the output tokens to the multi-scale representation, including the original resolution image and two proportionally resized images. Brighter areas indicate higher attention, which means that these areas are more important for the model output. Images for illustration are taken from the AVA dataset. |

We observe that MUSIQ tends to focus on more detailed areas in the full, high-resolution images and on more global areas in the resized ones. For example, for the flower photo above, the model’s attention on the original image focuses on the petal details, and the attention shifts to the buds at lower resolutions. This shows that the model learns to capture image quality at different granularities.

Conclusion

We propose a multi-scale image quality transformer (MUSIQ), which can handle full-size image input with varying resolutions and aspect ratios. By transforming the input image to a multi-scale representation with both global and local views, the model can capture the image quality at different granularities. Although MUSIQ is designed for IQA, it can be applied to other scenarios where task labels are sensitive to image resolution and aspect ratio. The MUSIQ model and checkpoints are available at our GitHub repository.

Acknowledgements

This work is made possible through a collaboration spanning several teams across Google. We’d like to acknowledge contributions from Qifei Wang, Yilin Wang and Peyman Milanfar.