Marc Olson has been part of the team shaping Elastic Block Store (EBS) for over a decade. In that time, he’s helped to drive the dramatic evolution of EBS from a simple block storage service relying on shared drives to a massive network storage system that delivers over 140 trillion daily operations.

In this post, Marc provides a fascinating insider’s perspective on the journey of EBS. He shares hard-won lessons in areas such as queueing theory, the importance of comprehensive instrumentation, and the value of incrementalism versus radical changes. Most importantly, he emphasizes how constraints can often breed creative solutions. It’s an insightful look at how one of AWS’s foundational services has evolved to meet the needs of our customers (and the pace at which they’re innovating).

–W

Continuous reinvention: A brief history of block storage at AWS

I’ve built system software for most of my career, and before joining AWS it was mostly in the networking and security spaces. When I joined AWS nearly 13 years ago, I entered a new domain, storage, and stepped into a new challenge. Even back then the scale of AWS dwarfed anything I had worked on, but many of the same techniques I had picked up until that point remained applicable: distilling problems down to first principles, and using successive iteration to incrementally solve problems and improve performance.

If you look around at AWS services today, you’ll find a mature set of core building blocks, but it wasn’t always this way. EBS launched on August 20, 2008, nearly two years after EC2 became available in beta, with a simple idea: provide network attached block storage for EC2 instances. We had one or two storage experts, a few distributed systems folks, and a solid knowledge of computer systems and networks. How hard could it be? In retrospect, if we knew at the time how much we didn’t know, we may not have even started the project!

Since I’ve been at EBS, I’ve had the opportunity to be part of the team that’s evolved EBS from a product built using shared hard disk drives (HDDs), to one that is capable of delivering hundreds of thousands of IOPS (IO operations per second) to a single EC2 instance. It’s remarkable to reflect on this because EBS is capable of delivering more IOPS to a single instance today than it could deliver to an entire Availability Zone (AZ) in the early years on top of HDDs. Even more amazingly, today EBS in aggregate delivers over 140 trillion operations daily across a distributed SSD fleet. But we definitely didn’t do it overnight, or in one big bang, or even perfectly. When I started on the EBS team, I initially worked on the EBS client, which is the piece of software responsible for converting instance IO requests into EBS storage operations. Since then I’ve worked on almost every component of EBS and have been delighted to have had the opportunity to participate so directly in the evolution and growth of EBS.

As a storage system, EBS is a bit unique. It’s unique because our primary workload is system disks for EC2 instances, motivated by the hard disks that used to sit inside physical datacenter servers. A lot of storage services place durability as their primary design goal, and are willing to degrade performance or availability in order to protect bytes. EBS customers care about durability, and we provide the primitives to help them achieve high durability with io2 Block Express volumes and volume snapshots, but they also care a lot about the performance and availability of EBS volumes. EBS is so closely tied to EC2 as a storage primitive that the performance and availability of EBS volumes tends to translate almost directly to the performance and availability of the EC2 experience, and by extension the experience of running applications and services that are built using EC2. The story of EBS is the story of understanding and evolving performance in a very large-scale distributed system that spans layers from guest operating systems at the top, all the way down to custom SSD designs at the bottom. In this post I’d like to tell you about the journey that we’ve taken, including some memorable lessons that may be applicable to your systems. After all, systems performance is a complex and really challenging area, and it’s a complex language across many domains.

Queueing theory, briefly

Before we dive too deep, let’s take a step back and look at how computer systems interact with storage. The high-level basics haven’t changed through the years: a storage device is connected to a bus, which is connected to the CPU. The CPU queues requests that travel the bus to the device. The storage device either retrieves the data from CPU memory and (eventually) places it onto a durable substrate, or retrieves the data from the durable media and then transfers it to the CPU’s memory.

You can think of this like a bank. You walk into the bank with a deposit, but first you have to traverse a queue before you can speak with a bank teller who can help you with your transaction. In a perfect world, patrons enter the bank at exactly the rate at which their requests can be handled, and you never have to stand in a queue. But the real world isn’t perfect. The real world is asynchronous. It’s more likely that a few people enter the bank at the same time. Perhaps they have arrived on the same streetcar or train. When a group of people all walk into the bank at the same time, some of them are going to have to wait for the teller to process the transactions ahead of them.

As we think about the time to complete each transaction, and empty the queue, the average time waiting in line (latency) across all customers may look acceptable, but the first person in the queue had the best experience, while the last had a much longer delay. There are a number of things the bank can do to improve the experience for all customers. The bank could add more tellers to process more requests in parallel; it could rearrange the teller workflows so that each transaction takes less time, lowering both the total time and the average time; or it could create separate queues, either for latency-insensitive customers or to consolidate transactions that may be faster, to keep the queue short. But each of these options comes at an additional cost: hiring more tellers for a peak that may never occur, or adding more real estate to create separate queues. While imperfect, unless you have infinite resources, queues are necessary to absorb peak load.
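The bank analogy can be made concrete with a small simulation. This is only a toy sketch: the function names, the fixed one-unit service time, and the ten-customer workloads are assumptions chosen for illustration, not a model of any real storage stack. It compares evenly spaced arrivals with a burst, and shows what a second teller does to the burst.

```python
def waits(arrivals, service_time, tellers=1):
    """Return how long each customer stands in line before service starts."""
    free_at = [0.0] * tellers  # time at which each teller next becomes free
    result = []
    for t in sorted(arrivals):
        i = min(range(tellers), key=free_at.__getitem__)  # earliest-free teller
        start = max(t, free_at[i])
        result.append(start - t)
        free_at[i] = start + service_time
    return result


def mean_and_max(w):
    """Average wait and worst-case wait for a list of waits."""
    return sum(w) / len(w), max(w)


# Ten customers arriving exactly as fast as one teller can serve them.
even = mean_and_max(waits([float(i) for i in range(10)], service_time=1.0))
# The same ten customers stepping off one streetcar together.
burst = mean_and_max(waits([0.0] * 10, service_time=1.0))
# The same burst with a second teller.
burst_two_tellers = mean_and_max(waits([0.0] * 10, service_time=1.0, tellers=2))

print(even, burst, burst_two_tellers)
```

In this toy model the evenly spaced customers never wait, the burst produces an average wait of 4.5 units with the last customer waiting 9, and a second teller cuts the worst case to 4. The average hides how bad the tail is, which is the point the analogy is making.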

In network storage systems, we have several queues in the stack, including those between the operating system kernel and the storage adapter, the host storage adapter to the storage fabric, the target storage adapter, and the storage media. In legacy network storage systems, there may be different vendors for each component, and different ways that they think about servicing the queue. You may be using a dedicated, lossless network fabric like fiber channel, or using iSCSI or NFS over TCP, either with the operating system network stack or a custom driver. In either case, tuning the storage network often takes specialized knowledge, separate from tuning the application or the storage media.

When we first built EBS in 2008, the storage market was largely HDDs, and the latency of our service was dominated by the latency of this storage media. Last year, Andy Warfield went in-depth about the fascinating mechanical engineering behind HDDs. As an engineer, I still marvel at everything that goes into a hard drive, but at the end of the day they are mechanical devices and physics limits their performance. There’s a stack of platters that are spinning at high velocity. These platters have tracks that contain the data. Relative to the size of a track (<100 nanometers), there’s a large arm that swings back and forth to find the right track to read or write your data. Because of the physics involved, the IOPS performance of a hard drive has remained relatively constant for the last few decades at approximately 120-150 operations per second, or 6-8 ms average IO latency. One of the biggest challenges with HDDs is that tail latencies can easily drift into the hundreds of milliseconds with the impact of queueing and command reordering in the drive.

We didn’t have to worry much about the network getting in the way since end-to-end EBS latency was dominated by HDDs and measured in the 10s of milliseconds. Even our early data center networks were beefy enough to handle our users’ latency and throughput expectations. The addition of 10s of microseconds on the network was a small fraction of overall latency.

Compounding this latency, hard drive performance is also variable depending on the other transactions in the queue. Smaller requests that are scattered randomly on the media take longer to find and access than several large requests that are all next to each other. This random performance led to wildly inconsistent behavior. Early on, we knew that we needed to spread customers across many disks to achieve reasonable performance. This had a benefit: it dropped the peak outlier latency for the hottest workloads. Unfortunately, it also spread the inconsistent behavior out so that it impacted many customers.

When one workload impacts another, we call this a “noisy neighbor.” Noisy neighbors turned out to be a critical problem for the business. As AWS evolved, we learned that we had to focus ruthlessly on a high-quality customer experience, and that inevitably meant that we needed to achieve strong performance isolation to avoid noisy neighbors causing interference with other customer workloads.

At the scale of AWS, we often run into challenges that are hard and complex due to the scale and breadth of our systems, and our focus on maintaining the customer experience. Surprisingly, the fixes are often quite simple once you deeply understand the system, and have enormous impact due to the scaling factors at play. We were able to make some improvements by changing scheduling algorithms to the drives and balancing customer workloads across even more spindles. But all of this only resulted in small incremental gains. We weren’t really hitting the breakthrough that truly eliminated noisy neighbors. Customer workloads were too unpredictable to achieve the consistency we knew they needed. We needed to explore something completely different.

Set long term goals, but don’t be afraid to improve incrementally

Around the time I started at AWS in 2011, solid state disks (SSDs) became more mainstream, and were available in sizes that started to make them attractive to us. In an SSD, there is no physical arm to move to retrieve data, so random requests are nearly as fast as sequential requests, and there are multiple channels between the controller and NAND chips to get to the data. If we revisit the bank example from earlier, replacing an HDD with an SSD is like building a bank the size of a football stadium and staffing it with superhumans that can complete transactions orders of magnitude faster. A year later we started using SSDs, and haven’t looked back.

We started with a small, but meaningful milestone: we built a new storage server type built on SSDs, and a new EBS volume type called Provisioned IOPS. Launching a new volume type is no small task, and it also limits the workloads that can take advantage of it. For EBS, there was an immediate improvement, but it wasn’t everything we expected.

We thought that just dropping SSDs in to replace HDDs would solve almost all of our problems, and it certainly did address the problems that came from the mechanics of hard drives. But what surprised us was that the system didn’t improve nearly as much as we had hoped and noisy neighbors weren’t automatically fixed. We had to turn our attention to the rest of our stack, the network and our software, that the improved storage media suddenly put a spotlight on.

Even though we needed to make these changes, we went ahead and launched in August 2012 with a maximum of 1,000 IOPS, 10x better than existing EBS standard volumes, and ~2-3 ms average latency, a 5-10x improvement with significantly improved outlier control. Our customers were excited for an EBS volume that they could begin to build their mission critical applications on, but we still weren’t satisfied and we realized that the performance engineering work in our system was really just beginning. But to do that, we had to measure our system.

If you can’t measure it, you can’t manage it

At this point in EBS’s history (2012), we only had rudimentary telemetry. To know what to fix, we had to know what was broken, and then prioritize those fixes based on effort and rewards. Our first step was to build a method to instrument every IO at multiple points in every subsystem: in our client initiator, network stack, storage durability engine, and in our operating system. In addition to monitoring customer workloads, we also built a set of canary tests that run continuously and allowed us to monitor the impact of changes, both positive and negative, under well-known workloads.
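To illustrate what instrumenting an IO at multiple points can look like, here is a minimal sketch. It is not the EBS telemetry system: the stage names, the microsecond timestamps, and the nearest-rank percentile are all assumptions made for the example. Each IO carries an ordered list of timestamps recorded as it enters each stage, and the per-stage durations are then summarized as a median and a 99th percentile.

```python
def stage_durations(marks):
    """Turn ordered (stage, entry_time_us) marks into per-stage durations.

    The final mark is ("done", completion_time_us); it only closes the last stage.
    """
    return {stage: nxt - t for (stage, t), (_, nxt) in zip(marks, marks[1:])}


def percentile(values, p):
    """Nearest-rank percentile for an integer p between 1 and 100."""
    ordered = sorted(values)
    rank = max(1, (p * len(ordered) + 99) // 100)  # integer ceil(p * n / 100)
    return ordered[rank - 1]


def summarize(traces):
    """Collect durations per stage across IOs and report (p50, p99) for each."""
    per_stage = {}
    for marks in traces:
        for stage, duration in stage_durations(marks).items():
            per_stage.setdefault(stage, []).append(duration)
    return {s: (percentile(v, 50), percentile(v, 99)) for s, v in per_stage.items()}


# Three hypothetical IOs; the third one stalls in the storage stage.
traces = [
    [("client", 0), ("network", 100), ("storage", 150), ("done", 1150)],
    [("client", 0), ("network", 120), ("storage", 180), ("done", 900)],
    [("client", 0), ("network", 90), ("storage", 140), ("done", 9140)],
]
report = summarize(traces)
print(report)
```

With per-stage numbers like these, the outlier shows up in one stage's p99 rather than being blended into a single end-to-end average, which is what makes it possible to decide where to invest first.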

With our new telemetry we identified a few major areas for initial investment. We knew we needed to reduce the number of queues in the entire system. Additionally, the Xen hypervisor had served us well in EC2, but as a general-purpose hypervisor, it had different design goals and many more features than we needed for EC2. We suspected that with some investment we could reduce complexity of the IO path in the hypervisor, leading to improved performance. Moreover, we needed to optimize the network software, and in our core durability engine we needed to do a lot of work organizationally and in code, including on-disk data layout, cache line optimization, and fully embracing an asynchronous programming model.

A really consistent lesson at AWS is that system performance issues almost universally span a lot of layers in our hardware and software stack, but even great engineers tend to have jobs that focus their attention on specific narrower areas. While the much celebrated ideal of a “full stack engineer” is valuable, in deep and complex systems it’s often even more valuable to create cohorts of experts who can collaborate and get really creative across the entire stack and all their individual areas of depth.

By this point, we already had separate teams for the storage server and for the client, so we were able to focus on these two areas in parallel. We also enlisted the help of the EC2 hypervisor engineers and formed a cross-AWS network performance cohort. We started to build a blueprint of both short-term, tactical fixes and longer-term architectural changes.

Divide and conquer

When I was an undergraduate student, while I loved most of my classes, there were a couple that I had a love-hate relationship with. “Algorithms” was taught at a graduate level at my university for both undergraduates and graduates. I found the coursework intense, but I eventually fell in love with the topic, and Introduction to Algorithms, commonly referred to as CLR, is one of the few textbooks I retained, and still occasionally reference. What I didn’t realize until I joined Amazon, and what seems obvious in hindsight, is that you can design an organization much the same way you can design a software system. Different algorithms have different benefits and tradeoffs in how your organization functions. Where practical, Amazon chooses a divide and conquer approach, and keeps teams small and focused on a self-contained component with well-defined APIs.

This works well when applied to components of a retail website and control plane systems, but it’s less intuitive how you could build a high-performance data plane this way, and at the same time improve performance. In the EBS storage server, we reorganized our monolithic development team into small teams focused on specific areas, such as data replication, durability, and snapshot hydration. Each team focused on their unique challenges, dividing the performance optimization into smaller sized bites. These teams are able to iterate and commit their changes independently, which is made possible by rigorous testing that we’ve built up over time. It was important for us to make continual progress for our customers, so we started with a blueprint for where we wanted to go, and then began the work of separating out components while deploying incremental changes.

The best part of incremental delivery is that you can make a change and observe its impact before making the next change. If something doesn’t work like you expected, then it’s easy to unwind it and go in a different direction. In our case, the blueprint that we laid out in 2013 ended up looking nothing like what EBS looks like today, but it gave us a direction to start moving toward. For example, back then we never would have imagined that Amazon would one day build its own SSDs, with a technology stack that could be tailored specifically to the needs of EBS.

Always question your assumptions!

Challenging our assumptions led to improvements in every single part of the stack.

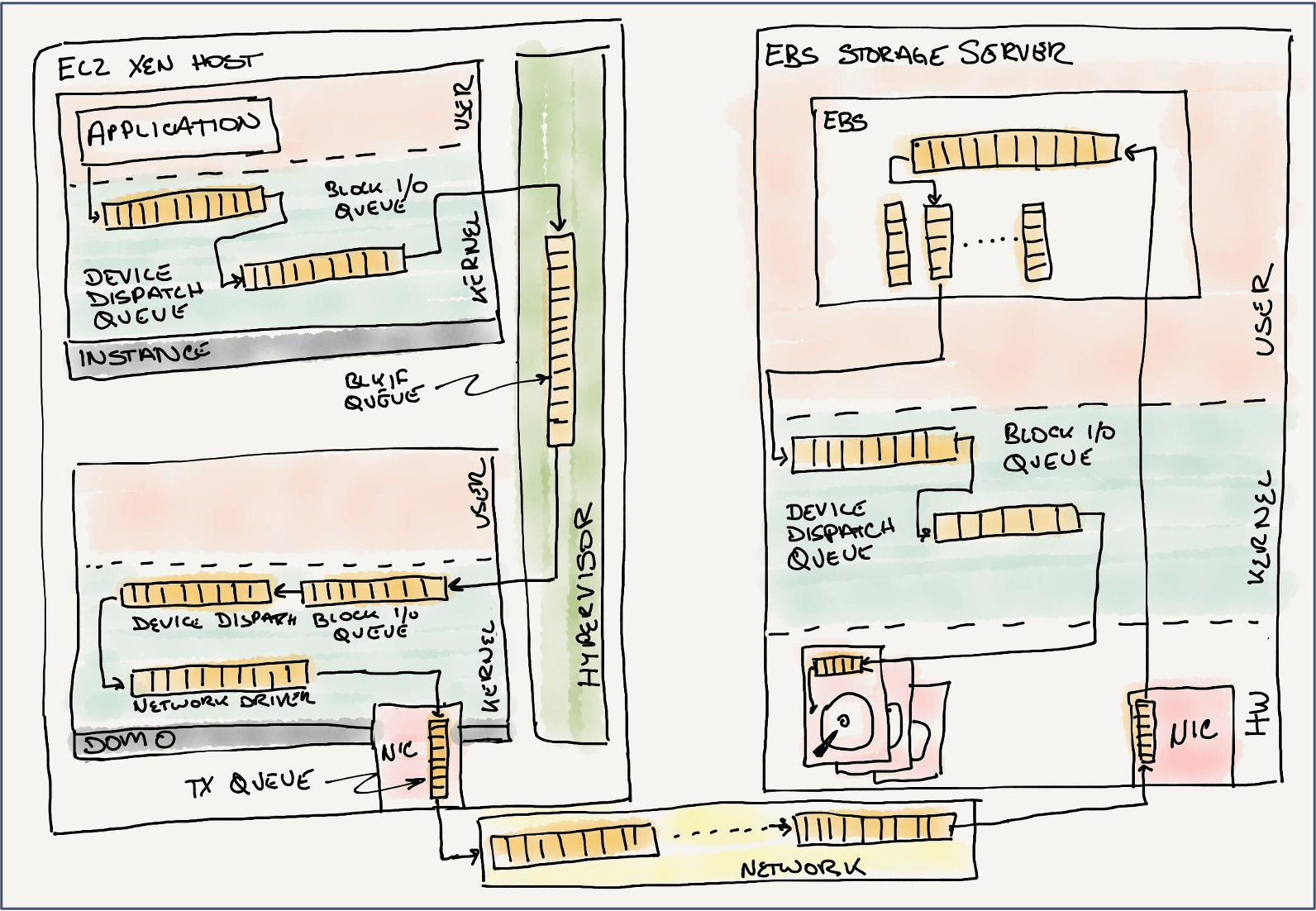

We started with software virtualization. Until late 2017 all EC2 instances ran on the Xen hypervisor. With devices in Xen, there is a ring queue setup that allows guest instances, or domains, to share information with a privileged driver domain (dom0) for the purposes of IO and other emulated devices. The EBS client ran in dom0 as a kernel block device. If we follow an IO request from the instance, just to get off of the EC2 host there are many queues: the instance block device queue, the Xen ring, the dom0 kernel block device queue, and the EBS client network queue. In most systems, performance issues are compounding, and it’s helpful to focus on components in isolation.

One of the first things that we did was to write several “loopback” devices so that we could isolate each queue to gauge the impact of the Xen ring, the dom0 block device stack, and the network. We were almost immediately surprised that with almost no latency in the dom0 device driver, when multiple instances tried to drive IO, they would interact with each other enough that the goodput of the entire system would slow down. We had found another noisy neighbor! Embarrassingly, we had launched EC2 with the Xen defaults for the number of block device queues and queue entries, which were set many years prior based on the limited storage hardware that was available to the Cambridge lab building Xen. This was very unexpected, especially when we realized that it limited us to only 64 outstanding IO requests for an entire host, not per device, which was certainly not enough for our most demanding workloads.
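A back-of-the-envelope calculation shows why a host-wide cap on outstanding requests matters. The sketch below applies Little's law (throughput equals concurrency divided by latency). The helper name `max_iops` is made up for this example, and the latency values are illustrative: 8 ms is the upper end of the hard drive latency quoted earlier, 2.5 ms sits in the 2-3 ms range from the 2012 launch, and 500 microseconds is purely an assumption.

```python
def max_iops(outstanding_ios, latency_us):
    """Little's law: sustained throughput = requests in flight / latency."""
    return outstanding_ios * 1_000_000 / latency_us


# One request at a time against a hard drive with 8 ms average latency.
hdd_iops = max_iops(1, 8_000)

# A cap of 64 outstanding requests shared by the whole host at 2.5 ms per IO.
host_iops_at_2500us = max_iops(64, 2_500)

# The same cap if per-IO latency dropped to an assumed 500 microseconds.
host_iops_at_500us = max_iops(64, 500)

print(hdd_iops, host_iops_at_2500us, host_iops_at_500us)
```

Under these assumptions the cap bounds the entire host at 25,600 IOPS when each IO takes 2.5 ms, no matter how many instances or volumes share it. The only ways to raise that ceiling are to lower latency or to allow more requests in flight.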

We fixed the main issues with software virtualization, but even that wasn’t enough. In 2013, we were well into the development of our first Nitro offload card dedicated to networking. With this first card, we moved the processing of VPC, our software defined network, from the Xen dom0 kernel into a dedicated hardware pipeline. By isolating the packet processing data plane from the hypervisor, we no longer needed to steal CPU cycles from customer instances to drive network traffic. Instead, we leveraged Xen’s ability to pass a virtual PCI device directly to the instance.

This was a fantastic win for latency and efficiency, so we decided to do the same thing for EBS storage. By moving more processing to hardware, we removed several operating system queues in the hypervisor, even if we weren’t ready to pass the device directly to the instance just yet. Even without passthrough, by offloading more of the interrupt driven work, the hypervisor spent less time servicing the requests, because the hardware itself had dedicated interrupt processing functions. This second Nitro card also had hardware capability to handle EBS encrypted volumes with no impact to EBS volume performance. Leveraging our hardware for encryption also meant that the encryption key material is kept separate from the hypervisor, which further protects customer data.

Moving EBS to Nitro was a huge win, but it almost immediately shifted the overhead to the network itself. Here the problem seemed simple on the surface. We just needed to tune our wire protocol with the latest and greatest data center TCP tuning parameters, while choosing the best congestion control algorithm. There were a few shifts that were working against us: AWS was experimenting with different data center cabling topology, and our AZs, once a single data center, were growing beyond those boundaries. Our tuning would be beneficial, as in the example above, where adding a small amount of random latency to requests to storage servers counter-intuitively reduced the average latency and the outliers due to the smoothing effect it has on the network. These changes were ultimately short lived as we continuously increased the performance and scale of our system, and we had to continually measure and monitor to make sure we didn’t regress.

Knowing that we would need something better than TCP, in 2014 we started laying the foundation for Scalable Reliable Datagram (SRD) with “A Cloud-Optimized Transport Protocol for Elastic and Scalable HPC”. Early on we set a few requirements, including a protocol that could improve our ability to recover and route around failures, and we wanted something that could be easily offloaded into hardware. As we were investigating, we made two key observations: 1/ we didn’t need to design for the general internet, but we could focus specifically on our data center network designs, and 2/ in storage, the execution of IO requests that are in flight could be reordered. We didn’t need to pay the penalty of TCP’s strict in-order delivery guarantees, but could instead send different requests down different network paths, and execute them upon arrival. Any barriers could be handled at the client before they were sent on the network. What we ended up with is a protocol that’s useful not just for storage, but for networking, too. When used in Elastic Network Adapter (ENA) Express, SRD improves the performance of your TCP stacks in your guest. SRD can drive the network at higher utilization by taking advantage of multiple network paths and reducing the overflow and queues in the intermediate network devices.
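The cost of strict in-order delivery can be seen in a toy model. The sketch below is not SRD or TCP: the four paths, their delays, and the round-robin spraying of requests are assumptions chosen only to show head-of-line blocking. Eight requests are sent at time zero across four paths, one of which is congested. With in-order delivery, a request cannot complete before every earlier request has arrived. With execute-on-arrival, each request completes as soon as it shows up.

```python
def completion_times(arrivals, in_order):
    """arrivals[i] is the time at which request i reaches the storage server."""
    if not in_order:
        return list(arrivals)  # execute each request as soon as it arrives
    done, latest = [], 0
    for t in arrivals:
        latest = max(latest, t)  # request i waits for requests 0..i-1
        done.append(latest)
    return done


def mean(values):
    return sum(values) / len(values)


# Hypothetical one-way delays in microseconds; the third path is congested.
path_delay_us = [100, 100, 900, 100]
# Spray eight requests, all sent at time zero, round-robin across the paths.
arrivals = [path_delay_us[i % len(path_delay_us)] for i in range(8)]

in_order_mean = mean(completion_times(arrivals, in_order=True))
on_arrival_mean = mean(completion_times(arrivals, in_order=False))
print(in_order_mean, on_arrival_mean)
```

In this model one slow path drags the in-order average to 700 microseconds, while executing on arrival averages 300 microseconds because only the two requests that actually took the congested path pay for it.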

Performance improvements are never about a single focus. It’s a discipline of continuously challenging your assumptions, measuring and understanding, and shifting focus to the most meaningful opportunities.

Constraints breed innovation

We weren’t satisfied that only a relatively small number of volumes and customers had better performance. We wanted to bring the benefits of SSDs to everyone. This is an area where scale makes things difficult. We had a large fleet of thousands of storage servers running millions of non-provisioned IOPS customer volumes. Some of those same volumes still exist today. It would be an expensive proposition to throw away all of that hardware and replace it.

There was empty space in the chassis, but the only location that didn’t cause disruption in the cooling airflow was between the motherboard and the fans. The nice thing about SSDs is that they are typically small and light, but we couldn’t have them flopping around loose in the chassis. After some trial and error, and help from our material scientists, we found heat resistant, industrial strength hook and loop fastening tape, which also let us service these SSDs for the remaining life of the servers.

Armed with this knowledge, and a lot of human effort, over the course of a few months in 2013, EBS was able to put a single SSD into each and every one of those thousands of servers. We made a small change to our software that staged new writes onto that SSD, allowing us to return completion back to your application, and then flushed the writes to the slower hard disk asynchronously. And we did this with no disruption to customers: we were converting a propeller aircraft to a jet while it was in flight. The thing that made this possible is that we designed our system from the start with non-disruptive maintenance events in mind. We could retarget EBS volumes to new storage servers, and update software or rebuild the empty servers as needed.
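The write staging idea can be sketched in a few lines. This is a simplified illustration, not the EBS implementation: the class name `StagedVolume`, the in-memory dictionaries standing in for the SSD and the hard disk, and the explicit `flush` call standing in for a background task are all assumptions. A write is acknowledged once it lands in the fast stage, reads check the stage first so they always see the newest data, and a later flush drains the stage to the slow disk.

```python
from collections import OrderedDict


class StagedVolume:
    """Toy write-back staging: acknowledge from a fast stage, flush later."""

    def __init__(self):
        self.stage = OrderedDict()  # stands in for the SSD
        self.disk = {}              # stands in for the slower hard disk

    def write(self, block, data):
        """Stage the write and acknowledge without touching the slow disk."""
        self.stage[block] = data
        self.stage.move_to_end(block)
        return "ack"

    def read(self, block):
        """Serve the newest copy: staged data wins over what is on disk."""
        if block in self.stage:
            return self.stage[block]
        return self.disk.get(block)

    def flush(self, max_blocks=None):
        """Drain staged writes to disk, oldest first; returns blocks flushed."""
        flushed = 0
        while self.stage and (max_blocks is None or flushed < max_blocks):
            block, data = self.stage.popitem(last=False)
            self.disk[block] = data
            flushed += 1
        return flushed


vol = StagedVolume()
ack = vol.write(7, b"new data")     # completes at the speed of the stage
staged_only = 7 not in vol.disk     # nothing has reached the disk yet
flushed = vol.flush()               # a real system would do this in the background
print(ack, staged_only, flushed, vol.read(7))
```

The design choice this highlights is that the latency the application sees is set by the stage, while the slow media only has to keep up with the average write rate rather than every individual request.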

This ability to migrate customer volumes to new storage servers has come in handy several times throughout EBS’s history as we’ve identified new, more efficient data structures for our on-disk format, or brought in new hardware to replace the old hardware. There are volumes still active from the first few months of EBS’s launch in 2008. These volumes have likely been on hundreds of different servers and multiple generations of hardware as we’ve updated and rebuilt our fleet, all without impacting the workloads on those volumes.

Reflecting on scaling performance

There’s one more journey over this time that I’d like to share, and that’s a personal one. Most of my career prior to Amazon had been in either early startup or similarly small company cultures. I had built managed services, and even distributed systems out of necessity, but I had never worked on anything close to the scale of EBS, even the EBS of 2011, both in technology and organization size. I was used to solving problems by myself, or maybe with one or two other equally motivated engineers.

I really enjoy going super deep into problems and attacking them until they’re complete, but there was a pivotal moment when a colleague that I trusted pointed out that I was becoming a performance bottleneck for our organization. As an engineer who had grown to be an expert in the system, but who also cared really, really deeply about all aspects of EBS, I found myself on every escalation and also wanting to review every commit and every proposed design change. If we were going to be successful, then I had to learn how to scale myself. I wasn’t going to solve this with just ownership and bias for action.

This led to even more experimentation, but not in the code. I knew I was working with other smart folks, but I also needed to take a step back and think about how to make them effective. One of my favorite tools to come out of this was peer debugging. I remember a session with a handful of engineers in one of our lounge rooms, with code and a few terminals projected on a wall. One of the engineers exclaimed, “Uhhhh, there’s no way that’s right!” and we had found something that had been nagging us for a while. We had overlooked where and how we were locking updates to critical data structures. Our design didn’t usually cause issues, but occasionally we would see slow responses to requests, and fixing this removed one source of jitter. We don’t always use this technique, but the neat thing is that we are able to combine our shared systems knowledge when things get really tricky.

Through all of this, I realized that empowering people, giving them the ability to safely experiment, can often lead to results that are even better than what was expected. I’ve spent a large portion of my career since then focusing on ways to remove roadblocks, but leave the guardrails in place, pushing engineers out of their comfort zone. There’s a bit of psychology to engineering leadership that I hadn’t appreciated. I never expected that one of the most rewarding parts of my career would be encouraging and nurturing others, watching them own and solve problems, and most importantly celebrating the wins with them!

Conclusion

Reflecting back on where we started, we knew we could do better, but we weren’t sure how much better. We chose to approach the problem, not as a big monolithic change, but as a series of incremental improvements over time. This allowed us to deliver customer value sooner, and course correct as we learned more about changing customer workloads. We’ve improved the shape of the EBS latency experience from one averaging more than 10 ms per IO operation to consistent sub-millisecond IO operations with our highest performing io2 Block Express volumes. We accomplished all this without taking the service offline to deliver a new architecture.

We know we’re not done. Our customers will always want more, and that challenge is what keeps us motivated to innovate and iterate.