Last Updated on October 29, 2022

We have seen how to train the Transformer model on a dataset of English and German sentence pairs, and how to plot the training and validation loss curves to diagnose the model’s learning performance and decide at which epoch to run inference on the trained model. We are now ready to run inference on the trained Transformer model to translate an input sentence.

In this tutorial, you will discover how to run inference on the trained Transformer model for neural machine translation.

After completing this tutorial, you will know:

- How to run inference on the trained Transformer model

- How to generate text translations

Let’s get started.

Inferencing the Transformer model

Photo by Karsten Würth, some rights reserved.

Tutorial Overview

This tutorial is divided into three parts; they are:

- Recap of the Transformer Architecture

- Inferencing the Transformer Model

- Testing Out the Code

Prerequisites

For this tutorial, we assume that you are already familiar with:

Recap of the Transformer Architecture

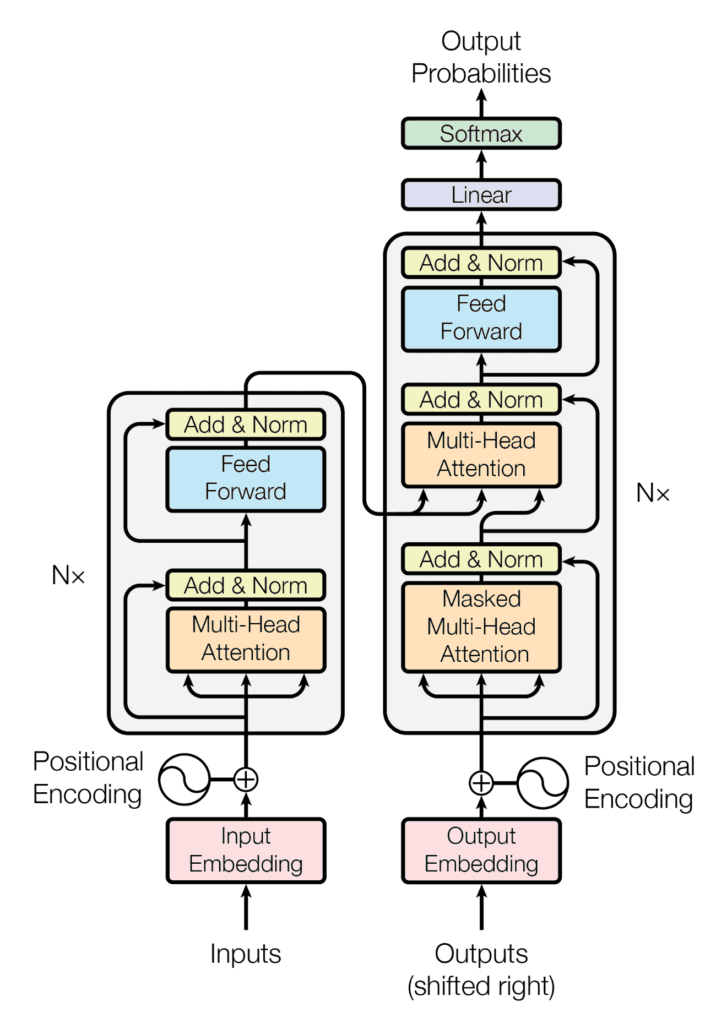

Recall that the Transformer architecture follows an encoder-decoder structure. The encoder, on the left-hand side, is tasked with mapping an input sequence to a sequence of continuous representations; the decoder, on the right-hand side, receives the output of the encoder together with the decoder output at the previous time step to generate an output sequence.

The encoder-decoder structure of the Transformer architecture

Taken from “Attention Is All You Need”

In generating an output sequence, the Transformer does not rely on recurrence and convolutions.

You have seen how to implement the complete Transformer model and subsequently train it on a dataset of English and German sentence pairs. Let’s now proceed to run inference on the trained model for neural machine translation.

Inferencing the Transformer Model

Let’s start by creating a new instance of the `TransformerModel` class that you implemented previously.

You will feed into it the relevant input arguments as specified in the paper of Vaswani et al. (2017) and the relevant information about the dataset in use:

```python
# Define the model parameters
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_model = 512  # Dimensionality of model layers' outputs
d_ff = 2048  # Dimensionality of the inner fully connected layer
n = 6  # Number of layers in the encoder stack

# Define the dataset parameters
enc_seq_length = 7  # Encoder sequence length
dec_seq_length = 12  # Decoder sequence length
enc_vocab_size = 2405  # Encoder vocabulary size
dec_vocab_size = 3858  # Decoder vocabulary size

# Create model
inferencing_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, 0)
```

Here, note that the last input being fed into the `TransformerModel` corresponds to the dropout rate for each of the `Dropout` layers in the Transformer model. These `Dropout` layers will not be used during model inferencing (you will eventually set the `training` argument to `False`), so you may safely set the dropout rate to 0.
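As a rough illustration of why a rate of 0 (or `training=False`) turns dropout into a no-op, the following pure-Python sketch mimics inverted dropout on a list of numbers. The `dropout` helper is hypothetical and written only for this example; it is not the Keras `Dropout` layer, which operates on tensors.

```python
import random


def dropout(values, rate, training, seed=None):
    """Toy inverted dropout on a list of floats (illustrative only)."""
    if not training or rate == 0:
        # Inference mode, or nothing to drop: inputs pass through unchanged
        return list(values)
    rng = random.Random(seed)
    keep_prob = 1.0 - rate
    # Zero each value with probability `rate`; rescale survivors by 1 / keep_prob
    return [v / keep_prob if rng.random() >= rate else 0.0 for v in values]


activations = [0.5, -1.0, 2.0, 3.5]

# With training=False the values are untouched, whatever the rate
print(dropout(activations, rate=0.1, training=False))

# With rate=0 the values are also untouched, even in training mode
print(dropout(activations, rate=0, training=True))
```

Both calls print the original list, which is why the dropout rate passed to the inferencing model does not affect its predictions.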

Furthermore, the `TransformerModel` class was already saved into a separate script named `model.py`. Hence, to be able to use the `TransformerModel` class, you need to include `from model import TransformerModel`.
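Before moving on, it may help to see how the head dimensions defined earlier relate to the model width. In Vaswani et al. (2017), each of the h attention heads works with d_k = d_v = d_model / h. The small check below uses only the values already listed in this tutorial; the names `per_head` and `concat_width` are introduced here for illustration.

```python
h = 8          # Number of self-attention heads
d_k = 64       # Dimensionality of the projected queries and keys
d_v = 64       # Dimensionality of the projected values
d_model = 512  # Dimensionality of the model layers' outputs

# Each head gets an equal slice of the model dimensionality
per_head = d_model // h
print(per_head)

# Concatenating the h value heads restores the model dimensionality
concat_width = h * d_v
print(concat_width)
```

The two printed values are 64 and 512, matching `d_k`/`d_v` and `d_model` respectively.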

Next, let’s create a class, `Translate`, that inherits from the `Module` base class (imported from TensorFlow in the code listing) and assigns the initialized inferencing model to the variable `transformer`:

```python
class Translate(Module):
    def __init__(self, inferencing_model, **kwargs):
        super(Translate, self).__init__(**kwargs)
        self.transformer = inferencing_model
        ...
```

When you trained the Transformer model, you saw that you first needed to tokenize the sequences of text that were to be fed into both the encoder and decoder. You achieved this by creating a vocabulary of words and replacing each word with its corresponding vocabulary index.

You will need to implement a similar process during the inferencing stage before feeding the sequence of text to be translated into the Transformer model.

For this purpose, you will include within the class the following `load_tokenizer` method, which will serve to load the encoder and decoder tokenizers that you would have generated and saved during the training stage:

```python
def load_tokenizer(self, name):
    with open(name, 'rb') as handle:
        return load(handle)
```

It is important that you tokenize the input text at the inferencing stage using the same tokenizers generated at the training stage of the Transformer model, since these tokenizers would have already been trained on text sequences similar to your testing data.
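The save-then-load round trip can be sketched with the standard `pickle` module alone. In this minimal example, a plain dictionary stands in for the Keras tokenizer, and the vocabulary, the `save_tokenizer` helper, and the temporary file location are all assumptions made for illustration.

```python
import os
import pickle
import tempfile

# Hypothetical word-to-index vocabulary standing in for a trained tokenizer
word_index = {"<start>": 1, "<eos>": 2, "im": 3, "thirsty": 4}


def save_tokenizer(tokenizer, name):
    # Serialize the tokenizer to disk, as would be done at training time
    with open(name, "wb") as handle:
        pickle.dump(tokenizer, handle, protocol=pickle.HIGHEST_PROTOCOL)


def load_tokenizer(name):
    # Restore the very same tokenizer at inference time
    with open(name, "rb") as handle:
        return pickle.load(handle)


with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "enc_tokenizer.pkl")
    save_tokenizer(word_index, path)
    restored = load_tokenizer(path)

# The restored vocabulary maps each word to the same index as before
encoded = [restored[word] for word in "<start> im thirsty <eos>".split()]
print(encoded)
```

Because the restored mapping is identical to the saved one, the indices fed to the model at inference time agree with those it saw during training.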

The next step is to create the class method, `__call__()`, that will take care to:

- Append the start (<START>) and end-of-string (<EOS>) tokens to the input sentence:

```python
def __call__(self, sentence):
    sentence[0] = "<START> " + sentence[0] + " <EOS>"
```

- Load the encoder and decoder tokenizers (in this case, saved in the `enc_tokenizer.pkl` and `dec_tokenizer.pkl` pickle files, respectively):

```python
enc_tokenizer = self.load_tokenizer('enc_tokenizer.pkl')
dec_tokenizer = self.load_tokenizer('dec_tokenizer.pkl')
```

- Prepare the input sentence by tokenizing it first, then padding it to the maximum encoder sequence length, and subsequently converting it to a tensor:

```python
encoder_input = enc_tokenizer.texts_to_sequences(sentence)
encoder_input = pad_sequences(encoder_input, maxlen=enc_seq_length, padding='post')
encoder_input = convert_to_tensor(encoder_input, dtype=int64)
```

- Repeat a similar tokenization and tensor conversion procedure for the <START> and <EOS> tokens at the output:

```python
output_start = dec_tokenizer.texts_to_sequences(["<START>"])
output_start = convert_to_tensor(output_start[0], dtype=int64)

output_end = dec_tokenizer.texts_to_sequences(["<EOS>"])
output_end = convert_to_tensor(output_end[0], dtype=int64)
```

- Prepare the output array that will contain the translated text. Since you do not know the length of the translated sentence in advance, you will initialize the size of the output array to 0, but set its `dynamic_size` parameter to `True` so that it may grow past its initial size. You will then set the first value in this output array to the <START> token:

```python
decoder_output = TensorArray(dtype=int64, size=0, dynamic_size=True)
decoder_output = decoder_output.write(0, output_start)
```

- Iterate, up to the decoder sequence length, each time calling the Transformer model to predict an output token. Here, the `training` argument, which is then passed on to each of the Transformer’s `Dropout` layers, is set to `False` so that no values are dropped during inference. The prediction with the highest score is then selected and written at the next available index of the output array. The `for` loop is terminated with a `break` statement as soon as an <EOS> token is predicted:

```python
for i in range(dec_seq_length):

    prediction = self.transformer(encoder_input, transpose(decoder_output.stack()), training=False)

    prediction = prediction[:, -1, :]

    predicted_id = argmax(prediction, axis=-1)
    predicted_id = predicted_id[0][newaxis]

    decoder_output = decoder_output.write(i + 1, predicted_id)

    if predicted_id == output_end:
        break
```

- Decode the predicted tokens into an output list and return it:

```python
output = transpose(decoder_output.stack())[0]
output = output.numpy()

output_str = []

# Decode the predicted tokens into an output list
for i in range(output.shape[0]):

    key = output[i]
    translation = dec_tokenizer.index_word[key]
    output_str.append(translation)

return output_str
```
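The decoding steps listed above amount to a greedy search: at every iteration, the token with the highest score at the last decoder position is appended to the output, until <EOS> appears or the maximum length is reached. The sketch below reproduces that control flow in plain Python. The `toy_model` function is a hypothetical stand-in that always "predicts" a fixed script of token ids; it is not the Transformer, and the small vocabulary is assumed for illustration.

```python
START, EOS = 1, 2
VOCAB_SIZE = 6  # Index 0 is reserved for padding
index_word = {1: "start", 2: "eos", 3: "ich", 4: "bin", 5: "durstig"}


def toy_model(encoder_input, decoder_tokens):
    """Hypothetical model: returns one score vector per decoder position."""
    script = [3, 4, 5, 2]  # Token ids this toy model always predicts, in order
    outputs = []
    for position in range(len(decoder_tokens)):
        target = script[min(position, len(script) - 1)]
        outputs.append([1.0 if token_id == target else 0.0
                        for token_id in range(VOCAB_SIZE)])
    return outputs


def greedy_decode(encoder_input, max_length):
    decoder_tokens = [START]  # A Python list grows dynamically, like the TensorArray
    for _ in range(max_length):
        # Keep only the scores at the last position, as prediction[:, -1, :] does
        scores = toy_model(encoder_input, decoder_tokens)[-1]
        # Greedy choice: index of the highest score
        predicted_id = max(range(len(scores)), key=scores.__getitem__)
        decoder_tokens.append(predicted_id)
        if predicted_id == EOS:
            break
    # Map the token ids back to words
    return [index_word[token_id] for token_id in decoder_tokens]


print(greedy_decode([1, 3, 4, 2, 0, 0, 0], max_length=12))
```

With this toy model, the loop stops after four iterations and prints `['start', 'ich', 'bin', 'durstig', 'eos']`. Lowering `max_length` truncates the output before <EOS> is reached, which is what the decoder sequence length does in the tutorial’s loop.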

The complete code listing, so far, is as follows:

```python
from pickle import load
from tensorflow import Module
from keras.preprocessing.sequence import pad_sequences
from tensorflow import convert_to_tensor, int64, TensorArray, argmax, newaxis, transpose
from model import TransformerModel

# Define the model parameters
h = 8  # Number of self-attention heads
d_k = 64  # Dimensionality of the linearly projected queries and keys
d_v = 64  # Dimensionality of the linearly projected values
d_model = 512  # Dimensionality of model layers' outputs
d_ff = 2048  # Dimensionality of the inner fully connected layer
n = 6  # Number of layers in the encoder stack

# Define the dataset parameters
enc_seq_length = 7  # Encoder sequence length
dec_seq_length = 12  # Decoder sequence length
enc_vocab_size = 2405  # Encoder vocabulary size
dec_vocab_size = 3858  # Decoder vocabulary size

# Create model
inferencing_model = TransformerModel(enc_vocab_size, dec_vocab_size, enc_seq_length, dec_seq_length, h, d_k, d_v, d_model, d_ff, n, 0)


class Translate(Module):
    def __init__(self, inferencing_model, **kwargs):
        super(Translate, self).__init__(**kwargs)
        self.transformer = inferencing_model

    def load_tokenizer(self, name):
        with open(name, 'rb') as handle:
            return load(handle)

    def __call__(self, sentence):
        # Append start and end of string tokens to the input sentence
        sentence[0] = "<START> " + sentence[0] + " <EOS>"

        # Load encoder and decoder tokenizers
        enc_tokenizer = self.load_tokenizer('enc_tokenizer.pkl')
        dec_tokenizer = self.load_tokenizer('dec_tokenizer.pkl')

        # Prepare the input sentence by tokenizing, padding and converting to tensor
        encoder_input = enc_tokenizer.texts_to_sequences(sentence)
        encoder_input = pad_sequences(encoder_input, maxlen=enc_seq_length, padding='post')
        encoder_input = convert_to_tensor(encoder_input, dtype=int64)

        # Prepare the output <START> token by tokenizing, and converting to tensor
        output_start = dec_tokenizer.texts_to_sequences(["<START>"])
        output_start = convert_to_tensor(output_start[0], dtype=int64)

        # Prepare the output <EOS> token by tokenizing, and converting to tensor
        output_end = dec_tokenizer.texts_to_sequences(["<EOS>"])
        output_end = convert_to_tensor(output_end[0], dtype=int64)

        # Prepare the output array of dynamic size
        decoder_output = TensorArray(dtype=int64, size=0, dynamic_size=True)
        decoder_output = decoder_output.write(0, output_start)

        for i in range(dec_seq_length):

            # Predict an output token
            prediction = self.transformer(encoder_input, transpose(decoder_output.stack()), training=False)

            prediction = prediction[:, -1, :]

            # Select the prediction with the highest score
            predicted_id = argmax(prediction, axis=-1)
            predicted_id = predicted_id[0][newaxis]

            # Write the selected prediction to the output array at the next available index
            decoder_output = decoder_output.write(i + 1, predicted_id)

            # Break if an <EOS> token is predicted
            if predicted_id == output_end:
                break

        output = transpose(decoder_output.stack())[0]
        output = output.numpy()

        output_str = []

        # Decode the predicted tokens into an output list
        for i in range(output.shape[0]):

            key = output[i]
            output_str.append(dec_tokenizer.index_word[key])

        return output_str
```
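The padding step in the listing can be pictured with plain Python lists. The `pad_post` helper below is a hypothetical, simplified stand-in for `pad_sequences(..., padding='post')` applied to a single sequence, and the token ids are made up for illustration. It also mirrors the Keras default of `truncating='pre'`, which drops ids from the start of a sequence that is longer than `maxlen`.

```python
def pad_post(sequence, maxlen, value=0):
    """Pad a list of token ids at the end, up to maxlen (illustrative helper)."""
    # Keep the last maxlen ids if the sequence is too long; this mirrors the
    # default truncating='pre' behavior of pad_sequences
    trimmed = sequence[-maxlen:]
    return trimmed + [value] * (maxlen - len(trimmed))


enc_seq_length = 7
token_ids = [1, 15, 27, 2]  # Hypothetical ids for "<START> im thirsty <EOS>"

print(pad_post(token_ids, enc_seq_length))
```

This prints `[1, 15, 27, 2, 0, 0, 0]`: the four token ids followed by three padding zeros, giving the fixed length that the encoder expects.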

Testing Out the Code

In order to test out the code, let’s have a look at the `test_dataset.txt` file that you would have saved when preparing the dataset for training. This text file contains a set of English-German sentence pairs that have been reserved for testing, from which you can select a couple of sentences to test.

Let’s start with the first sentence:

```python
# Sentence to translate
sentence = ['im thirsty']
```

The corresponding ground truth translation in German for this sentence, including the <START> and <EOS> decoder tokens, should be: <START> ich bin durstig <EOS>.

If you have a look at the plotted training and validation loss curves for this model (here, you are training for 20 epochs), you may notice that the validation loss curve slows down considerably and starts plateauing at around epoch 16.
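Reading a plateau off a plot is a judgment call. One possible way to make it explicit is to look for the first epoch at which the validation loss improves by less than some threshold. The sketch below is only an illustration: the `first_plateau_epoch` helper, the `min_delta` threshold, and the loss values are all made up and are not this tutorial’s results.

```python
def first_plateau_epoch(val_losses, min_delta):
    """Return the first 1-based epoch whose improvement over the previous epoch is below min_delta."""
    for index in range(1, len(val_losses)):
        improvement = val_losses[index - 1] - val_losses[index]
        if improvement < min_delta:
            return index + 1
    # No plateau found: fall back to the last epoch
    return len(val_losses)


# Made-up validation losses for epochs 1 to 6 (NOT the tutorial's results)
val_losses = [4.0, 3.0, 2.5, 2.25, 2.2, 2.19]

print(first_plateau_epoch(val_losses, min_delta=0.1))
```

For these hypothetical values, the function returns epoch 5, the first epoch at which the loss drops by less than 0.1.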

So let’s proceed to load the saved model’s weights at the 16th epoch and check out the prediction that is generated by the model:

```python
# Load the trained model's weights at the specified epoch
inferencing_model.load_weights('weights/wghts16.ckpt')

# Create a new instance of the 'Translate' class
translator = Translate(inferencing_model)

# Translate the input sentence
print(translator(sentence))
```

Running the lines of code above produces the following translated list of words:

```
['start', 'ich', 'bin', 'durstig', 'eos']
```

This is equivalent to the ground truth German sentence that was expected (always keep in mind that since you are training the Transformer model from scratch, you may arrive at different results depending on the random initialization of the model weights).
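The output contains `start` and `eos` rather than <START> and <EOS>, which suggests that the tokenizer lowercases the text and filters out the angle brackets (this is the default behavior of the Keras `Tokenizer`, assumed here to be the one in use). Under that assumption, a small hypothetical `normalize` helper makes the comparison with the ground truth explicit:

```python
import re


def normalize(text):
    """Lowercase, strip angle brackets, and split on whitespace (assumed tokenizer behavior)."""
    return re.sub(r"[<>]", "", text.lower()).split()


ground_truth = "<START> ich bin durstig <EOS>"
prediction = ["start", "ich", "bin", "durstig", "eos"]

# True when the predicted tokens match the normalized ground truth exactly
print(normalize(ground_truth) == prediction)
```

This prints `True`, confirming the token-for-token match.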

Let’s check out what would have happened if you had, instead, loaded a set of weights corresponding to a much earlier epoch, such as the 4th epoch. In this case, the generated translation is the following:

```
['start', 'ich', 'bin', 'nicht', 'nicht', 'eos']
```

In English, this translates to: I am not not, which is clearly far off from the input English sentence. This is expected since, at this epoch, the learning process of the Transformer model is still at a very early stage.

Let’s try again with a second sentence from the test dataset:

```python
# Sentence to translate
sentence = ['are we done']
```

The corresponding ground truth translation in German for this sentence, including the <START> and <EOS> decoder tokens, should be: <START> sind wir dann durch <EOS>.

The model’s translation for this sentence, using the weights saved at epoch 16, is:

```
['start', 'ich', 'war', 'fertig', 'eos']
```

This, instead, translates to: I was ready. While this is also not equal to the ground truth, it is close to its meaning.

What the last test suggests, however, is that the Transformer model might have required many more data samples to train effectively. This is also corroborated by the relatively high value at which the validation loss curve plateaus.

Indeed, Transformer models are notorious for being very data hungry. Vaswani et al. (2017), for example, trained their English-to-German translation model using a dataset containing around 4.5 million sentence pairs.

We trained on the standard WMT 2014 English-German dataset consisting of about 4.5 million sentence pairs… For English-French, we used the significantly larger WMT 2014 English-French dataset consisting of 36M sentences…

– Attention Is All You Need, 2017.

They reported that it took them 3.5 days on 8 P100 GPUs to train the English-to-German translation model.

In comparison, you have only trained on a dataset comprising 10,000 data samples here, split between training, validation, and test sets.
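To put the two dataset sizes side by side, a quick calculation with the figures quoted above (taking "about 4.5 million" as exactly 4,500,000 for the sake of the arithmetic) gives:

```python
paper_pairs = 4_500_000   # Approximate WMT 2014 English-German sentence pairs (Vaswani et al., 2017)
tutorial_pairs = 10_000   # Data samples used in this tutorial

# How many times larger the paper's dataset is
ratio = paper_pairs // tutorial_pairs
print(ratio)
```

The paper’s English-German dataset is therefore roughly 450 times larger than the one used here.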

So the next task is actually for you. If you have the computational resources available, try to train the Transformer model on a much larger set of sentence pairs and see if you can obtain better results than the translations obtained here with a limited amount of data.

Summary

In this tutorial, you discovered how to run inference on the trained Transformer model for neural machine translation.

Specifically, you learned:

- How to run inference on the trained Transformer model

- How to generate text translations

Do you have any questions?

Ask your questions in the comments below, and I’ll do my best to answer.
