
In December 2022, we announced our partnership with Isovalent to bring a next-generation extended Berkeley Packet Filter (eBPF) dataplane to cloud-native applications in Microsoft Azure, and revealed that the next generation of the Azure Container Network Interface (CNI) dataplane would be powered by eBPF and Cilium.

Today, we’re thrilled to announce the general availability of Azure CNI powered by Cilium. Azure CNI powered by Cilium is a next-generation networking platform that combines two powerful technologies: Azure CNI, which provides scalable and flexible Pod networking control integrated with the Azure Virtual Network stack, and Cilium, an open-source project that uses an eBPF-powered data plane for networking, security, and observability in Kubernetes. Azure CNI powered by Cilium takes advantage of Cilium’s direct routing mode inside guest virtual machines and combines it with Azure native routing inside the Azure network, enabling improved network performance for workloads deployed in Azure Kubernetes Service (AKS) clusters, along with built-in support for enforcing network security.

In this blog, we’ll delve further into the performance and scalability results achieved with this powerful networking offering in Azure Kubernetes Service.

Performance and scale results

Performance tests were conducted in AKS clusters in overlay mode to analyze system behavior and evaluate performance under heavy load. These tests simulate scenarios in which the cluster is subjected to high levels of resource utilization, such as large numbers of concurrent requests or heavy workloads. The goal is to measure performance metrics such as response time, throughput, scalability, and resource utilization, in order to understand the cluster’s behavior and identify any performance bottlenecks.

Service routing latency

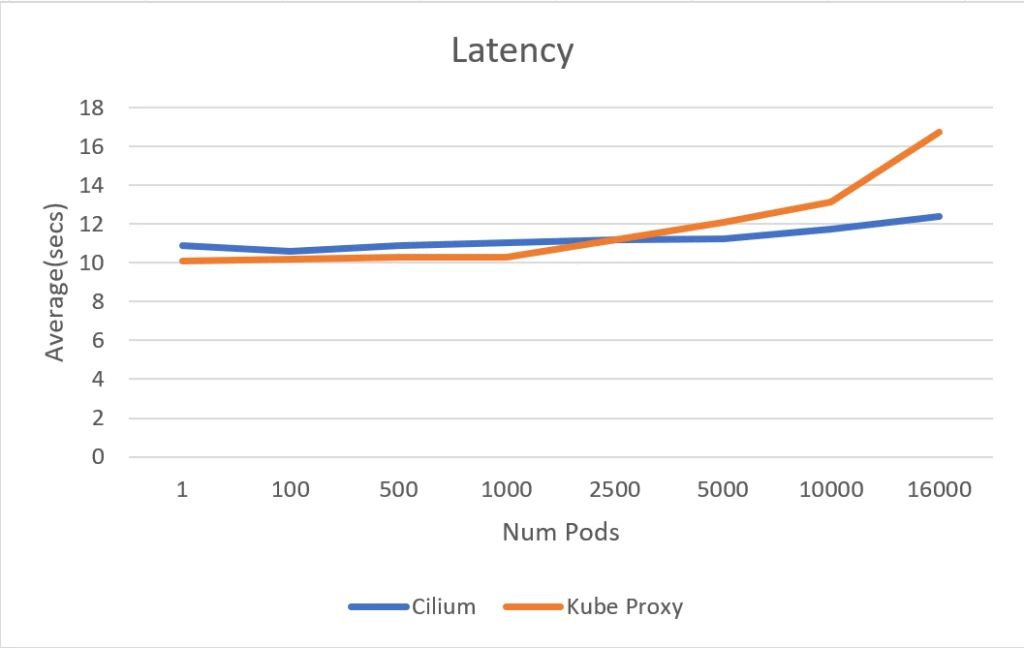

The experiment used a Standard D4 v3 SKU node pool (16 GB memory, 4 vCPU) in an AKS cluster. Service routing latency was measured with apachebench, a tool commonly used for benchmarking and load testing web servers. A total of 50,000 requests were generated, and the overall completion time was measured. We observed that Azure CNI powered by Cilium and kube-proxy initially show similar service routing latency until the number of pods reaches 5,000. Beyond this threshold, service routing latency in the kube-proxy-based cluster begins to increase, whereas the Cilium-based cluster maintains a consistent latency level.
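The measurement described above (a fixed number of requests, timed from first to last) can be sketched in Python. This is a minimal stand-in for apachebench, not the harness used in the experiment: a local test server replaces the Kubernetes Service, and the request count and concurrency below are illustrative assumptions, since the post does not state the concurrency level. With apachebench itself, the total request count is set with `-n` and the concurrency with `-c`.

```python
# Minimal ab-style benchmark sketch: a fixed number of GET requests with bounded
# concurrency, timed end to end. A local server stands in for the Kubernetes
# Service; request count and concurrency are illustrative assumptions.
import threading
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Bypass any proxy configured in the environment so requests hit the local server.
OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


class BenchServer(ThreadingHTTPServer):
    request_queue_size = 128  # larger listen backlog for concurrent clients


class OkHandler(BaseHTTPRequestHandler):
    """Answers every GET with a tiny 200 response."""

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # suppress per-request logging


def fetch(url):
    with OPENER.open(url, timeout=5) as resp:
        resp.read()
        return resp.status


def run_benchmark(url, total_requests, concurrency):
    """Send total_requests GETs using `concurrency` workers; return (elapsed_s, statuses)."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        statuses = list(pool.map(fetch, [url] * total_requests))
    return time.perf_counter() - start, statuses


if __name__ == "__main__":
    server = BenchServer(("127.0.0.1", 0), OkHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    url = f"http://127.0.0.1:{server.server_address[1]}/"
    elapsed, statuses = run_benchmark(url, total_requests=500, concurrency=10)
    server.shutdown()
    server.server_close()
    print(f"{len(statuses)} requests completed in {elapsed:.3f} s "
          f"({len(statuses) / elapsed:.0f} requests/s)")
```

Pointing `run_benchmark` at a Service address instead of the local server, and raising the request count, gives the same kind of total-completion-time figure the experiment reports.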

Notably, when scaling up to 16,000 pods, the Azure CNI powered by Cilium cluster shows a significant improvement, with a 30 percent reduction in service routing latency compared to the kube-proxy cluster. These results confirm that eBPF-based service routing performs better at scale than the iptables-based service routing used by kube-proxy.

Service routing latency in seconds
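To see why rule count matters, consider a deliberately simplified model. In iptables mode, kube-proxy programs rules that a packet traverses in sequence, so the work per packet grows with the number of service and endpoint rules; Cilium instead resolves service addresses with eBPF hash map lookups. The sketch below counts how many entries a linear rule chain checks versus a single dictionary lookup. It illustrates the scaling trend only and is not kube-proxy or Cilium code; the addresses, backend names, and entry counts (chosen to echo the pod counts above) are hypothetical.

```python
# Simplified model, not kube-proxy or Cilium source code: a linear rule chain
# (iptables-style) versus a single hash-map lookup (eBPF-map-style).

def make_rules(n):
    """Hypothetical service entries: ("10.0.x.y:80", "backend-i") for i in range(n)."""
    return [(f"10.0.{i // 256}.{i % 256}:80", f"backend-{i}") for i in range(n)]


def chain_lookup(rules, dst):
    """Check rules in order until one matches; return (backend, rules_evaluated)."""
    for evaluated, (match, backend) in enumerate(rules, start=1):
        if match == dst:
            return backend, evaluated
    return None, len(rules)


def map_lookup(table, dst):
    """Resolve dst with one keyed lookup; return (backend, lookups)."""
    return table.get(dst), 1


if __name__ == "__main__":
    for n in (1_000, 5_000, 16_000):
        rules = make_rules(n)
        table = dict(rules)
        worst = rules[-1][0]  # the last rule is the worst case for a linear chain
        _, chain_cost = chain_lookup(rules, worst)
        _, map_cost = map_lookup(table, worst)
        print(f"{n:>6} entries: chain checked {chain_cost} rules, map did {map_cost} lookup")
```

Real packet-processing cost also depends on connection tracking, rule layout, and kernel details, so this model explains the direction of the trend rather than the measured 30 percent figure.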

Scale test performance

The scale test was conducted in an Azure Kubernetes Service cluster running Azure CNI powered by Cilium, using the Standard D4 v3 SKU node pool (16 GB memory, 4 vCPU). The purpose of the test was to evaluate the cluster’s performance under high-scale conditions. The test focused on capturing the central processing unit (CPU) and memory usage of the nodes, as well as monitoring the load on the API server and Cilium.

The test covered three distinct scenarios, each designed to assess a different aspect of the cluster’s performance under varying conditions.

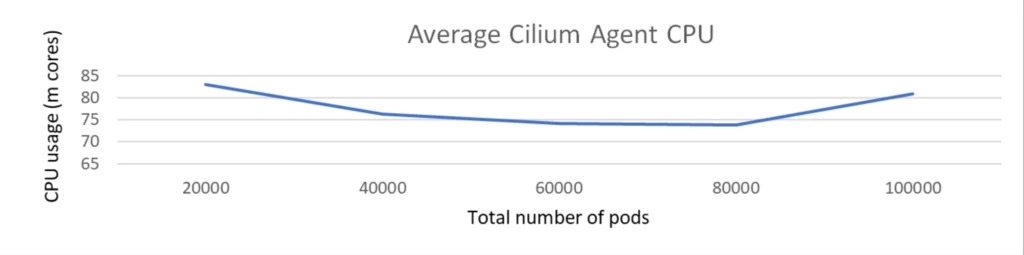

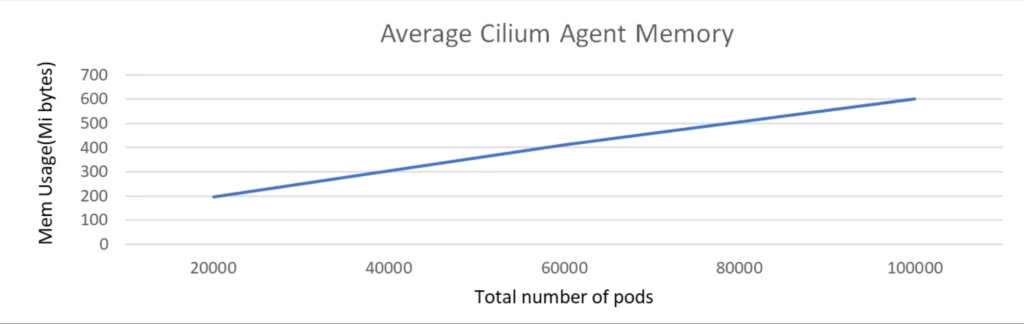

Scale test with 100K pods and no network policies

The scale test was run on a cluster of 1K nodes with a total of 100K pods. No network policies or Kubernetes services were deployed.

During the scale test, as the number of pods increased from 20K to 100K, the CPU usage of the Cilium agent remained consistently low, never exceeding 100 millicores, and its memory usage stayed at around 500 MiB.

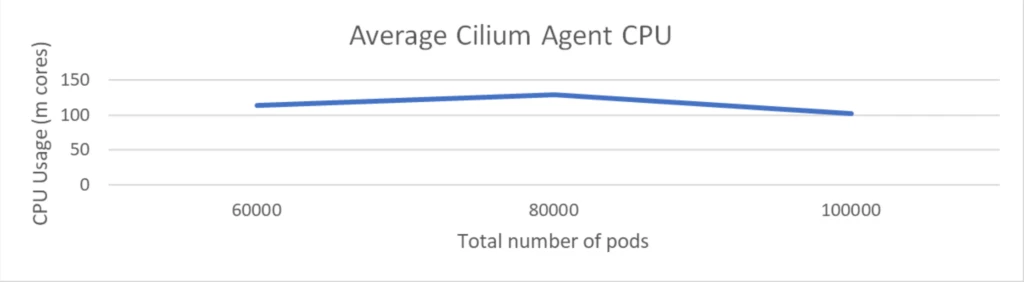

Scale test with 100K pods and 2K network policies

The scale test was run on a cluster of 1K nodes with a total of 100K pods. This time, 2K network policies were deployed, but no Kubernetes services.

The CPU usage of the Cilium agent remained under 150 millicores, and its memory usage was around 1 GiB. This shows that Cilium kept its overhead low even with 2K network policies in place.
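For readers unfamiliar with what such a test deploys, the sketch below generates 2K Kubernetes NetworkPolicy objects as a single JSON `List`. The post does not describe the policies actually used; the `scale-test` namespace, the `app` labels, and the one-client-per-workload ingress rule are hypothetical choices for illustration. The object fields follow the standard `networking.k8s.io/v1` NetworkPolicy schema.

```python
import json

NAMESPACE = "scale-test"  # hypothetical namespace


def network_policy(index):
    """One NetworkPolicy: pods labeled app=workload-<index> accept ingress only from app=client-<index>."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-client-{index}", "namespace": NAMESPACE},
        "spec": {
            "podSelector": {"matchLabels": {"app": f"workload-{index}"}},
            "policyTypes": ["Ingress"],
            "ingress": [
                {"from": [{"podSelector": {"matchLabels": {"app": f"client-{index}"}}}]}
            ],
        },
    }


def policy_list(count):
    """Wrap `count` policies in a v1 List so they can be applied as one document."""
    return {
        "apiVersion": "v1",
        "kind": "List",
        "items": [network_policy(i) for i in range(count)],
    }


if __name__ == "__main__":
    print(json.dumps(policy_list(2000)))
```

Assuming the script is saved as `make_policies.py` (a hypothetical name) and the `scale-test` namespace exists, its output could be applied with `python make_policies.py | kubectl apply -f -`.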

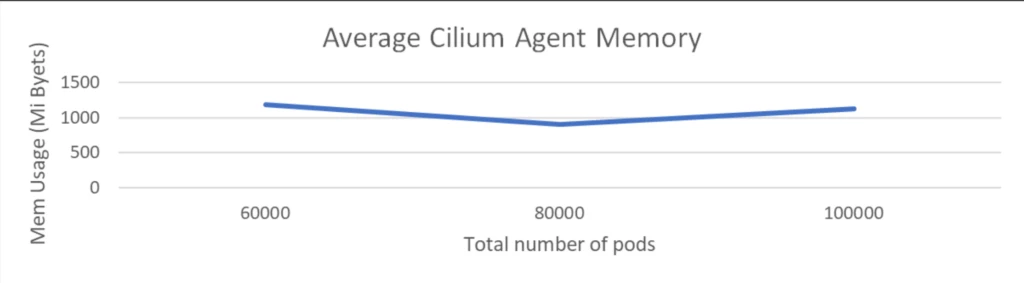

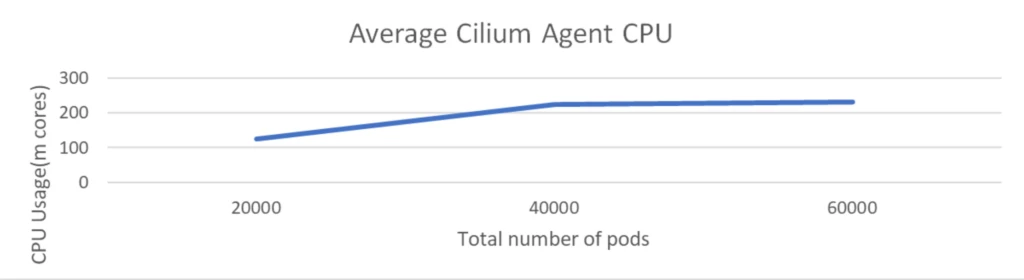

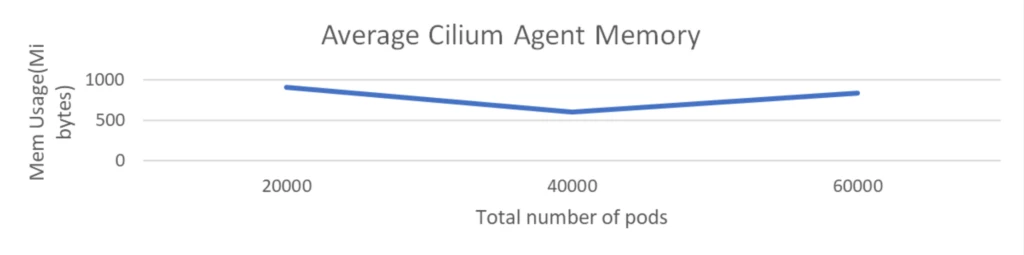

Scale test with 1K services, 60K backend pods, and 2K network policies

This test was run with 1K nodes and 60K pods, along with 2K network policies and 1K services, each backed by 60 pods.
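The shape of this third scenario (1K services with 60 backend pods each, 60K pods in total) can be sketched as 1K Deployment and Service pairs. The names, labels, port, and the `registry.k8s.io/pause:3.9` placeholder image are assumptions, not details from the test; the pause container does not serve HTTP, so a manifest like this exercises endpoint and service programming rather than real request traffic.

```python
import json

NAMESPACE = "scale-test"                         # hypothetical namespace
PLACEHOLDER_IMAGE = "registry.k8s.io/pause:3.9"  # placeholder; does not serve traffic


def service_with_backends(index, replicas=60):
    """Return a Deployment with `replicas` pods and a ClusterIP Service selecting them."""
    labels = {"app": f"svc-{index}"}
    deployment = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": f"backend-{index}", "namespace": NAMESPACE},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": "app", "image": PLACEHOLDER_IMAGE}]},
            },
        },
    }
    service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": f"svc-{index}", "namespace": NAMESPACE},
        "spec": {"selector": labels, "ports": [{"port": 80, "protocol": "TCP"}]},
    }
    return [deployment, service]


def scale_manifest(services=1000, pods_per_service=60):
    """Build a v1 List holding one Deployment and one Service per simulated service."""
    items = []
    for i in range(services):
        items.extend(service_with_backends(i, pods_per_service))
    return {"apiVersion": "v1", "kind": "List", "items": items}


if __name__ == "__main__":
    manifest = scale_manifest()
    total_pods = sum(o["spec"]["replicas"] for o in manifest["items"] if o["kind"] == "Deployment")
    print(f"{len(manifest['items'])} objects, {total_pods} backend pods")
    # json.dumps(manifest) yields a document that `kubectl apply -f` accepts.
```

The example prints only a summary (2,000 objects and 60,000 backend pods), which matches the 1K services × 60 pods arithmetic of the scenario.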

The CPU usage of the Cilium agent remained at around 200 millicores, and its memory usage stayed at around 1 GiB. This shows that Cilium continues to keep overhead low even when a large number of services are deployed. As we saw earlier, service routing through eBPF delivers significant latency gains for applications, and it is good to see that this is achieved with very low overhead at the infrastructure layer.
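To put the Cilium agent figures in perspective, the short calculation below expresses them as a share of one node’s capacity. It uses only the numbers reported above, several of which are upper bounds or approximations, and assumes the D4 v3 node’s 16 GB is 16 GiB (16,384 MiB) and that “around 1 GiB” means 1,024 MiB. It compares against raw capacity; AKS reserves part of each node for system use, so the share of allocatable resources would be somewhat higher.

```python
# Share of one node's raw capacity used by the Cilium agent, using the figures
# reported above. Assumptions: 4 vCPU = 4,000 millicores; 16 GB treated as
# 16 GiB; "around 1 GiB" taken as 1,024 MiB. Allocatable capacity is lower than
# raw capacity, so the share of schedulable resources is somewhat higher.
NODE_CPU_MILLICORES = 4 * 1000
NODE_MEMORY_MIB = 16 * 1024

# scenario -> (agent CPU in millicores, agent memory in MiB)
SCENARIOS = {
    "100K pods, no network policies": (100, 500),
    "100K pods, 2K network policies": (150, 1024),
    "60K pods, 1K services, 2K network policies": (200, 1024),
}


def share_of_node(cpu_millicores, memory_mib):
    """Return (CPU %, memory %) of a single node's raw capacity."""
    return (
        cpu_millicores / NODE_CPU_MILLICORES * 100,
        memory_mib / NODE_MEMORY_MIB * 100,
    )


if __name__ == "__main__":
    for name, (cpu, mem) in SCENARIOS.items():
        cpu_pct, mem_pct = share_of_node(cpu, mem)
        print(f"{name}: {cpu_pct:.2f}% of node CPU, {mem_pct:.2f}% of node memory")
```

Under these assumptions the agent uses about 2.5%, 3.75%, and 5% of node CPU and about 3%, 6.25%, and 6.25% of node memory across the three scenarios.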

Get started with Azure CNI powered by Cilium

To wrap up, as the results above show, Azure CNI with the Cilium eBPF dataplane delivered the best performance in these tests and scales much better with nodes, pods, services, and network policies while keeping overhead low. This offering is now generally available in Azure Kubernetes Service (AKS) and works with both Overlay and VNet modes for CNI. We invite you to try Azure CNI powered by Cilium and experience the benefits in your AKS environment.

To get started today, visit the documentation for Azure CNI powered by Cilium.
