
Bayesian optimization (BayesOpt) is a powerful tool widely used for global optimization tasks, such as hyperparameter tuning, protein engineering, synthetic chemistry, robot learning, and even baking cookies. BayesOpt is a good strategy for these problems because they all involve optimizing black-box functions that are expensive to evaluate. A black-box function's underlying mapping from inputs (configurations of the thing we want to optimize) to outputs (a measure of performance) is unknown. However, we can attempt to understand its inner workings by evaluating the function for different combinations of inputs. Because each evaluation can be computationally expensive, we need to find the best inputs in as few evaluations as possible. BayesOpt works by repeatedly constructing a surrogate model of the black-box function and strategically evaluating the function at the most promising or informative input location, given the information observed so far.

Gaussian processes are popular surrogate models for BayesOpt because they are easy to use, can be updated with new data, and provide a confidence level for each of their predictions. The Gaussian process model constructs a probability distribution over possible functions. This distribution is specified by a mean function (what these possible functions look like on average) and a kernel function (how much these functions can vary across inputs). The performance of BayesOpt depends on whether the confidence intervals predicted by the surrogate model contain the black-box function. Traditionally, experts use domain knowledge to quantitatively define the mean and kernel parameters (e.g., the range or smoothness of the black-box function) to express their expectations about what the black-box function should look like. However, for many real-world applications like hyperparameter tuning, it is very difficult to understand the landscapes of the tuning objectives. Even for experts with relevant experience, it can be challenging to narrow down appropriate model parameters.
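To make the roles of the mean function, the kernel function, and the confidence intervals concrete, here is a minimal sketch of Gaussian process regression in NumPy. It is not the HyperBO code: the constant mean `mean_const`, the squared-exponential kernel `rbf_kernel`, its `lengthscale` and `signal_var` parameters, and the toy sine data are all assumptions chosen for illustration. These are exactly the kinds of quantities an expert would traditionally have to specify by hand.

```python
import numpy as np

def rbf_kernel(xa, xb, lengthscale=1.0, signal_var=1.0):
    """Squared-exponential kernel between two 1-D arrays of inputs (an assumed choice)."""
    sq_dist = (xa[:, None] - xb[None, :]) ** 2
    return signal_var * np.exp(-0.5 * sq_dist / lengthscale**2)

def gp_posterior(x_obs, y_obs, x_query, mean_const=0.0, noise_var=1e-6, **kernel_args):
    """Posterior mean and standard deviation at x_query, given observations."""
    k_oo = rbf_kernel(x_obs, x_obs, **kernel_args) + noise_var * np.eye(len(x_obs))
    k_qo = rbf_kernel(x_query, x_obs, **kernel_args)
    prior_var = np.full(len(x_query), kernel_args.get("signal_var", 1.0))
    # Solve with a Cholesky factor instead of forming an explicit inverse.
    chol = np.linalg.cholesky(k_oo)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_obs - mean_const))
    post_mean = mean_const + k_qo @ alpha
    v = np.linalg.solve(chol, k_qo.T)
    post_var = np.maximum(prior_var - np.sum(v**2, axis=0), 0.0)
    return post_mean, np.sqrt(post_var)

# Toy data: three evaluations of a hypothetical black-box function.
x_obs = np.array([-1.0, 0.0, 1.5])
y_obs = np.sin(x_obs)
x_query = np.linspace(-2.0, 2.0, 9)

mean, std = gp_posterior(x_obs, y_obs, x_query, lengthscale=0.8)
# Approximate 95% confidence interval at each query point.
lower, upper = mean - 1.96 * std, mean + 1.96 * std
```

The interval collapses near the observed inputs and widens away from them. How quickly it widens is governed by the hand-picked `lengthscale`, which illustrates why poorly chosen parameters can leave the true function outside the predicted intervals.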

In “Pre-trained Gaussian processes for Bayesian optimization”, we consider the challenge of hyperparameter optimization for deep neural networks using BayesOpt. We propose Hyper BayesOpt (HyperBO), a highly customizable interface with an algorithm that removes the need for quantifying model parameters for Gaussian processes in BayesOpt. For new optimization problems, experts can simply select previous tasks that are relevant to the current task they are trying to solve. HyperBO pre-trains a Gaussian process model on data from those selected tasks, and automatically defines the model parameters before running BayesOpt. HyperBO enjoys theoretical guarantees on the alignment between the pre-trained model and the ground truth, as well as on the quality of its solutions for black-box optimization. We share strong results of HyperBO both on our new tuning benchmarks for near-state-of-the-art deep learning models and on classic multi-task black-box optimization benchmarks (HPO-B). We also demonstrate that HyperBO is robust to the selection of relevant tasks and has low requirements on the amount of data and tasks for pre-training.

Loss functions for pre-training

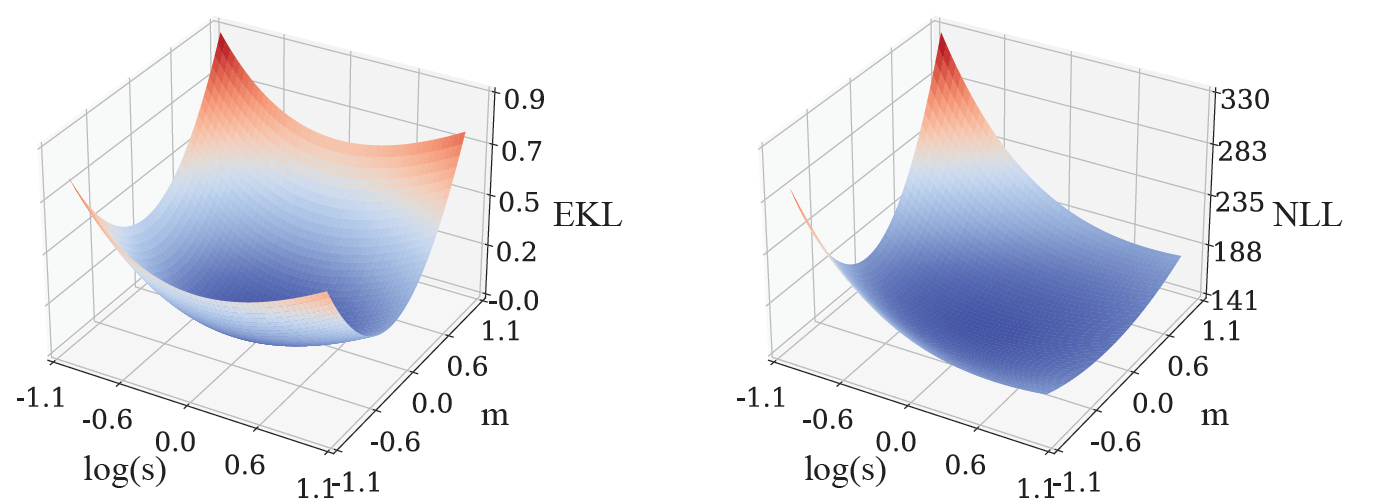

We pre-train a Gaussian process model by minimizing the Kullback–Leibler divergence (a commonly used divergence) between the ground truth model and the pre-trained model. Since the ground truth model is unknown, we cannot directly compute this loss function. To get around this, we introduce two data-driven approximations: (1) Empirical Kullback–Leibler divergence (EKL), which is the divergence between an empirical estimate of the ground truth model and the pre-trained model; (2) Negative log likelihood (NLL), which is the sum of negative log likelihoods of the pre-trained model for all training functions. The computational cost of EKL or NLL scales linearly with the number of training functions. Moreover, stochastic gradient-based methods like Adam can be employed to optimize the loss functions, which further lowers the cost of computation. In well-controlled environments, optimizing EKL and NLL leads to the same result, but their optimization landscapes can be very different. For example, in the simplest case where the function has only one possible input, its Gaussian process model becomes a Gaussian distribution, described by the mean (m) and variance (s). Hence the loss function has only these two parameters, m and s, and we can visualize EKL and NLL as functions of them.
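The one-input case can be written out in a few lines. The sketch below is an illustration under stated assumptions, not the paper's implementation: each training function contributes a single observed value, the empirical estimate of the ground truth is the sample mean and the 1/N sample variance, the KL divergence is taken from the empirical Gaussian to the model, and the numbers in `values` are made up.

```python
import math

def empirical_kl(values, m, s):
    """KL( N(m_hat, s_hat) || N(m, s) ), with m_hat and s_hat estimated from data.

    Direction of the divergence and the 1/N variance estimate are assumptions.
    """
    n = len(values)
    m_hat = sum(values) / n
    s_hat = sum((v - m_hat) ** 2 for v in values) / n
    return 0.5 * (math.log(s / s_hat) + (s_hat + (m_hat - m) ** 2) / s - 1.0)

def negative_log_likelihood(values, m, s):
    """Sum over training functions of -log N(value | m, s), with s the variance."""
    return sum(0.5 * (math.log(2.0 * math.pi * s) + (v - m) ** 2 / s) for v in values)

# Hypothetical observations, one per training function.
values = [0.2, -0.5, 1.1, 0.7, -0.1]
m_hat = sum(values) / len(values)
s_hat = sum((v - m_hat) ** 2 for v in values) / len(values)

ekl_at_estimate = empirical_kl(values, m_hat, s_hat)
nll_at_estimate = negative_log_likelihood(values, m_hat, s_hat)
```

Under these assumptions both losses are minimized at the same point, the sample mean and sample variance, which is one simple instance of the two objectives agreeing in a well-controlled setting. Evaluating either function on a grid of (m, s) values gives the kind of loss surface that can be plotted.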

Pre-training improves Bayesian optimization

In the BayesOpt algorithm, decisions on where to evaluate the black-box function are made iteratively. The decision criteria are based on the confidence levels provided by the Gaussian process, which are updated in each iteration by conditioning on the data points previously acquired by BayesOpt. Intuitively, the updated confidence levels should be just right: neither overly confident nor too unsure, since in either of these two cases BayesOpt cannot make the decisions that an expert would make.
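The iterative procedure described above can be sketched as a short loop. This is a generic illustration rather than HyperBO itself: the upper confidence bound (UCB) rule, the exploration weight `beta`, the zero prior mean, the fixed `lengthscale`, and the quadratic `toy_objective` are all assumptions, and the text above does not specify which decision criterion is used.

```python
import numpy as np

def rbf_kernel(xa, xb, lengthscale=0.2):
    """Squared-exponential kernel with unit prior variance (an assumed choice)."""
    return np.exp(-0.5 * (xa[:, None] - xb[None, :]) ** 2 / lengthscale**2)

def gp_posterior(x_obs, y_obs, x_query, noise_var=1e-6):
    """Zero-mean GP posterior mean and standard deviation at x_query."""
    k_oo = rbf_kernel(x_obs, x_obs) + noise_var * np.eye(len(x_obs))
    k_qo = rbf_kernel(x_query, x_obs)
    mean = k_qo @ np.linalg.solve(k_oo, y_obs)
    var = 1.0 - np.sum(k_qo * np.linalg.solve(k_oo, k_qo.T).T, axis=1)
    return mean, np.sqrt(np.maximum(var, 0.0))

def toy_objective(x):
    """Stand-in for an expensive black-box function; maximized at x = 0.3."""
    return -(x - 0.3) ** 2

def bayes_opt(objective, candidates, num_iters=10, beta=2.0):
    """Maximize `objective` over a finite candidate set with a UCB rule."""
    x_obs = np.array([candidates[0]])
    y_obs = np.array([objective(candidates[0])])
    for _ in range(num_iters):
        # Condition the surrogate on everything observed so far.
        mean, std = gp_posterior(x_obs, y_obs, candidates)
        # Favor inputs that look good (high mean) or are uncertain (high std).
        ucb = mean + beta * std
        x_next = candidates[np.argmax(ucb)]
        x_obs = np.append(x_obs, x_next)
        y_obs = np.append(y_obs, objective(x_next))
    best = np.argmax(y_obs)
    return x_obs[best], y_obs[best]

candidates = np.linspace(0.0, 1.0, 101)
best_x, best_y = bayes_opt(toy_objective, candidates)
```

If `std` is too small everywhere the loop keeps re-evaluating near its current best guess, and if it is too large the mean is ignored and the search degenerates into space filling. Both failure modes come from a surrogate whose confidence levels are miscalibrated.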

In HyperBO, we replace the hand-specified model in traditional BayesOpt with the pre-trained Gaussian process. Under mild conditions and with enough training functions, we can mathematically verify good theoretical properties of HyperBO: (1) Alignment: the pre-trained Gaussian process is guaranteed to be close to the ground truth model when both are conditioned on observed data points; (2) Optimality: HyperBO is guaranteed to find a near-optimal solution to the black-box optimization problem for any function distributed according to the unknown ground truth Gaussian process.

We visualize the Gaussian process (areas shaded in purple are 95% and 99% confidence intervals) conditional on observations (black dots) from an unknown test function (orange line). Compared to traditional BayesOpt without pre-training, the predicted confidence levels in HyperBO capture the unknown test function much better, which is a critical prerequisite for Bayesian optimization.

Empirically, to define the structure of pre-trained Gaussian processes, we choose to use very expressive mean functions modeled by neural networks, and apply well-defined kernel functions on inputs encoded to a higher-dimensional space with neural networks.
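One way to picture this structure is sketched below in NumPy. It is only an illustration under assumptions: the helper names `init_mlp`, `mlp`, `mean_function`, and `kernel_function` are hypothetical, the layer sizes are arbitrary, the squared-exponential kernel stands in for whatever kernel is actually used, and the randomly initialized weights are placeholders for parameters that pre-training would fit by minimizing EKL or NLL.

```python
import numpy as np

def init_mlp(rng, layer_sizes):
    """Random weights for a small fully connected network (placeholder values)."""
    return [
        (rng.normal(scale=1.0 / np.sqrt(n_in), size=(n_in, n_out)), np.zeros(n_out))
        for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
    ]

def mlp(params, x):
    """Forward pass with tanh activations on the hidden layers."""
    h = x
    for w, b in params[:-1]:
        h = np.tanh(h @ w + b)
    w, b = params[-1]
    return h @ w + b

def mean_function(mean_params, x):
    """Mean function modeled by a neural network with a scalar output."""
    return mlp(mean_params, x)[:, 0]

def kernel_function(encoder_params, xa, xb, lengthscale=1.0):
    """Squared-exponential kernel applied to neural-network encodings of the inputs."""
    za, zb = mlp(encoder_params, xa), mlp(encoder_params, xb)
    sq_dist = np.sum((za[:, None, :] - zb[None, :, :]) ** 2, axis=-1)
    return np.exp(-0.5 * sq_dist / lengthscale**2)

rng = np.random.default_rng(0)
input_dim, encoded_dim = 2, 8  # inputs are encoded to a higher-dimensional space
mean_params = init_mlp(rng, [input_dim, 16, 1])
encoder_params = init_mlp(rng, [input_dim, 16, encoded_dim])

# Five hypothetical hyperparameter configurations.
x = rng.uniform(size=(5, input_dim))
prior_mean = mean_function(mean_params, x)
prior_cov = kernel_function(encoder_params, x, x)
```

Because a standard kernel is applied after the encoder, the resulting covariance matrix stays symmetric and positive semi-definite regardless of the network weights, so the model remains a valid Gaussian process while the encoder adds flexibility.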

To evaluate HyperBO on challenging and realistic black-box optimization problems, we created the PD1 benchmark, which contains a dataset for multi-task hyperparameter optimization for deep neural networks. PD1 was developed by training tens of thousands of configurations of near-state-of-the-art deep learning models on popular image and text datasets, as well as a protein sequence dataset. PD1 contains approximately 50,000 hyperparameter evaluations from 24 different tasks (e.g., tuning Wide ResNet on CIFAR100) with roughly 12,000 machine days of computation.

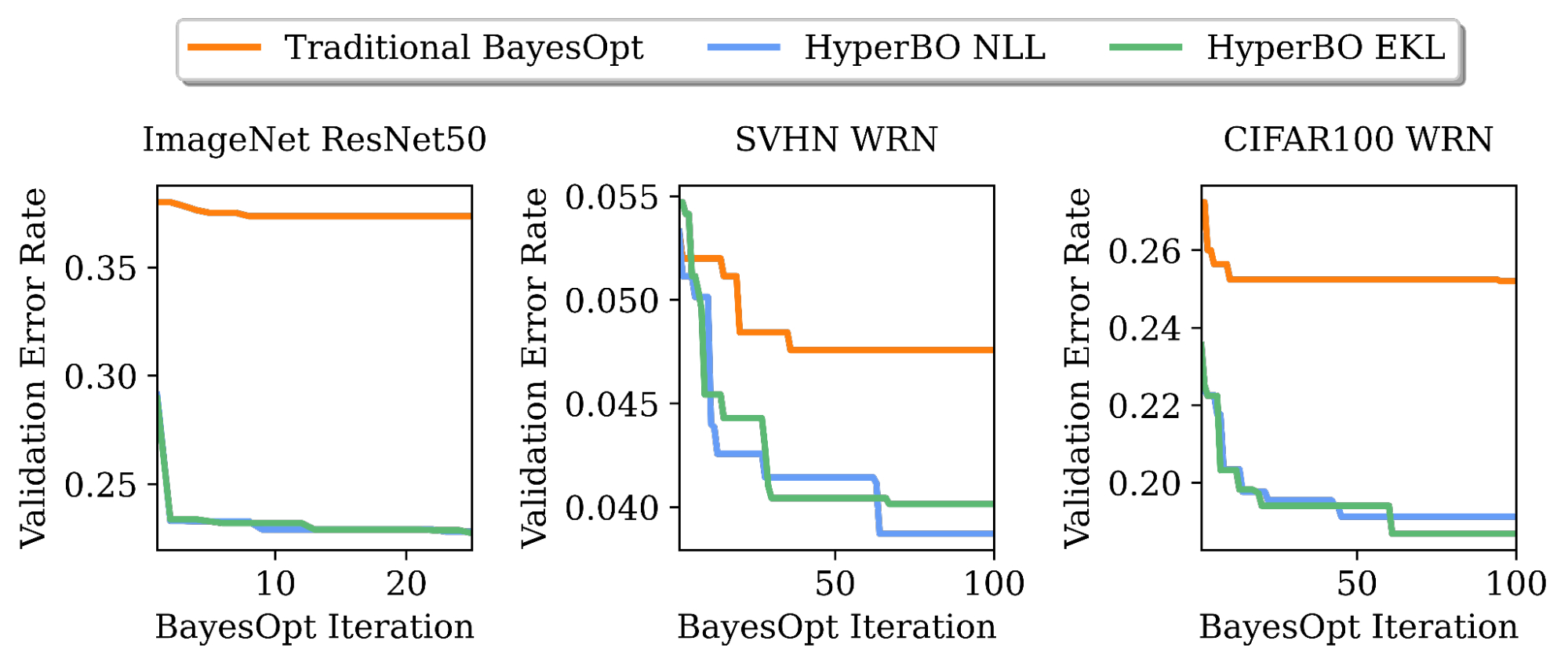

We demonstrate that when pre-training for only a few hours on a single CPU, HyperBO can significantly outperform BayesOpt with carefully hand-tuned models on unseen challenging tasks, including tuning ResNet50 on ImageNet. Even with only ~100 data points per training function, HyperBO can perform competitively against baselines.

Tuning validation error rates of ResNet50 on ImageNet and Wide ResNet (WRN) on the Street View House Numbers (SVHN) dataset and CIFAR100. By pre-training on only ~20 tasks and ~100 data points per task, HyperBO can significantly outperform traditional BayesOpt (with a carefully hand-tuned Gaussian process) on previously unseen tasks.

Conclusion and future work

HyperBO is a framework that pre-trains a Gaussian process and subsequently performs Bayesian optimization with the pre-trained model. With HyperBO, we no longer have to hand-specify the exact quantitative parameters in a Gaussian process. Instead, we only need to identify related tasks and their corresponding data for pre-training. This makes BayesOpt both more accessible and more effective. An important future direction is to enable HyperBO to generalize over heterogeneous search spaces, for which we are developing new algorithms by pre-training a hierarchical probabilistic model.

Acknowledgements

The following members of the Google Research Brain Team conducted this research: Zi Wang, George E. Dahl, Kevin Swersky, Chansoo Lee, Zachary Nado, Justin Gilmer, Jasper Snoek, and Zoubin Ghahramani. We'd like to thank Zelda Mariet and Matthias Feurer for help and consultation on transfer learning baselines. We'd also like to thank Rif A. Saurous for constructive feedback, and Rodolphe Jenatton and David Belanger for feedback on previous versions of the manuscript. In addition, we thank Sharat Chikkerur, Ben Adlam, Balaji Lakshminarayanan, Fei Sha and Eytan Bakshy for comments, and Setareh Ariafar and Alexander Terenin for conversations on animation. Finally, we thank Tom Small for designing the animation for this post.
