
Discussions at chip design conferences rarely get heated. But a year ago at the International Symposium on Physical Design (ISPD), things got out of hand. It was described by observers as a “trainwreck” and an “ambush.” The crux of the clash was whether Google’s AI solution to one of chip design’s thornier problems was really better than those of humans or state-of-the-art algorithms. It pitted established male electronic design automation (EDA) experts against two young female Google computer scientists, and the underlying argument had already led to the firing of one Google researcher.

This year at that same conference, a leader in the field, IEEE Fellow Andrew Kahng, hoped to put an end to the acrimony once and for all. He and colleagues at the University of California, San Diego, delivered what he called “an open and transparent assessment” of Google’s reinforcement learning approach. Using Google’s open-source version of its process, called Circuit Training, and reverse-engineering some parts that weren’t clear enough for Kahng’s team, they set reinforcement learning against a human designer, commercial software, and state-of-the-art academic algorithms. Kahng declined to speak with IEEE Spectrum for this article, but he spoke to engineers last week at ISPD, which was held virtually.

In most cases, Circuit Training was not the winner, but it was competitive. That’s especially notable given that the experiments didn’t allow Circuit Training to use its signature ability: improving its performance by learning from other chip designs.

“Our goal has been clarity of understanding that will allow the community to move on,” he told engineers. Only time will tell whether it worked.

The Hows and the Whens

The problem in question is called placement. Basically, it’s the process of determining where chunks of logic or memory should be placed on a chip in order to maximize the chip’s operating frequency while minimizing its power consumption and the area it takes up. Finding an optimal solution to this puzzle is among the most difficult problems around, with more possible permutations than the game Go.

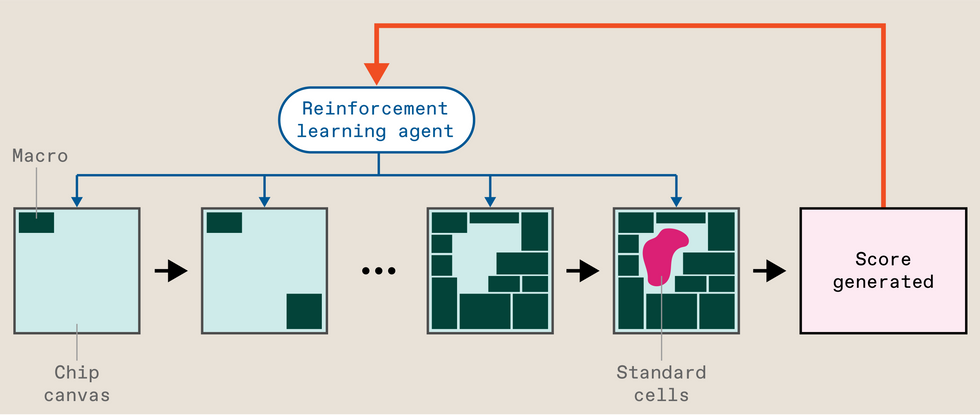

But Go was ultimately defeated by a type of AI called deep reinforcement learning, and that’s just what former Google Brain researchers Azalia Mirhoseini and Anna Goldie applied to the placement problem. The scheme, then called Morpheus, treats placing large pieces of circuitry, called macros, as a game, learning to find an optimal solution. (The locations of macros have an outsize impact on the chip’s characteristics. In Circuit Training and Morpheus, a separate algorithm fills in the gaps with the smaller parts, called standard cells. Other methods use the same process for both macros and standard cells.)

Briefly, this is how it works: The chip’s design file starts as what’s called a netlist, a description of which macros and cells are connected to which others according to what constraints. The standard cells are then collected into clusters to help speed up the training process. Circuit Training then starts placing the macros on the chip “canvas” one at a time. When the last one is down, a separate algorithm fills in the gaps with the standard cells, and the system spits out a quick evaluation of the attempt, encompassing the length of the wiring (longer is worse), how densely packed it is (more dense is worse), and how congested the wiring is (you guessed it, worse). Called proxy cost, this acts like the score would in a reinforcement-learning system that was figuring out how to play a video game. The score is used as feedback to adjust the neural network, and it tries again. Wash, rinse, repeat. When the system has finally learned its task, commercial software does a full evaluation of the complete placement, generating the kind of metrics that chip designers care about, such as area, power consumption, and constraints on frequency.
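To make the idea of a proxy cost concrete, here is a minimal Python sketch. It is not Circuit Training’s code: the function names, the weighted-sum form, the weights, the wire-length normalization, and the crude grid-based density measure are all assumptions chosen for illustration, and congestion is simply passed in as a number rather than estimated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Macro:
    name: str
    width: float
    height: float


def half_perimeter_wirelength(nets, positions):
    """Sum over nets of the half-perimeter of each net's bounding box."""
    total = 0.0
    for net in nets:
        xs = [positions[name][0] for name in net]
        ys = [positions[name][1] for name in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


def peak_density(macros, positions, canvas, grid=2):
    """Occupied fraction of the fullest grid cell.

    Crude assumption: each macro's whole area is charged to the cell
    that contains its center point.
    """
    cell_w, cell_h = canvas[0] / grid, canvas[1] / grid
    used = {}
    for m in macros:
        x, y = positions[m.name]
        col = min(int(x // cell_w), grid - 1)
        row = min(int(y // cell_h), grid - 1)
        used[(col, row)] = used.get((col, row), 0.0) + m.width * m.height
    return max(used.values()) / (cell_w * cell_h)


def proxy_cost(macros, nets, positions, canvas,
               congestion=0.0, weights=(1.0, 0.5, 0.5)):
    """Hypothetical proxy cost: weighted sum of wire length, density, congestion.

    Lower is better. The weights and the normalization are placeholders.
    """
    w_wl, w_den, w_con = weights
    wl = half_perimeter_wirelength(nets, positions)
    wl_norm = wl / ((canvas[0] + canvas[1]) * len(nets))
    return (w_wl * wl_norm
            + w_den * peak_density(macros, positions, canvas)
            + w_con * congestion)


# Toy design: three 2x2 macros on an 8x8 canvas, two two-pin nets.
macros = [Macro("A", 2, 2), Macro("B", 2, 2), Macro("C", 2, 2)]
nets = [("A", "B"), ("A", "C")]
canvas = (8.0, 8.0)
spread = {"A": (1, 1), "B": (3, 1), "C": (7, 7)}
tight = {"A": (1, 1), "B": (3, 1), "C": (3, 3)}

cost_spread = proxy_cost(macros, nets, spread, canvas)
cost_tight = proxy_cost(macros, nets, tight, canvas)
```

In a reinforcement-learning loop, the negative of a score like this would serve as the reward at the end of each placement attempt. The weighted sum illustrates the trade-off: pulling connected macros together shortens wiring but raises density.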

Mirhoseini and Goldie published the results and method of Morpheus in Nature in June 2021, following a seven-month review process. (Kahng was reviewer No. 3.) And the technique was used to design more than one generation of Google’s TPU AI accelerator chips. (So yes, data you used today may have been processed by an AI running on a chip partly designed by an AI. But that’s increasingly the case as EDA vendors such as Cadence and Synopsys go all in on AI-assisted chip design.) In January 2022, they released an open-source version, Circuit Training, on GitHub. But Kahng and others claim that even this version was not complete enough to reproduce the research.

In response to the Nature publication, a separate group of engineers, mostly within Google, began research aimed at what they believed to be a better way of comparing reinforcement learning to established algorithms. But this was no friendly rivalry. According to press reports, its leader, Satrajit Chatterjee, repeatedly undermined Mirhoseini and Goldie personally and was fired for it in 2022.

While Chatterjee was still at Google, his team produced a paper titled “Stronger Baselines,” critical of the research published in Nature. He sought to have it presented at a conference, but after review by an independent resolution committee, Google refused. After his termination, an early version of the paper was leaked via an anonymous Twitter account just ahead of ISPD in 2022, leading to the public confrontation.

Benchmarks, Baselines, and Reproducibility

When IEEE Spectrum spoke with EDA experts following ISPD 2022, detractors had three interrelated concerns: benchmarks, baselines, and reproducibility.

Benchmarks are openly available blocks of circuitry that researchers test their new algorithms on. The benchmarks when Google began its work were already about two decades old, and their relevance to modern chips is debated. University of Calgary professor Laleh Behjat compares it to planning a modern city versus planning a 17th-century one. The infrastructure needed for each is different, she says. However, others point out that there is no way for the research community to progress without everyone testing on the same set of benchmarks.

Instead of the benchmarks available at the time, the Nature paper focused on doing the placement for Google’s TPU, a complex and cutting-edge chip whose design isn’t available to researchers outside of Google. The leaked “Stronger Baselines” work placed TPU blocks but also used the old benchmarks. While Kahng’s new work also did placements for the old benchmarks, the main focus was on three more-modern designs, two of which are newly available, including a multicore RISC-V processor.

Baselines are the state-of-the-art algorithms your new system competes against. Nature compared a human expert using a commercial tool to reinforcement learning and to the leading academic algorithm of the time, RePlAce. Stronger Baselines contended that the Nature work didn’t properly execute RePlAce and that another algorithm, simulated annealing, needed to be compared as well. (To be fair, simulated annealing results appeared in the addendum to the Nature paper.)
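For readers unfamiliar with simulated annealing, the toy Python sketch below shows the general technique applied to a drastically simplified placement problem. It is a textbook illustration, not the implementation used in Nature, Stronger Baselines, or MacroPlacement. The grid of slots, the swap move, the wire-length-only cost, the cooling schedule, and every name in it are assumptions.

```python
import math
import random


def wirelength(nets, slot_of, coords):
    """Half-perimeter wire length of all nets for a slot assignment."""
    total = 0
    for net in nets:
        xs = [coords[slot_of[m]][0] for m in net]
        ys = [coords[slot_of[m]][1] for m in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


def anneal(macros, nets, cols, rows,
           steps=5000, t_start=5.0, t_end=0.01, seed=0):
    """Toy simulated annealing: one macro per grid slot, moves swap two macros.

    Returns (best assignment, best cost, initial cost).
    """
    rng = random.Random(seed)
    coords = [(c, r) for r in range(rows) for c in range(cols)]
    slots = list(range(len(coords)))
    rng.shuffle(slots)
    slot_of = {m: slots[i] for i, m in enumerate(macros)}

    cost = wirelength(nets, slot_of, coords)
    initial_cost = cost
    best, best_cost = dict(slot_of), cost

    # Geometric cooling from t_start down to t_end over `steps` moves.
    cooling = (t_end / t_start) ** (1.0 / steps)
    temp = t_start
    for _ in range(steps):
        a, b = rng.sample(macros, 2)
        slot_of[a], slot_of[b] = slot_of[b], slot_of[a]
        new_cost = wirelength(nets, slot_of, coords)
        delta = new_cost - cost
        # Metropolis rule: always accept improvements, and accept a
        # worse move with a probability that shrinks as temp falls.
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            cost = new_cost
            if cost < best_cost:
                best, best_cost = dict(slot_of), cost
        else:
            slot_of[a], slot_of[b] = slot_of[b], slot_of[a]  # undo the swap
        temp *= cooling
    return best, best_cost, initial_cost


# Six hypothetical macros connected in a chain, placed on a 3x2 grid.
macros = list("ABCDEF")
nets = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "E"), ("E", "F")]
best, best_cost, initial_cost = anneal(macros, nets, cols=3, rows=2)
```

Unlike a pretrained neural network, this search carries nothing over from one design to the next: every run starts from a random placement.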

But it’s the reproducibility bit that Kahng was really focused on. He claims that Circuit Training, as it was posted to GitHub, fell short of allowing an independent group to fully reproduce the procedure. So his team took it upon themselves to reverse engineer what they saw as missing elements and parameters.

Importantly, Kahng’s group publicly documented the progress, code, data sets, and procedure as an example of how such work can enhance reproducibility. In a first, they even managed to persuade EDA software companies Cadence and Synopsys to allow the publication of the high-level scripts used in the experiments. “This was an absolute watershed moment for our field,” said Kahng.

The UCSD effort, which is referred to simply as MacroPlacement, was not meant to be a one-to-one redo of either the Nature paper or the leaked Stronger Baselines work. Besides using modern public benchmarks unavailable in 2020 and 2021, MacroPlacement compares Circuit Training (though not the latest version) to a commercial tool, Cadence’s Innovus concurrent macro placer (CMP), and to a method developed at Nvidia called AutoDMP that’s so new it was only publicly introduced at ISPD 2023 minutes before Kahng spoke.

Reinforcement Learning vs. Everybody

Kahng’s paper reports results on the three modern benchmark designs implemented using two technologies: NanGate45, which is open source, and GF12, which is a commercial GlobalFoundries FinFET process. (The TPU results reported in Nature used even more advanced process technologies.) Kahng’s team measured the same six metrics Mirhoseini and Goldie did in their Nature paper: area, routed wire length, power, two timing metrics, and the previously mentioned proxy cost. (Proxy cost isn’t an actual metric used in production, but it was included to mirror the Nature paper.) The results were mixed.

As it did in the original Nature paper, reinforcement learning beat RePlAce on most metrics for which there was a head-to-head comparison. (RePlAce didn’t produce an answer for the largest of the three designs.) Against a human expert, Circuit Training frequently lost. Versus simulated annealing, the contest was a bit more even.

For these experiments, the big winners were the newest entrants, CMP and AutoDMP, which delivered the best metrics in more cases than any other method.

In the tests meant to match Stronger Baselines, using older benchmarks, both RePlAce and simulated annealing almost always beat reinforcement learning. But these results report only one production metric, wire length, so they don’t present a complete picture, argue Mirhoseini and Goldie.

A Lack of Learning

Understandably, Mirhoseini and Goldie have their own criticisms of the MacroPlacement work, but perhaps the most important is that it didn’t use neural networks that had been pretrained on other chip designs, robbing their method of its main advantage. Circuit Training “unlike any of the other methods presented, can learn from experience, producing better placements more quickly with every problem it sees,” they wrote in an email.

But in the MacroPlacement experiments, each Circuit Training result came from a neural network that had never seen a design before. “This is analogous to resetting AlphaGo before each match…and then forcing it to learn how to play Go from scratch every time it faced a new opponent!”

The results from the Nature paper bear this out, showing that the more blocks of TPU circuitry the system learned from, the better it placed macros for a block of circuitry it had not yet seen. It also showed that a reinforcement-learning system that had been pretrained could produce a placement in 6 hours of the same quality as an untrained one after 40 hours.

New Controversy?

Kahng’s ISPD presentation emphasized a particular discrepancy between the methods described in Nature and those of the open-source version, Circuit Training. Recall that, as a preprocessing step, the reinforcement-learning method gathers up the standard cells into clusters. In Circuit Training, that step is enabled by commercial EDA software that outputs the netlist (what cells and macros are connected to each other) and an initial placement of the components.

According to Kahng, the existence of an initial placement in the Nature work was unknown to him even as a reviewer of the paper. According to Goldie, generating the initial placement, called physical synthesis, is standard industry practice because it guides the creation of the netlist, the input for macro placers. All placement methods in both Nature and MacroPlacement were given the same input netlists.

Does the initial placement somehow give reinforcement learning an advantage? Yes, according to Kahng. His group did experiments that fed three different impossible initial placements into Circuit Training and compared them to a real placement. Routed wire lengths for the impossible versions were between 7 and 10 percent worse.

Mirhoseini and Goldie counter that the initial placement information is used only for clustering standard cells, which reinforcement learning doesn’t place. The macro-placing reinforcement learning portion has no knowledge of the initial placement, they say. What’s more, providing impossible initial placements may be like taking a sledgehammer to the standard cell-clustering step and therefore giving the reinforcement-learning system a false reward signal. “Kahng has introduced a disadvantage, not removed an advantage,” they write.

Kahng suggests that more carefully designed experiments are forthcoming.

Moving On

This dispute has certainly had consequences, most of them negative. Chatterjee is locked in a wrongful-termination lawsuit with Google. Kahng and his team have spent a great deal of time and effort reconstructing work done (perhaps several times) years ago. After spending years fending off criticism from unpublished and unrefereed research, Goldie and Mirhoseini, whose goal was to help improve chip design, have left a field of engineering that has historically struggled to attract female talent. Since August 2022 they’ve been at Anthropic working on reinforcement learning for large language models.

If there’s a bright side, it’s that Kahng’s effort offers a model for open and reproducible research and has added to the store of openly available tools to push this part of chip design forward. That said, Mirhoseini and Goldie’s group at Google had already made an open-source version of their research, which isn’t common for industry research and required some nontrivial engineering work.

Despite all the drama, the use of machine learning generally, and reinforcement learning specifically, in chip design has only spread. More than one group was able to build on Morpheus even before it was made open source. And machine learning is assisting in ever-growing aspects of commercial EDA tools, such as those from Synopsys and Cadence.

But all that good could have happened without the unpleasantness.

This post was corrected on 4 April. CMP was originally incorrectly characterized as being a new tool. On 5 April context and a correction were added about how CT fared against a human and against simulated annealing. A statement regarding the clarity of experiments surrounding the initial placement issue was removed.

To Probe Further:

The MacroPlacement project is extensively documented on GitHub.

Google’s Circuit Training entry on GitHub is here.

Andrew Kahng documents his involvement with the Nature paper here. Nature published the peer-review file in 2022.

Mirhoseini and Goldie’s response to MacroPlacement can be found here.
