
Major recent advances in multiple subfields of machine learning (ML) research, such as computer vision and natural language processing, have been enabled by a shared common approach that leverages large, diverse datasets and expressive models that can absorb all of the data effectively. Although there have been various attempts to apply this approach to robotics, robots have not yet leveraged highly capable models as well as other subfields have.

Several factors contribute to this challenge. First, there is a lack of large-scale and diverse robotic data, which limits a model’s ability to absorb a broad set of robotic experiences. Data collection is particularly expensive and challenging for robotics because dataset curation requires either engineering-heavy autonomous operation or demonstrations collected using human teleoperation. A second factor is the lack of expressive, scalable models that are fast enough for real-time inference, can learn from such datasets, and generalize effectively.

To address these challenges, we propose the Robotics Transformer 1 (RT-1), a multi-task model that tokenizes robot inputs and output actions (e.g., camera images, task instructions, and motor commands) to enable efficient inference at runtime, which makes real-time control feasible. This model is trained on a large-scale, real-world robotics dataset of 130k episodes that cover 700+ tasks, collected using a fleet of 13 robots from Everyday Robots (EDR) over 17 months. We demonstrate that RT-1 exhibits significantly improved zero-shot generalization to new tasks, environments, and objects compared to prior techniques. Moreover, we carefully evaluate and ablate many of the design choices in the model and training set, analyzing the effects of tokenization, action representation, and dataset composition. Finally, we’re open-sourcing the RT-1 code, and hope it will provide a valuable resource for future research on scaling up robot learning.

RT-1 absorbs large amounts of data, including robot trajectories with multiple tasks, objects and environments, resulting in better performance and generalization.

Robotics Transformer (RT-1)

RT-1 is built on a transformer architecture that takes as input a short history of images from a robot’s camera along with task descriptions expressed in natural language, and directly outputs tokenized actions.

RT-1’s architecture is similar to that of a contemporary decoder-only sequence model trained against a standard categorical cross-entropy objective with causal masking. Its key features are image tokenization, action tokenization, and token compression, described below.

Image tokenization: We pass images through an EfficientNet-B3 model pre-trained on ImageNet, and then flatten the resulting 9×9×512 spatial feature map into 81 tokens. The image tokenizer is conditioned on natural language task instructions, and uses FiLM layers initialized to identity to extract task-relevant image features early on.
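The flatten-then-condition step can be sketched in a few lines. This is a toy illustration, not RT-1’s code: it shrinks the 512 channels to 4, and it assumes FiLM is parameterized as `x * (1 + gamma) + beta`, so that zero-valued `gamma` and `beta` make the layer an identity (one common way to get “initialized to identity”). In RT-1 the `gamma`/`beta` vectors would be produced from the instruction embedding; here they are fixed constants, and the helper names are hypothetical.

```python
from typing import List

Vector = List[float]


def flatten_feature_map(fmap: List[List[Vector]]) -> List[Vector]:
    """Turn an H x W x C feature map into H*W tokens of width C (row-major order)."""
    return [list(cell) for row in fmap for cell in row]


def film(tokens: List[Vector], gamma: Vector, beta: Vector) -> List[Vector]:
    """Feature-wise linear modulation applied per channel: x * (1 + gamma) + beta.

    Assumed parameterization: with gamma and beta all zero, the output equals the input.
    """
    return [
        [x * (1.0 + g) + b for x, g, b in zip(token, gamma, beta)]
        for token in tokens
    ]


# Toy 9 x 9 x 4 feature map (RT-1's map is 9 x 9 x 512; 4 channels keep this small).
H, W, C = 9, 9, 4
fmap = [[[float(r * W + c)] * C for c in range(W)] for r in range(H)]
tokens = flatten_feature_map(fmap)
print(len(tokens), len(tokens[0]))  # 81 4

# Hypothetical fixed conditioning; zeros reproduce the identity initialization.
same = film(tokens, [0.0] * C, [0.0] * C)
print(same == tokens)  # True
```

Because the modulation starts as an identity, the pre-trained EfficientNet features pass through unchanged at the beginning of training, and language conditioning is learned on top of them.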

Action tokenization: The robot’s action dimensions are 7 variables for arm movement (x, y, z, roll, pitch, yaw, gripper opening), 3 variables for base movement (x, y, yaw), and an extra discrete variable to switch between three modes: controlling the arm, controlling the base, or terminating the episode. Each action dimension is discretized into 256 bins.
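A minimal sketch of per-dimension discretization, together with the categorical cross-entropy loss that a 256-way token prediction would be trained with. It assumes uniform bins and bounds of [-1, 1] for every continuous dimension; the post does not state RT-1’s actual bounds or binning scheme, and the mode encoding (0/1/2) is hypothetical.

```python
import math
from typing import List

NUM_BINS = 256


def to_bin(value: float, low: float, high: float, num_bins: int = NUM_BINS) -> int:
    """Map a continuous value in [low, high] to one of num_bins uniform bins."""
    clipped = min(max(value, low), high)
    frac = (clipped - low) / (high - low)
    # frac == 1.0 would index bin num_bins, so clamp into the last bin.
    return min(int(frac * num_bins), num_bins - 1)


def from_bin(index: int, low: float, high: float, num_bins: int = NUM_BINS) -> float:
    """Return the bin center, turning a predicted token back into a command."""
    width = (high - low) / num_bins
    return low + (index + 0.5) * width


def cross_entropy(logits: List[float], target: int) -> float:
    """Categorical cross-entropy of one dimension's logits against its target bin."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


# 7 arm dims + 3 base dims, all with assumed bounds [-1, 1].
arm_and_base = [0.0, 0.25, -0.5, 0.1, 0.0, -1.0, 1.0, 0.3, -0.3, 0.0]
mode = 0  # hypothetical encoding: 0 = arm, 1 = base, 2 = terminate
action_tokens = [to_bin(v, -1.0, 1.0) for v in arm_and_base] + [mode]
print(len(action_tokens))  # 11
```

Each time step thus yields 7 + 3 + 1 = 11 action tokens, and decoding a token back to its bin center bounds the quantization error by half a bin width.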

Token compression: The model uses TokenLearner, an element-wise attention module, to adaptively select soft combinations of image tokens based on their impact on learning, so that the image tokens can be compressed. This results in an inference speed-up of over 2.4x.
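The idea of compressing many image tokens into a few soft combinations can be illustrated with a simplified attention pooling. This is a stand-in, not TokenLearner itself: each output token here is a softmax-weighted sum of all input tokens, with weights from dot products against a hypothetical per-output query vector, whereas TokenLearner learns its spatial attention maps with a small network. Reducing 81 tokens to 8 is an illustrative choice.

```python
import math
from typing import List

Vector = List[float]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def soft_token_pooling(tokens: List[Vector], queries: List[Vector]) -> List[Vector]:
    """Compress len(tokens) inputs into len(queries) outputs.

    Each output is a soft (attention-weighted) combination of every input token.
    """
    dim = len(tokens[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, t)) for t in tokens]
        weights = softmax(scores)
        outputs.append([sum(w * t[d] for w, t in zip(weights, tokens)) for d in range(dim)])
    return outputs


# 81 toy image tokens of width 4, compressed to 8 tokens with hypothetical queries.
tokens = [[float(i % 5), 1.0, 0.0, float(i) / 81] for i in range(81)]
queries = [[float(k), 0.0, 0.0, 0.0] for k in range(8)]
compressed = soft_token_pooling(tokens, queries)
print(len(compressed))  # 8
```

Since self-attention cost grows with the square of the sequence length, passing fewer tokens per image to the transformer is what makes this kind of compression pay off at inference time.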

To build a system that could generalize to new tasks and show robustness to different distractors and backgrounds, we collected a large, diverse dataset of robot trajectories. We used 13 EDR robot manipulators, each with a 7-degree-of-freedom arm, a 2-fingered gripper, and a mobile base, to collect 130k episodes over 17 months. We used demonstrations provided by humans through remote teleoperation, and annotated each episode with a textual description of the instruction that the robot just performed. The set of high-level skills represented in the dataset includes picking and placing items, opening and closing drawers, getting items in and out of drawers, placing elongated items upright, knocking objects over, pulling napkins, and opening jars. The resulting dataset includes 130k+ episodes that cover 700+ tasks using many different objects.

Experiments and Results

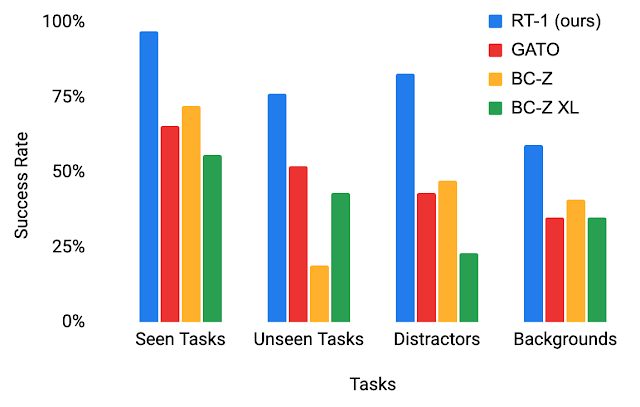

To better understand RT-1’s generalization abilities, we study its performance against three baselines, Gato, BC-Z, and BC-Z XL (i.e., BC-Z with the same number of parameters as RT-1), across four categories:

- Seen tasks performance: performance on tasks seen during training

- Unseen tasks performance: performance on unseen tasks where the skill and object(s) were seen separately in the training set, but combined in novel ways

- Robustness (distractors and backgrounds): performance with distractors (up to 9 distractors and occlusion) and performance with background changes (new kitchen, lighting, background scenes)

- Long-horizon scenarios: execution of SayCan-type natural language instructions in a real kitchen

RT-1 outperforms the baselines by large margins in all four categories, exhibiting high degrees of generalization and robustness.

Performance of RT-1 vs. baselines on evaluation scenarios.

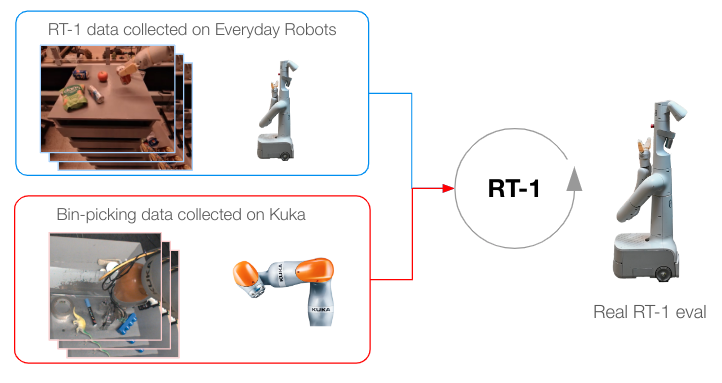

Incorporating Heterogeneous Data Sources

To push RT-1 further, we train it on data gathered from another robot to test (1) whether the model retains its performance on the original tasks when a new data source is presented, and (2) whether the model sees a boost in generalization from new and different data, both of which are desirable for a general robot learning model. Specifically, we use 209k episodes of indiscriminate grasping that were autonomously collected on a fixed-base Kuka arm for the QT-Opt project. We transform the collected data to match the action specifications and bounds of our original dataset collected with EDR, and label every episode with the task instruction “pick anything” (the Kuka dataset doesn’t have object labels). Kuka data is then mixed with EDR data in a 1:2 ratio in every training batch to control for regression in the original EDR skills.
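The relabeling and 1:2 batch mixing described above can be sketched as follows. This is a hypothetical illustration: episodes are plain dicts, the EDR instruction string is invented, sampling is with replacement, and the action-space transformation to EDR bounds is omitted.

```python
import random
from typing import Dict, List, Sequence

Episode = Dict[str, object]


def relabel_kuka(episode: Episode) -> Episode:
    """Kuka episodes lack object labels, so each gets the same generic instruction."""
    return {**episode, "instruction": "pick anything"}


def mixed_batch(edr: Sequence[Episode], kuka: Sequence[Episode],
                batch_size: int, rng: random.Random) -> List[Episode]:
    """Sample one training batch with Kuka and EDR examples in a 1:2 ratio."""
    n_kuka = batch_size // 3      # one third of the batch from Kuka
    n_edr = batch_size - n_kuka   # the remaining two thirds from EDR
    batch = rng.choices(edr, k=n_edr) + [relabel_kuka(e) for e in rng.choices(kuka, k=n_kuka)]
    rng.shuffle(batch)
    return batch


rng = random.Random(0)
edr = [{"source": "edr", "instruction": "open top drawer"}]  # hypothetical episode
kuka = [{"source": "kuka"}]
batch = mixed_batch(edr, kuka, batch_size=30, rng=rng)
print(sum(e["source"] == "kuka" for e in batch))  # 10
```

Fixing the ratio inside every batch, rather than just concatenating the two datasets, keeps the much larger Kuka dataset from crowding out the EDR skills the model already had.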

Training methodology when data has been collected from multiple robots.

Our results indicate that RT-1 is able to acquire new skills by observing other robots’ experiences. In particular, the 22% accuracy seen when training with EDR data alone jumps by almost 2x to 39% when RT-1 is trained on both bin-picking data from Kuka and existing EDR data from robot classrooms, where we collected most of the RT-1 data. When training RT-1 on bin-picking data from Kuka alone and then evaluating it on bin-picking with the EDR robot, we see 0% accuracy. Mixing data from both robots, on the other hand, allows RT-1 to infer the actions of the EDR robot when faced with the states observed by Kuka, without explicit demonstrations of bin-picking on the EDR robot, by taking advantage of experiences collected by Kuka. This presents an opportunity for future work to combine more multi-robot datasets to enhance robot capabilities.
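For reference, the “almost 2x” improvement is the ratio of the two bin-picking accuracies quoted above:

```latex
\frac{39\%}{22\%} \approx 1.77, \qquad 39\% - 22\% = 17 \text{ percentage points}
```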

| Training data | Classroom eval | Bin-picking eval |
|---|---|---|
| Kuka bin-picking data + EDR data | 90% | 39% |
| EDR only data | 92% | 22% |
| Kuka bin-picking only data | 0% | 0% |

RT-1 accuracy evaluation using various training data.

Long-Horizon SayCan Tasks

RT-1’s high performance and generalization abilities can enable long-horizon, mobile manipulation tasks through SayCan. SayCan works by grounding language models in robotic affordances and leveraging few-shot prompting to break down a long-horizon task expressed in natural language into a sequence of low-level skills.
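A simplified sketch of SayCan’s skill-selection rule as described in the SayCan paper: each candidate skill is scored by the product of how useful the language model judges it for the instruction and how likely it is to succeed from the current state (the affordance). The skill names and scores below are invented for illustration; in the system described here, RT-1 would be the policy that executes the selected skill, and selection repeats until a “done” skill wins.

```python
from typing import Dict


def saycan_select(llm_scores: Dict[str, float], affordances: Dict[str, float]) -> str:
    """Return the skill with the highest product of LLM score and affordance score.

    llm_scores: how useful the language model judges each skill for the instruction.
    affordances: how likely each skill is to succeed from the current state.
    """
    combined = {skill: llm_scores[skill] * affordances.get(skill, 0.0) for skill in llm_scores}
    return max(combined, key=combined.get)


# Hypothetical scores for the instruction "bring me a sponge".
step1 = saycan_select(
    {"find a sponge": 0.6, "pick up the sponge": 0.3, "done": 0.1},
    {"find a sponge": 0.9, "pick up the sponge": 0.2, "done": 0.5},
)
step2 = saycan_select(
    {"find a sponge": 0.1, "pick up the sponge": 0.7, "done": 0.2},
    {"find a sponge": 0.9, "pick up the sponge": 0.8, "done": 0.5},
)
print(step1, "->", step2)
```

The affordance term is what grounds the plan: in the first step, picking up the sponge is plausible to the language model but unlikely to succeed before the sponge has been found, so the product favors finding it first.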

SayCan tasks present an ideal evaluation setting to test various features:

- Long-horizon task success falls exponentially with task length, so high manipulation success is important.

- Mobile manipulation tasks require multiple handoffs between navigation and manipulation, so robustness to variations in initial policy conditions (e.g., base position) is essential.

- The number of possible high-level instructions increases combinatorially with the skill breadth of the manipulation primitive.
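The first and third points can be made concrete with two back-of-the-envelope formulas. This sketch assumes each step succeeds independently with the same probability, and counts plans as ordered skill sequences with repetition allowed; both are simplifying assumptions, and the numbers are illustrative rather than measured.

```python
def chain_success(step_success: float, num_steps: int) -> float:
    """Probability that every step succeeds, assuming independent, identical steps."""
    return step_success ** num_steps


def num_plans(num_skills: int, plan_length: int) -> int:
    """Number of distinct ordered skill sequences of a given length (repetition allowed)."""
    return num_skills ** plan_length


for p in (0.9, 0.7):
    print(p, round(chain_success(p, 10), 3))
# 0.9 0.349
# 0.7 0.028
```

Under these assumptions, dropping per-skill success from 90% to 70% cuts the chance of completing a 10-step task by more than a factor of ten, which is why per-step manipulation success matters so much for long-horizon tasks.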

We evaluate SayCan with RT-1 and two other baselines (SayCan with Gato and SayCan with BC-Z) in two real kitchens. Below, “Kitchen2” constitutes a much more challenging generalization scene than “Kitchen1”. The mock kitchen used to gather most of the training data was modeled after Kitchen1.

SayCan with RT-1 achieves a 67% execution success rate in Kitchen1, outperforming the other baselines. Due to the generalization difficulty presented by the new, unseen kitchen, the performance of SayCan with Gato and SayCan with BC-Z falls sharply, while RT-1 does not show a visible drop.

| Method | Kitchen1 planning (%) | Kitchen1 execution (%) | Kitchen2 planning (%) | Kitchen2 execution (%) |
|---|---|---|---|---|
| Original SayCan | 73 | 47 | – | – |
| SayCan w/ Gato | 87 | 33 | 87 | 0 |
| SayCan w/ BC-Z | 87 | 53 | 87 | 13 |
| SayCan w/ RT-1 | 87 | 67 | 87 | 67 |

The following video shows several example PaLM-SayCan-RT1 executions of long-horizon tasks in multiple real kitchens.

Conclusion

The RT-1 Robotics Transformer is a simple and scalable action-generation model for real-world robotics tasks. It tokenizes all inputs and outputs, and uses a pre-trained EfficientNet model with early language fusion and a TokenLearner module for compression. RT-1 shows strong performance across hundreds of tasks, as well as extensive generalization abilities and robustness in real-world settings.

As we explore future directions for this work, we hope to scale the number of robot skills faster by developing methods that allow non-experts to train the robot with directed data collection and model prompting. We also look forward to improving robotics transformers’ reaction speeds and context retention with scalable attention and memory. To learn more, check out the paper, the open-sourced RT-1 code, and the project website.

Acknowledgements

This work was performed in collaboration with Anthony Brohan, Noah Brown, Justice Carbajal, Yevgen Chebotar, Joseph Dabis, Chelsea Finn, Keerthana Gopalakrishnan, Karol Hausman, Alex Herzog, Jasmine Hsu, Julian Ibarz, Brian Ichter, Alex Irpan, Tomas Jackson, Sally Jesmonth, Nikhil Joshi, Ryan Julian, Dmitry Kalashnikov, Yuheng Kuang, Isabel Leal, Kuang-Huei Lee, Sergey Levine, Yao Lu, Utsav Malla, Deeksha Manjunath, Igor Mordatch, Ofir Nachum, Carolina Parada, Jodilyn Peralta, Emily Perez, Karl Pertsch, Jornell Quiambao, Kanishka Rao, Michael Ryoo, Grecia Salazar, Pannag Sanketi, Kevin Sayed, Jaspiar Singh, Sumedh Sontakke, Austin Stone, Clayton Tan, Huong Tran, Vincent Vanhoucke, Steve Vega, Quan Vuong, Fei Xia, Ted Xiao, Peng Xu, Sichun Xu, Tianhe Yu, and Brianna Zitkovich.
