
Ad technology providers widely use machine learning (ML) models to predict and present users with the most relevant ads, and to measure the effectiveness of those ads. With increasing focus on online privacy, there is an opportunity to identify ML algorithms that have better privacy-utility trade-offs. Differential privacy (DP) has emerged as a popular framework for developing ML algorithms responsibly with provable privacy guarantees. It has been extensively studied in the privacy literature, deployed in industrial applications, and employed by the U.S. Census. Intuitively, the DP framework enables ML models to learn population-wide properties while protecting user-level information.

When training ML models, algorithms take a dataset as their input and produce a trained model as their output. Stochastic gradient descent (SGD) is a commonly used non-private training algorithm that computes the average gradient from a random subset of examples (called a mini-batch) and uses it to indicate the direction in which the model should move to fit that mini-batch. The most widely used DP training algorithm in deep learning is an extension of SGD called DP stochastic gradient descent (DP-SGD).

DP-SGD includes two additional steps: (1) before averaging, the gradient of each example is norm-clipped if the L2 norm of the gradient exceeds a predefined threshold; and (2) Gaussian noise is added to the average gradient before updating the model. DP-SGD can be adapted to any existing deep learning pipeline with minimal changes by replacing the optimizer, such as SGD or Adam, with its DP variant. However, applying DP-SGD in practice can lead to a significant loss of model utility (i.e., accuracy) with large computational overheads. As a result, various research efforts attempt to apply DP-SGD training to more practical, large-scale deep learning problems. Recent studies have also shown promising DP training results on computer vision and natural language processing problems.
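The two extra steps can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation used in the study: the function names, the list-based gradient representation, and the convention that the noise standard deviation on the average gradient equals `noise_multiplier * clip_norm / batch_size` are assumptions made for the example.

```python
import math
import random


def clip_by_l2_norm(grad, clip_norm):
    """Scale grad down so that its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0.0 else 1.0
    return [g * scale for g in grad]


def dp_sgd_step(params, per_example_grads, clip_norm, noise_multiplier,
                learning_rate, rng):
    """One DP-SGD update: clip each gradient, average, add Gaussian noise."""
    batch_size = len(per_example_grads)
    # Step 1: norm-clip every per-example gradient before averaging.
    clipped = [clip_by_l2_norm(g, clip_norm) for g in per_example_grads]
    # Step 2: add Gaussian noise to the average gradient (assumed convention).
    noise_std = noise_multiplier * clip_norm / batch_size
    new_params = []
    for i, p in enumerate(params):
        avg = sum(g[i] for g in clipped) / batch_size
        noisy = avg + rng.gauss(0.0, noise_std)
        new_params.append(p - learning_rate * noisy)
    return new_params


rng = random.Random(0)
grads = [[3.0, 4.0], [0.3, 0.4]]  # L2 norms 5.0 and 0.5; only the first is clipped
params = dp_sgd_step([0.0, 0.0], grads, clip_norm=1.0,
                     noise_multiplier=1.0, learning_rate=0.1, rng=rng)
print(params)
```

Setting `noise_multiplier=0.0` recovers plain SGD on the clipped gradients, which is a convenient way to separate the effect of clipping from the effect of noise.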

In “Private Ad Modeling with DP-SGD”, we present a systematic study of DP-SGD training on ads modeling problems, which pose unique challenges compared to vision and language tasks. Ads datasets often have a high imbalance between data classes and consist of categorical features with large numbers of unique values, leading to models that have large embedding layers and highly sparse gradient updates. With this study, we demonstrate that DP-SGD allows ad prediction models to be trained privately with a much smaller utility gap than previously expected, even in the high privacy regime. Moreover, we demonstrate that with proper implementation, the computation and memory overhead of DP-SGD training can be significantly reduced.

Evaluation

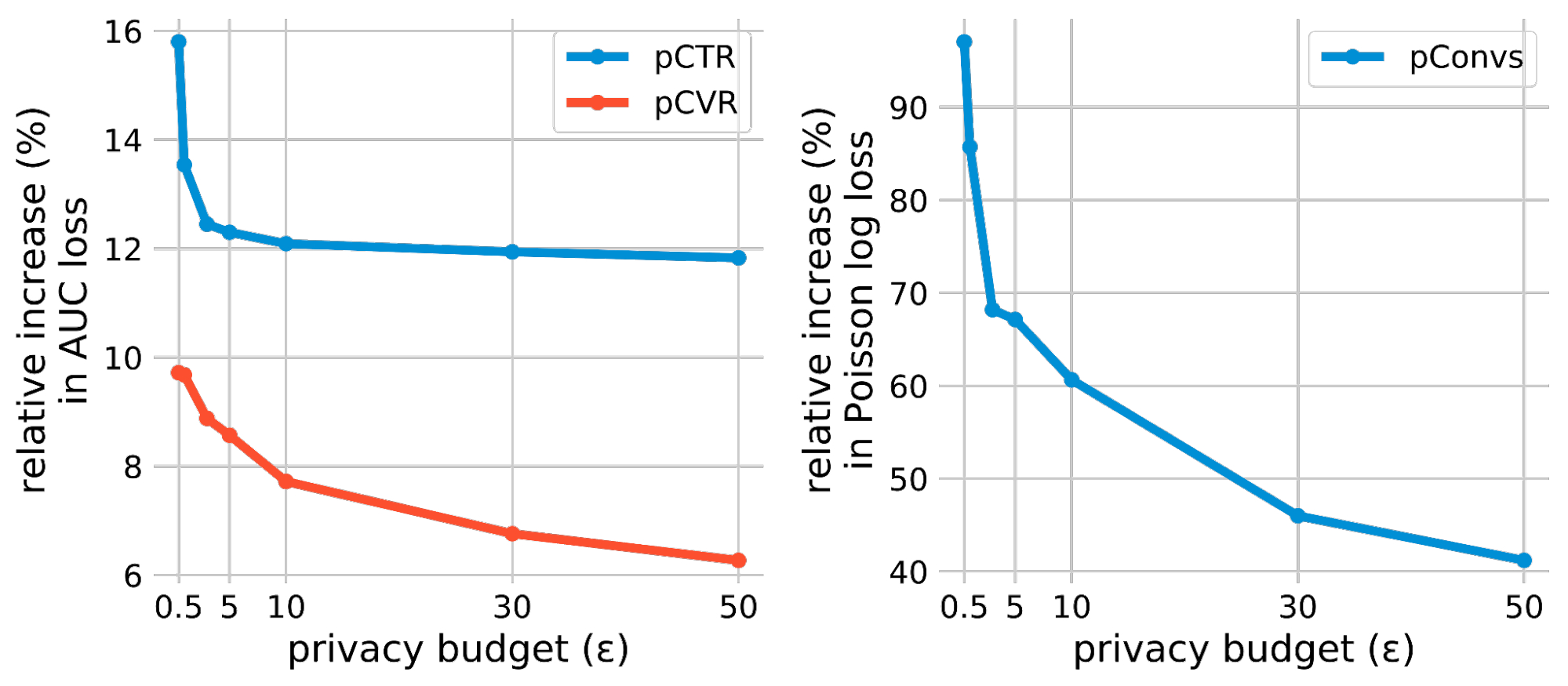

We evaluate private training using three ads prediction tasks: (1) predicting the click-through rate (pCTR) for an ad, (2) predicting the conversion rate (pCVR) for an ad after a click, and (3) predicting the expected number of conversions (pConvs) after an ad click. For pCTR, we use the Criteo dataset, which is a widely used public benchmark for pCTR models. We evaluate pCVR and pConvs using internal Google datasets. pCTR and pCVR are binary classification problems trained with the binary cross-entropy loss, and we report the test AUC loss (i.e., 1 – AUC). pConvs is a regression problem trained with Poisson log loss (PLL), and we report the test PLL.
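As a rough illustration of the two reported metrics, the sketch below computes the AUC loss by comparing every positive/negative pair, and a Poisson log loss as the mean Poisson negative log-likelihood. The function names and toy inputs are hypothetical, and dropping the constant log(label!) term from the PLL is an assumption; the study may use a different normalization.

```python
import math


def auc_loss(labels, scores):
    """Return 1 - AUC, with AUC computed over all positive/negative pairs."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    # Count correctly ordered pairs; ties count as half a win.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in positives for n in negatives)
    return 1.0 - wins / (len(positives) * len(negatives))


def poisson_log_loss(labels, predictions):
    """Mean Poisson negative log-likelihood, dropping the log(label!) term."""
    return sum(pred - y * math.log(pred)
               for y, pred in zip(labels, predictions)) / len(labels)


# Toy binary task: 3 of the 4 positive/negative pairs are ordered correctly.
print(auc_loss([1, 0, 1, 0], [0.9, 0.2, 0.4, 0.6]))  # 0.25
# Toy count-regression task with strictly positive predicted rates.
print(poisson_log_loss([0, 2, 1], [0.5, 1.5, 1.0]))
```

The pairwise AUC is quadratic in the number of examples, so it is only suitable for small illustrations; a rank-based computation would be used on real test sets.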

For each task, we evaluate the privacy-utility trade-off of DP-SGD by the relative increase in the loss of privately trained models under various privacy budgets (i.e., privacy loss). The privacy budget is characterized by a scalar ε, where a lower ε indicates higher privacy. To measure the utility gap between private and non-private training, we compute the relative increase in loss compared to the non-private model (equivalent to ε = ∞). Our main observation is that on all three common ad prediction tasks, the relative loss increase could be made much smaller than previously expected, even for very high privacy (e.g., ε ≤ 1) regimes.
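Written out, the utility gap described above takes the following form. The symbols are introduced here for illustration only: $\mathcal{L}_{\varepsilon}$ is the test loss of the model trained with privacy budget ε, and $\mathcal{L}_{\infty}$ is the test loss of the non-private baseline.

$$
\text{relative loss increase}(\varepsilon) = \frac{\mathcal{L}_{\varepsilon} - \mathcal{L}_{\infty}}{\mathcal{L}_{\infty}}
$$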

DP-SGD results on three ads prediction tasks. The relative increase in loss is computed against the non-private baseline (i.e., ε = ∞) model of each task.

Improved Privacy Accounting

Privacy accounting estimates the privacy budget (ε) for a DP-SGD trained model, given the Gaussian noise multiplier and other training hyperparameters. Rényi Differential Privacy (RDP) accounting has been the most widely used approach in DP-SGD since the original paper. We explore the latest advances in accounting methods to provide tighter estimates. Specifically, we use connect-the-dots for accounting based on the privacy loss distribution (PLD). The following figure compares this improved accounting with the classical RDP accounting and demonstrates that PLD accounting improves the AUC on the pCTR dataset for all privacy budgets (ε).


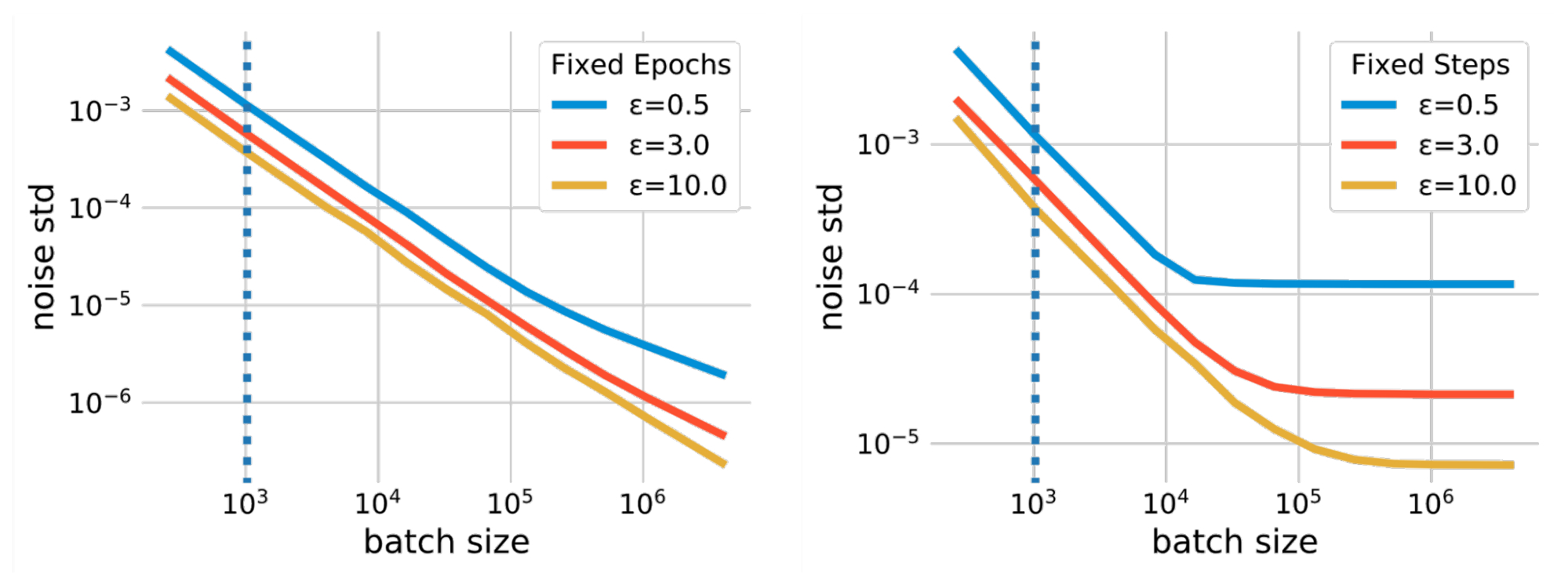

Large Batch Training

Batch size is a hyperparameter that affects different aspects of DP-SGD training. For instance, increasing the batch size could reduce the amount of noise added during training under the same privacy guarantee, which reduces the training variance. The batch size also affects the privacy guarantee via other parameters, such as the subsampling probability and the number of training steps. There is no simple formula to quantify the impact of batch sizes. However, the relationship between batch size and the noise scale is quantified using privacy accounting, which calculates the required noise scale (measured in terms of the standard deviation) under a given privacy budget (ε) when using a particular batch size. The figure below plots such relations in two different scenarios. The first scenario uses fixed epochs, where we fix the number of passes over the training dataset. In this case, the number of training steps is reduced as the batch size increases, which could result in undertraining the model. The second, more straightforward scenario uses fixed training steps (fixed steps).
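The quantities that move with the batch size can be tabulated with simple arithmetic. The sketch below is an illustration under stated assumptions: the function name, the dataset size of 1,000,000 examples, and the noise convention (standard deviation on the average gradient equal to `noise_multiplier * clip_norm / batch_size`) are hypothetical. It does not compute ε; the noise multiplier needed for a target ε has to come from a privacy accountant, which is not modeled here.

```python
def dp_sgd_schedule(dataset_size, batch_size, epochs, noise_multiplier, clip_norm):
    """Return the batch-size-dependent quantities of a fixed-epochs DP-SGD run."""
    return {
        # Probability that a given example lands in a mini-batch.
        "sampling_probability": batch_size / dataset_size,
        # With fixed epochs, larger batches mean fewer training steps.
        "steps": epochs * dataset_size // batch_size,
        # Noise on the averaged gradient shrinks as the batch grows
        # (for a fixed noise multiplier and clipping threshold).
        "noise_std_on_average_gradient": noise_multiplier * clip_norm / batch_size,
    }


# Hypothetical dataset size; the two batch sizes are the ones compared for pCTR.
small = dp_sgd_schedule(1_000_000, 1_024, epochs=1, noise_multiplier=1.0, clip_norm=1.0)
large = dp_sgd_schedule(1_000_000, 16_384, epochs=1, noise_multiplier=1.0, clip_norm=1.0)
print(small)
print(large)
```

In the fixed-steps scenario, `steps` would be held constant instead, so a larger batch increases the number of passes over the data.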

In addition to allowing a smaller noise scale, larger batch sizes also allow us to use a larger threshold for norm clipping each per-example gradient, as required by DP-SGD. Since the norm clipping step introduces biases in the average gradient estimation, this relaxation mitigates such biases. The table below compares the results on the Criteo dataset for pCTR with a standard batch size (1,024 examples) and a large batch size (16,384 examples), combined with large clipping and increased training epochs. We observe that large batch training significantly improves the model utility. Note that large clipping is only possible with large batch sizes. Large batch training was also found to be essential for DP-SGD training in language and computer vision domains.

Fast per-example Gradient Norm Computation

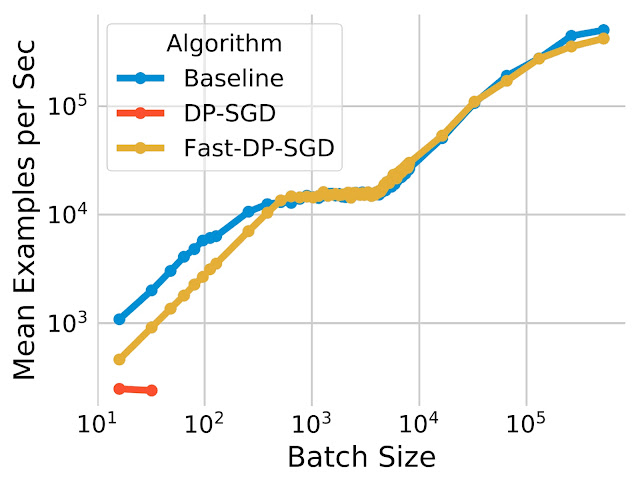

The per-example gradient norm calculation used for DP-SGD often causes computational and memory overhead. This calculation removes the efficiency of standard backpropagation on accelerators (like GPUs), which compute the average gradient for a batch without materializing each per-example gradient. However, for certain neural network layer types, an efficient gradient norm computation algorithm allows the per-example gradient norm to be computed without the need to materialize the per-example gradient vector. We also note that this algorithm can efficiently handle neural network models that rely on embedding layers and fully connected layers for solving ads prediction problems. Combining the two observations, we use this algorithm to implement a fast version of the DP-SGD algorithm. We show that Fast-DP-SGD on pCTR can handle a similar number of training examples and the same maximum batch size on a single GPU core as a non-private baseline.
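To make the idea concrete for one layer type, consider a single fully connected layer without bias, z = W x. For one example, the gradient of the loss with respect to W is the outer product of the output gradient dz and the input x, so its norm equals ‖dz‖ · ‖x‖ and the full gradient matrix never has to be built. The sketch below checks this identity on toy values; it illustrates the general idea only and is not the algorithm from the paper, which also covers embedding layers. All names are hypothetical.

```python
import math


def naive_grad_norm(x, dz):
    """Materialize the per-example weight gradient outer(dz, x), then take its norm."""
    grad = [[d * xi for xi in x] for d in dz]
    return math.sqrt(sum(v * v for row in grad for v in row))


def fast_grad_norm(x, dz):
    """Same norm without materializing the gradient: ||dz|| * ||x||."""
    return math.sqrt(sum(d * d for d in dz)) * math.sqrt(sum(xi * xi for xi in x))


x = [1.0, 2.0, 2.0]  # layer input for one example (L2 norm 3)
dz = [3.0, 4.0]      # gradient of the loss w.r.t. the layer output (L2 norm 5)
print(naive_grad_norm(x, dz), fast_grad_norm(x, dz))  # 15.0 15.0
```

The naive version needs memory proportional to the number of weights for every example, while the fast version only needs the input and output-gradient vectors, which is why the saving grows with layer size and batch size.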

The computation efficiency of our fast implementation (Fast-DP-SGD) on pCTR.

Compared to the non-private baseline, the training throughput is similar, except with very small batch sizes. We also compare it with an implementation utilizing JAX Just-in-Time (JIT) compilation, which is already much faster than vanilla DP-SGD implementations. Our implementation is not only faster, but also more memory efficient. The JIT-based implementation cannot handle batch sizes larger than 64, while our implementation can handle batch sizes up to 500,000. Memory efficiency is important for enabling large-batch training, which was shown above to be important for improving utility.

Conclusion

We have shown that it is possible to train private ads prediction models using DP-SGD that have a small utility gap compared to non-private baselines, with minimal overhead for both computation and memory consumption. We believe there is room for even further reduction of the utility gap through techniques such as pre-training. Please see the paper for full details of the experiments.

Acknowledgements

This work was carried out in collaboration with Carson Denison, Badih Ghazi, Pritish Kamath, Ravi Kumar, Pasin Manurangsi, Amer Sinha, and Avinash Varadarajan. We thank Silvano Bonacina and Samuel Ieong for many helpful discussions.
