
Picture two teams squaring off on a soccer field. The players can cooperate to achieve an objective, and compete against other players with conflicting interests. That’s how the game works.

Creating artificial intelligence agents that can learn to compete and cooperate as effectively as humans remains a thorny problem. A key challenge is enabling AI agents to anticipate the future behaviors of other agents when they are all learning simultaneously.

Because of the complexity of this problem, current approaches tend to be myopic; the agents can only guess the next few moves of their teammates or competitors, which leads to poor performance in the long run.

Researchers from MIT, the MIT-IBM Watson AI Lab, and elsewhere have developed a new approach that gives AI agents a farsighted perspective. Their machine-learning framework enables cooperative or competitive AI agents to consider what other agents will do as time approaches infinity, not just over the next few steps. The agents then adapt their behaviors accordingly to influence other agents’ future behaviors and arrive at an optimal, long-term solution.

This framework could be used by a group of autonomous drones working together to find a lost hiker in a thick forest, or by self-driving cars that strive to keep passengers safe by anticipating the future moves of other vehicles on a busy highway.

“When AI agents are cooperating or competing, what matters most is when their behaviors converge at some point in the future. There are a lot of transient behaviors along the way that don’t matter very much in the long run. Reaching this converged behavior is what we really care about, and we now have a mathematical way to enable that,” says Dong-Ki Kim, a graduate student in the MIT Laboratory for Information and Decision Systems (LIDS) and lead author of a paper describing this framework.

The senior author is Jonathan P. How, the Richard C. Maclaurin Professor of Aeronautics and Astronautics and a member of the MIT-IBM Watson AI Lab. Co-authors include others at the MIT-IBM Watson AI Lab, IBM Research, Mila-Quebec Artificial Intelligence Institute, and Oxford University. The research will be presented at the Conference on Neural Information Processing Systems.

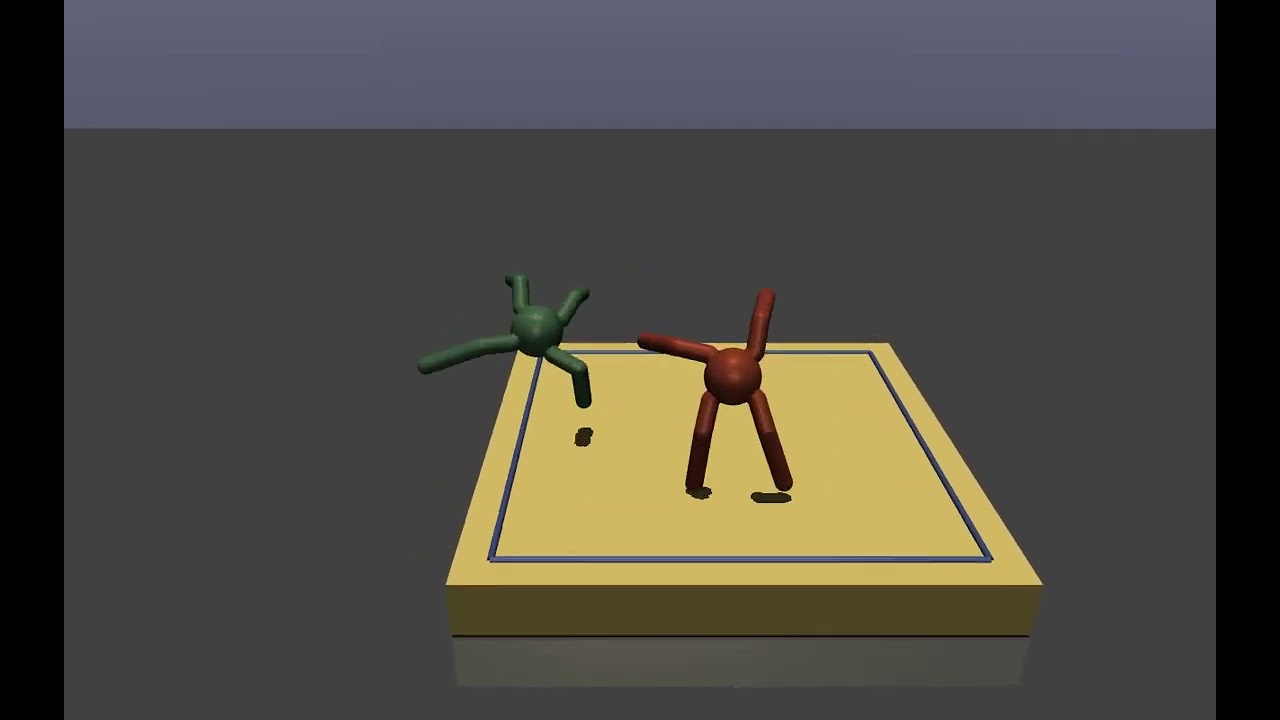

In this demo video, the red robot, which has been trained using the researchers’ machine-learning system, is able to defeat the green robot by learning more effective behaviors that take advantage of the constantly changing strategy of its opponent.

More agents, more problems

The researchers focused on a problem known as multiagent reinforcement learning. Reinforcement learning is a form of machine learning in which an AI agent learns by trial and error. Researchers give the agent a reward for “good” behaviors that help it achieve a goal. The agent adapts its behavior to maximize that reward until it eventually becomes an expert at a task.
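The trial-and-error loop described above can be illustrated with a minimal single-agent sketch. This is a textbook epsilon-greedy bandit, not the researchers' method; the function name `train_bandit`, the reward probabilities, and the settings are all assumptions chosen for illustration.

```python
import random

def train_bandit(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Learn the value of each action by trial and error (epsilon-greedy)."""
    rng = random.Random(seed)
    values = [0.0] * len(reward_probs)  # running estimate of each action's reward
    counts = [0] * len(reward_probs)    # how many times each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random action.
            action = rng.randrange(len(reward_probs))
        else:
            # Exploit: pick the action that currently looks best.
            action = max(range(len(values)), key=values.__getitem__)
        # Hypothetical environment: reward 1 with the action's hidden probability.
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Incremental mean update of the action's estimated value.
        values[action] += (reward - values[action]) / counts[action]
    return values, counts

# Three hypothetical actions; the third is rewarded most often.
values, counts = train_bandit([0.2, 0.5, 0.8])
best_action = max(range(len(values)), key=values.__getitem__)
print("estimated values:", values)
print("preferred action:", best_action)
```

The agent is never told which action is best; it discovers this from the rewards it receives, which is the sense in which it "becomes an expert."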

But when many cooperative or competing agents are learning simultaneously, things become increasingly complex. As agents consider more future steps of their fellow agents, and how their own behavior influences others, the problem soon requires far too much computational power to solve efficiently. This is why other approaches focus only on the short term.

“The AIs really want to think about the end of the game, but they don’t know when the game will end. They need to think about how to keep adapting their behavior into infinity so they can win at some far time in the future. Our paper essentially proposes a new objective that enables an AI to think about infinity,” says Kim.

But since it is impossible to plug infinity into an algorithm, the researchers designed their system so agents focus on a future point where their behavior will converge with that of other agents, known as equilibrium. An equilibrium point determines the long-term performance of agents, and multiple equilibria can exist in a multiagent scenario. Therefore, an effective agent actively influences the future behaviors of other agents in such a way that they reach a desirable equilibrium from the agent’s perspective. If all agents influence each other, they converge to a general concept that the researchers call an “active equilibrium.”
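One way to see why the converged behavior dominates is to look at the average reward per step over longer and longer horizons. The sketch below is only an illustration of that arithmetic under assumed numbers (ten poorly rewarded early steps followed by a fixed reward of 1.0 once behavior has converged); the function `average_reward` is hypothetical and is not the objective defined in the paper.

```python
def average_reward(transient_rewards, converged_reward, horizon):
    """Mean per-step reward over `horizon` steps.

    The agent first collects `transient_rewards`, then receives
    `converged_reward` on every remaining step.
    """
    if horizon <= 0:
        raise ValueError("horizon must be positive")
    head = transient_rewards[:horizon]   # transient steps that fit in the horizon
    tail_steps = horizon - len(head)     # steps spent at the converged behavior
    return (sum(head) + converged_reward * tail_steps) / horizon

# Hypothetical numbers: 10 early steps with no reward, then 1.0 per step.
transient = [0.0] * 10
for horizon in (20, 200, 20000):
    print(horizon, average_reward(transient, 1.0, horizon))
```

As the horizon grows, the early steps contribute less and less to the average, so the value approaches the reward earned at the converged behavior.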

The machine-learning framework they developed, known as FURTHER (which stands for FUlly Reinforcing acTive influence witH averagE Reward), enables agents to learn how to adapt their behaviors as they interact with other agents to achieve this active equilibrium.

FURTHER does this using two machine-learning modules. The first, an inference module, enables an agent to guess the future behaviors of other agents and the learning algorithms they use, based solely on their prior actions.

This information is fed into the reinforcement learning module, which the agent uses to adapt its behavior and influence other agents in a way that maximizes its reward.
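The division of labor between the two modules can be sketched with a deliberately simplified stand-in. Here the "inference" step just counts how often the other agent took each action, and the "decision" step picks the action with the best expected payoff against that prediction. FURTHER's actual modules are learned models that also reason about how the other agents learn and how to influence them, which this sketch does not attempt; the payoff table and the names `infer_other_policy` and `choose_action` are hypothetical.

```python
from collections import Counter

# Hypothetical payoff table for the agent: PAYOFF[my_action][other_action].
PAYOFF = {
    "left":  {"left": 1.0, "right": 0.0},
    "right": {"left": 0.0, "right": 2.0},
}

def infer_other_policy(observed_actions, actions=("left", "right")):
    """Inference step: estimate the other agent's action probabilities
    from nothing but its past actions."""
    total = len(observed_actions)
    if total == 0:
        # No observations yet: assume every action is equally likely.
        return {a: 1.0 / len(actions) for a in actions}
    counts = Counter(observed_actions)
    return {a: counts[a] / total for a in actions}

def choose_action(predicted_policy):
    """Decision step: pick the action with the highest expected payoff
    under the predicted behavior of the other agent."""
    def expected_payoff(my_action):
        return sum(prob * PAYOFF[my_action][other]
                   for other, prob in predicted_policy.items())
    return max(PAYOFF, key=expected_payoff)

history = ["left", "left", "right", "left"]  # other agent's prior actions
prediction = infer_other_policy(history)
action = choose_action(prediction)
print(prediction, action)
```

Keeping prediction and decision separate mirrors the two-module structure described above: the decision step only ever sees the prediction, never the other agent's internals.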

“The challenge was thinking about infinity. We had to use a lot of different mathematical tools to enable that, and make some assumptions to get it to work in practice,” Kim says.

Winning in the long term

They tested their approach against other multiagent reinforcement learning frameworks in several different scenarios, including a pair of robots fighting sumo-style and a battle pitting two 25-agent teams against one another. In both instances, the AI agents using FURTHER won the games more often.

Since their approach is decentralized, which means the agents learn to win the games independently, it is also more scalable than other methods that require a central computer to control the agents, Kim explains.

The researchers used games to test their approach, but FURTHER could be used to tackle any kind of multiagent problem. For instance, it could be applied by economists seeking to develop sound policy in situations where many interacting entities have behaviors and interests that change over time.

Economics is one application Kim is particularly excited about studying. He also wants to dig deeper into the concept of an active equilibrium and continue enhancing the FURTHER framework.

This research is funded, in part, by the MIT-IBM Watson AI Lab.
